The content of this page is copied directly from AWS blog sites to make it Kindle friendly. Some styles and sections from these pages have been removed so that it renders properly in the 'Article Mode' of the Kindle e-Reader browser. All content on this page is the property of AWS.


EC2 Image Builder and Hands-free Hardening of Windows Images for AWS Elastic Beanstalk

=======================

AWS Elastic Beanstalk takes care of undifferentiated heavy lifting for customers by regularly providing new platform versions to update all Linux-based and Windows Server-based platforms. In addition to the updates to existing software components and support for new features and configuration options incorporated into the Elastic Beanstalk managed Amazon Machine Images (AMI), you may need to install third-party packages, or apply additional controls in order to meet industry or internal security criteria; for example, the Defense Information Systems Agency’s (DISA) Security Technical Implementation Guides (STIG).

In this blog post you will learn how to automate the process of customizing Elastic Beanstalk managed AMIs using EC2 Image Builder and apply the medium and low severity STIG settings to Windows instances whenever new platform versions are released.

You can extend the solution in this blog post to go beyond system hardening. EC2 Image Builder allows you to execute scripts that define the custom configuration for an image, known as Components. There are over 20 Amazon managed Components that you can use. You can also create your own and even share them with others.

These services are discussed in this blog post:

EC2 Image Builder simplifies the building, testing, and deployment of virtual machine and container images.

Amazon EventBridge is a serverless event bus that simplifies the process of building event-driven architectures.

AWS Lambda lets you run code without provisioning or managing servers.

AWS Systems Manager Parameter Store provides secure, hierarchical storage for configuration data, and secrets.

AWS Elastic Beanstalk is an easy-to-use service for deploying and scaling web applications and services.

Prerequisites

This solution has the following prerequisites:

AWS SAM CLI to deploy the solution. See the Installing the AWS SAM CLI page for instructions if needed.

Either the AWS Command Line Interface (AWS CLI) or the AWS Tools for PowerShell should be installed and configured on your machine. This blog post provides equivalent commands when applicable.

Microsoft .NET 5 SDK to compile the included Lambda functions

Docker Desktop to create the container image used to publish the Lambda function

All of the code necessary to deploy the solution is available in the aws-samples/elastic-beanstalk-image-pipeline-trigger GitHub repository. The repository details the solution’s codebase, and the “Deploying the Solution” section walks through the deployment process. Let’s start with a walkthrough of the solution’s design.

Overview of solution

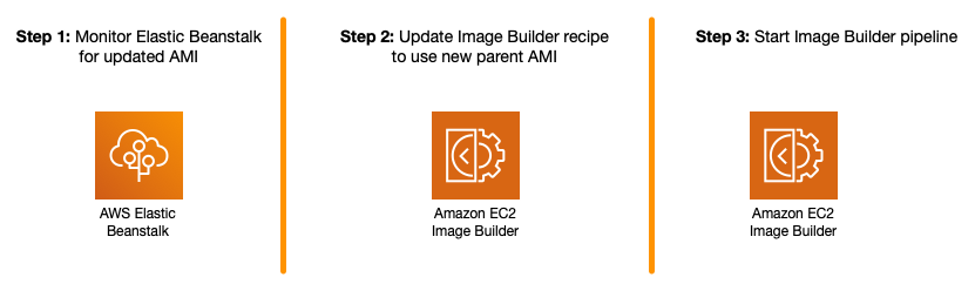

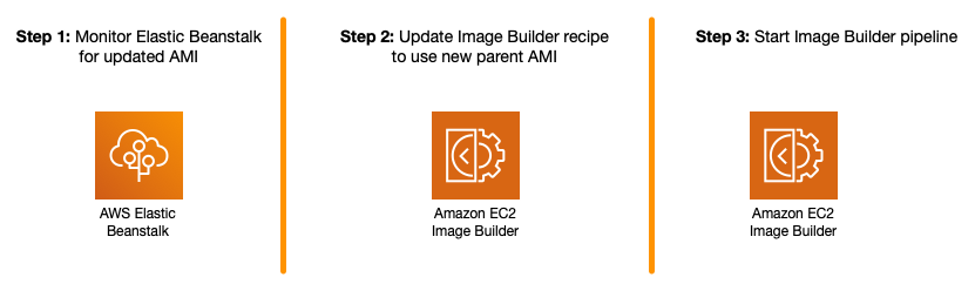

The solution automates the following three steps.

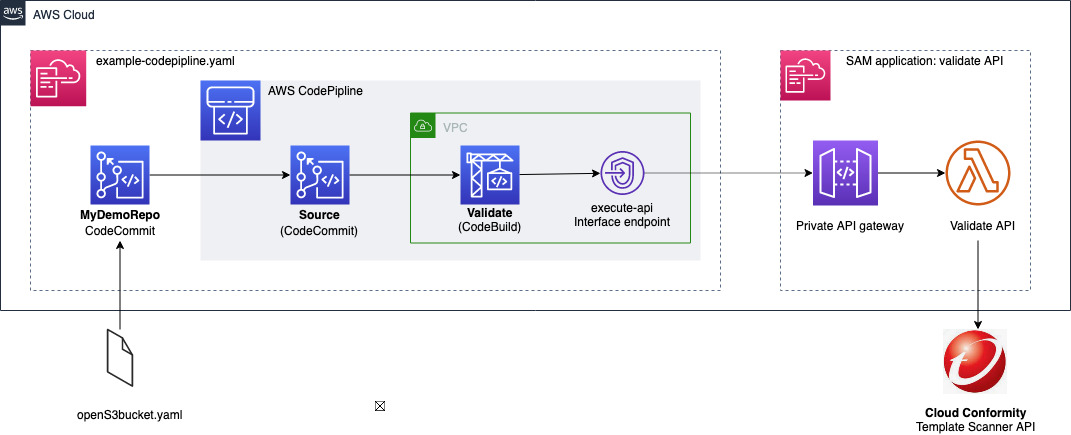

Figure 1 – Steps being automated

The Image Builder Pipeline launches an EC2 instance using the Elastic Beanstalk managed AMI, hardens the image using EC2 Image Builder’s STIG Medium Component, and outputs a new AMI that application teams can use to create their Elastic Beanstalk Environments.

Figure 2 – EC2 Image Builder Pipeline Steps

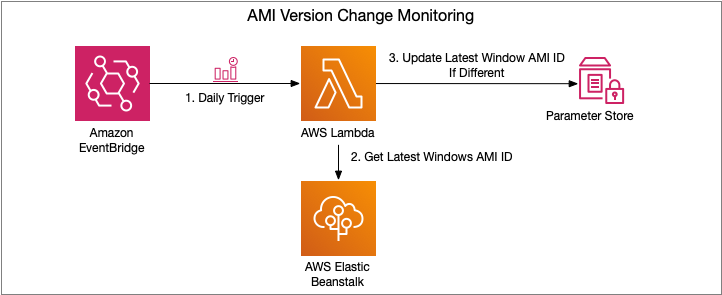

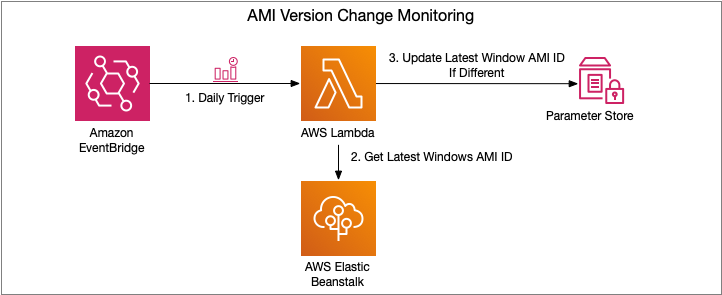

To automate Step 1, an Amazon EventBridge rule triggers an AWS Lambda function that gets the latest AMI ID for the Elastic Beanstalk platform in use and keeps the Parameter Store parameter up to date.

Figure 3 – AMI Version Change Monitoring Flow
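The monitoring logic of Step 1 can be sketched in a few lines. The solution's own Lambda functions are written in .NET, so the Python below is only an illustration and not the published implementation. The function names are hypothetical, and the sketch assumes the AMI is read from the `CustomAmiList` returned by Elastic Beanstalk's DescribePlatformVersion API, which may differ from how the published function selects the image. The `eb` and `ssm` arguments are expected to behave like boto3 `elasticbeanstalk` and `ssm` clients.

```python
def pick_ami(platform_description, virtualization_type="hvm"):
    """Return the first image ID of the requested virtualization type, or None.

    `platform_description` is the `PlatformDescription` dict returned by
    Elastic Beanstalk's DescribePlatformVersion API.
    """
    for entry in platform_description.get("CustomAmiList", []):
        if entry.get("VirtualizationType") == virtualization_type:
            return entry.get("ImageId")
    return None


def needs_update(stored_ami_id, latest_ami_id):
    """True when an AMI ID was found and it differs from the stored one."""
    return latest_ami_id is not None and latest_ami_id != stored_ami_id


def sync_parameter(eb, ssm, platform_arn, parameter_name):
    """Write the platform's AMI ID to Parameter Store only when it changed.

    Returns the AMI ID that was written, or None when nothing changed.
    """
    description = eb.describe_platform_version(PlatformArn=platform_arn)
    latest = pick_ami(description["PlatformDescription"])
    try:
        stored = ssm.get_parameter(Name=parameter_name)["Parameter"]["Value"]
    except ssm.exceptions.ParameterNotFound:
        stored = None  # first run: the parameter does not exist yet
    if not needs_update(stored, latest):
        return None
    ssm.put_parameter(Name=parameter_name, Value=latest, Type="String", Overwrite=True)
    return latest
```

Writing the parameter only when the value differs keeps the downstream pipeline from starting on days when no new platform version has been released.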

Steps 2 and 3 are triggered when the Parameter Store parameter changes. An EventBridge rule triggers a Lambda function, which creates a new EC2 Image Builder Recipe, updates the EC2 Image Builder Pipeline to use this new recipe, and starts a new execution of the pipeline.

Figure 4 – EC2 Image Builder Pipeline Execution Flow
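As a rough illustration of Steps 2 and 3, the sketch below performs the same three actions in Python: create a recipe on the new parent AMI, point the pipeline at it, and start an execution. The published function is written in .NET and may be structured differently. `rebuild_image`, `bump_patch`, and every argument name are hypothetical, and bumping the patch version for each new recipe is an assumption of this sketch. The `imagebuilder` argument is expected to behave like a boto3 `imagebuilder` client.

```python
import uuid


def bump_patch(semantic_version):
    """Increment the patch part of a 'major.minor.patch' version string."""
    major, minor, patch = (int(part) for part in semantic_version.split("."))
    return f"{major}.{minor}.{patch + 1}"


def rebuild_image(imagebuilder, *, recipe_name, current_version, parent_ami,
                  component_arns, pipeline_arn, infrastructure_arn):
    """Create a recipe on the new parent AMI, update the pipeline, start a run.

    Returns the ARN of the newly created recipe.
    """
    recipe = imagebuilder.create_image_recipe(
        name=recipe_name,
        semanticVersion=bump_patch(current_version),
        parentImage=parent_ami,
        components=[{"componentArn": arn} for arn in component_arns],
        clientToken=str(uuid.uuid4()),  # idempotency token required by the API
    )
    recipe_arn = recipe["imageRecipeArn"]
    imagebuilder.update_image_pipeline(
        imagePipelineArn=pipeline_arn,
        imageRecipeArn=recipe_arn,
        infrastructureConfigurationArn=infrastructure_arn,
        clientToken=str(uuid.uuid4()),
    )
    imagebuilder.start_image_pipeline_execution(
        imagePipelineArn=pipeline_arn,
        clientToken=str(uuid.uuid4()),
    )
    return recipe_arn
```

A new recipe version is needed because an existing recipe version cannot be edited in place, which is why the sketch creates a recipe rather than modifying one.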

If you would also like to store the ID of the newly created AMI, see the Tracking the latest server images in Amazon EC2 Image Builder pipelines blog post on how to use Parameter Store for this purpose. This will enable you to notify teams that a new AMI is available for consumption.

Let’s dive a bit deeper into each of these pieces and how to deploy the solution.

Walkthrough

The following are the high-level steps we will be walking through in the rest of this post.

Deploy the SAM template that will provision all pieces of the solution. Check out the Using container image support for AWS Lambda with AWS SAM blog post for more details.

Invoke the AMI version monitoring AWS Lambda function. The EventBridge rule is configured for a daily trigger, and for the purposes of this blog post we do not want to wait that long before seeing the pipeline in action.

View the details of the resultant image after the Image Builder Pipeline completes

Deploying the Solution

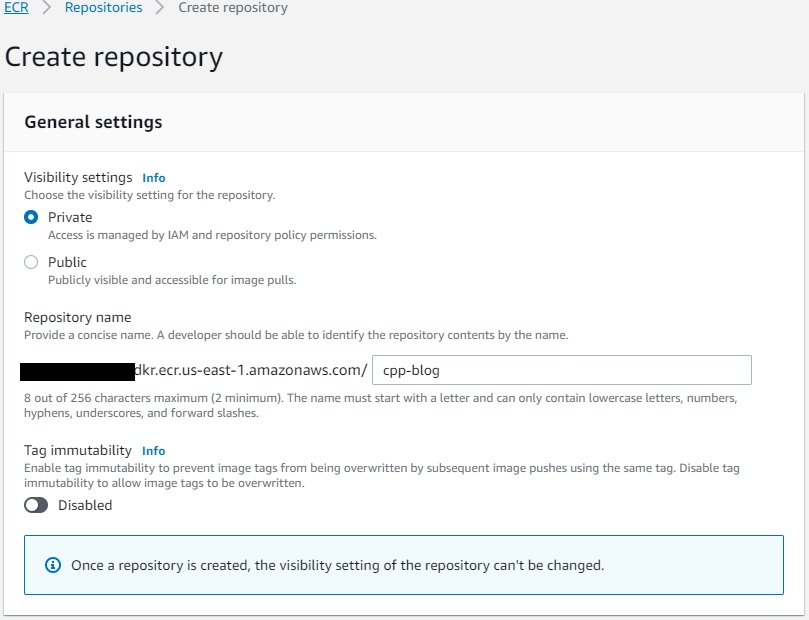

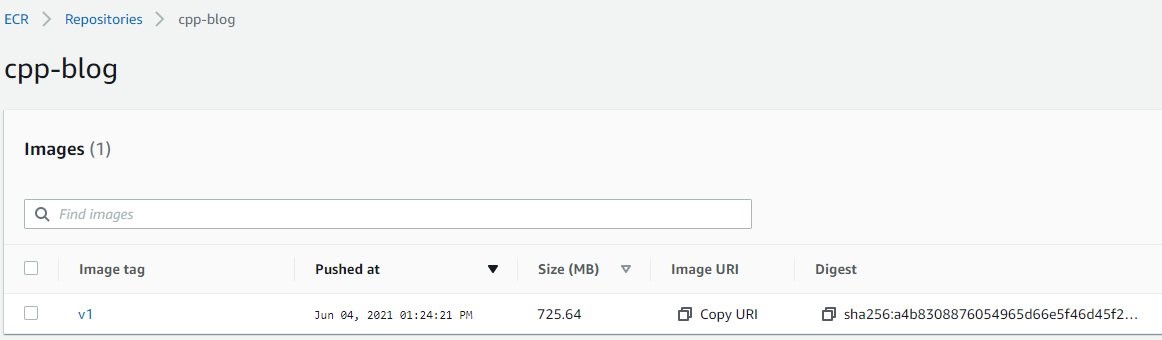

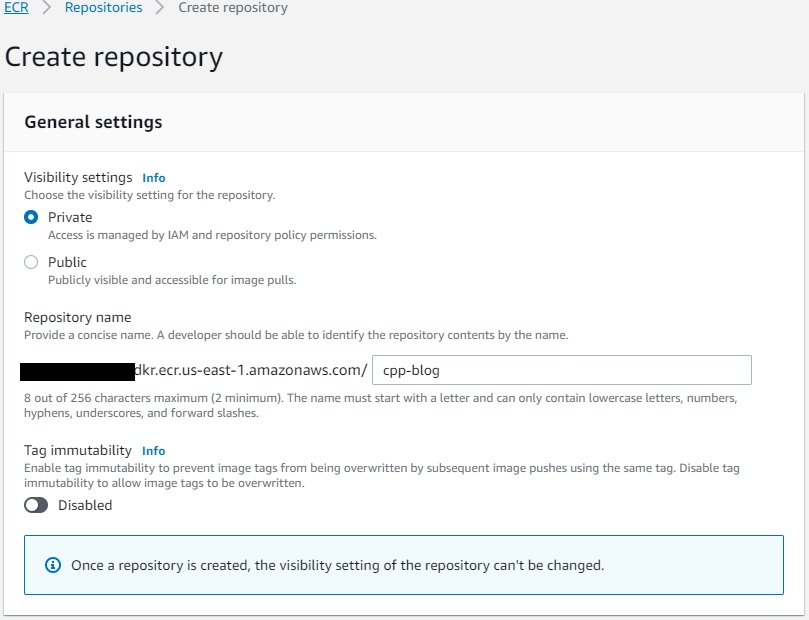

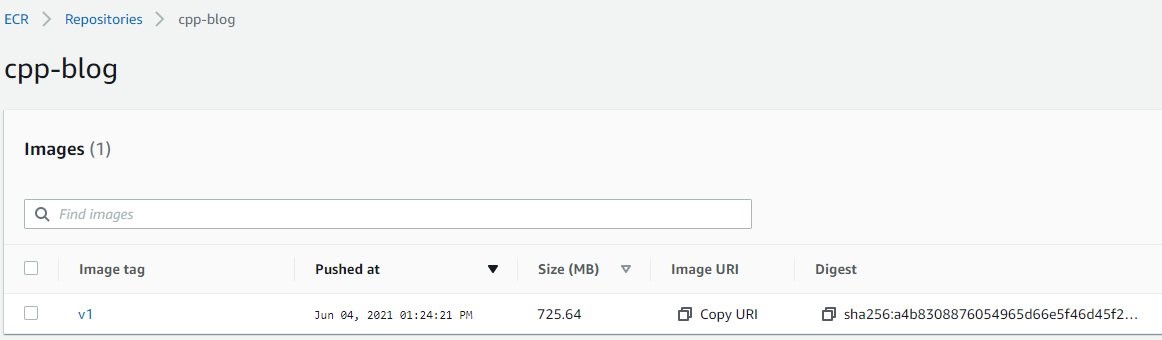

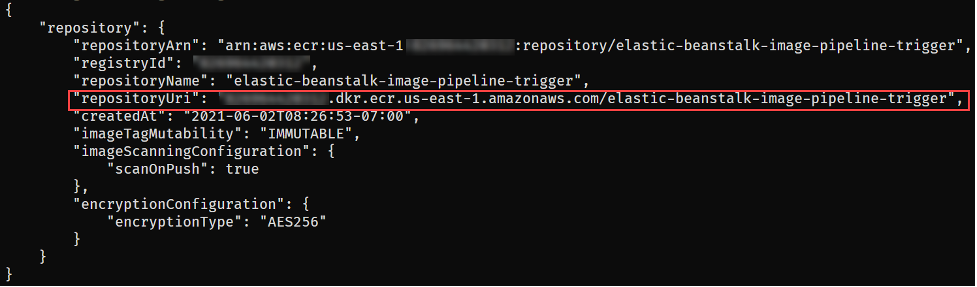

The first step to deploying the solution is to create the Elastic Container Registry Repository that will be used to upload the image artifacts created. You can do so using the following AWS CLI or AWS Tools for PowerShell command:

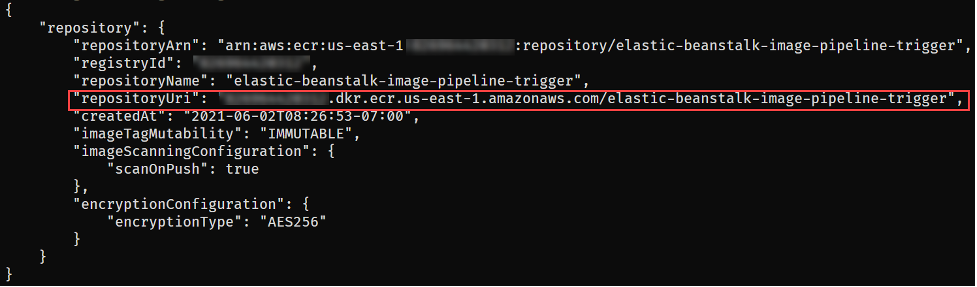

aws ecr create-repository --repository-name elastic-beanstalk-image-pipeline-trigger --image-tag-mutability IMMUTABLE --image-scanning-configuration scanOnPush=true --region us-east-1

New-ECRRepository -RepositoryName elastic-beanstalk-image-pipeline-trigger -ImageTagMutability IMMUTABLE -ImageScanningConfiguration_ScanOnPush $True -Region us-east-1

This will return output similar to the following. Take note of the repositoryUri as you will be using that in an upcoming step.

Figure 5 – ECR repository creation output

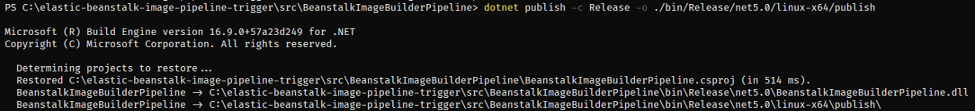

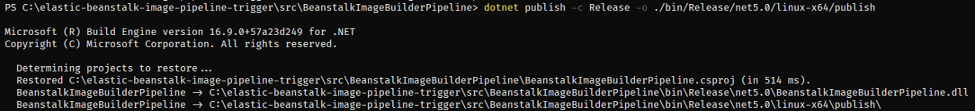

With the repository configured, you are ready to get the solution. Either download or clone the project’s aws-samples/elastic-beanstalk-image-pipeline-trigger GitHub repository to a local directory. Once you have the project downloaded, you can compile it using the following command from the project’s src/BeanstalkImageBuilderPipeline directory.

dotnet publish -c Release -o ./bin/Release/net5.0/linux-x64/publish

The output should look like:

Figure 6 – .NET project compilation output

Now that the project is compiled, you are ready to create the container image by executing the following SAM CLI command.

sam build --template-file ./serverless.template

Figure 7 – SAM build command output

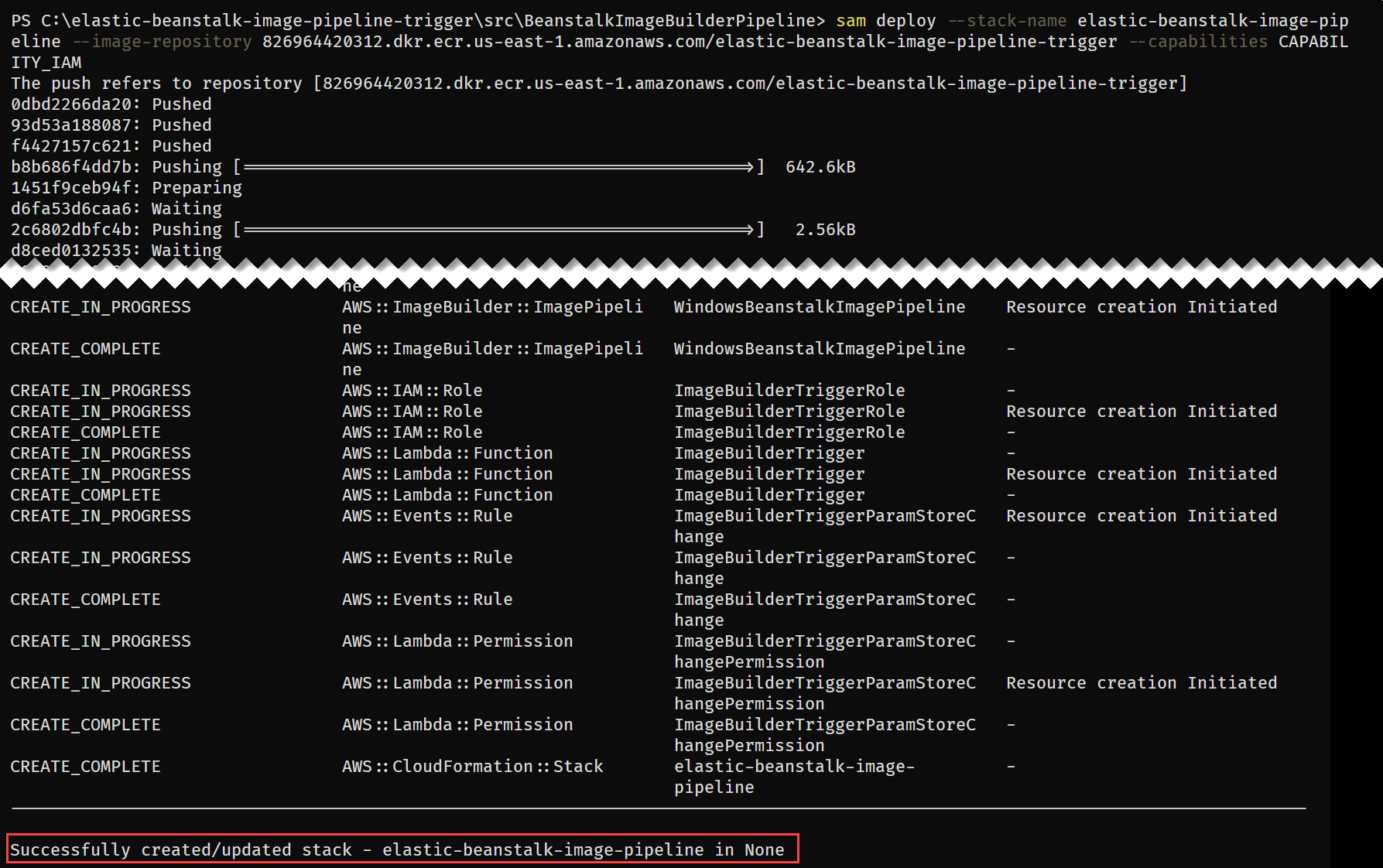

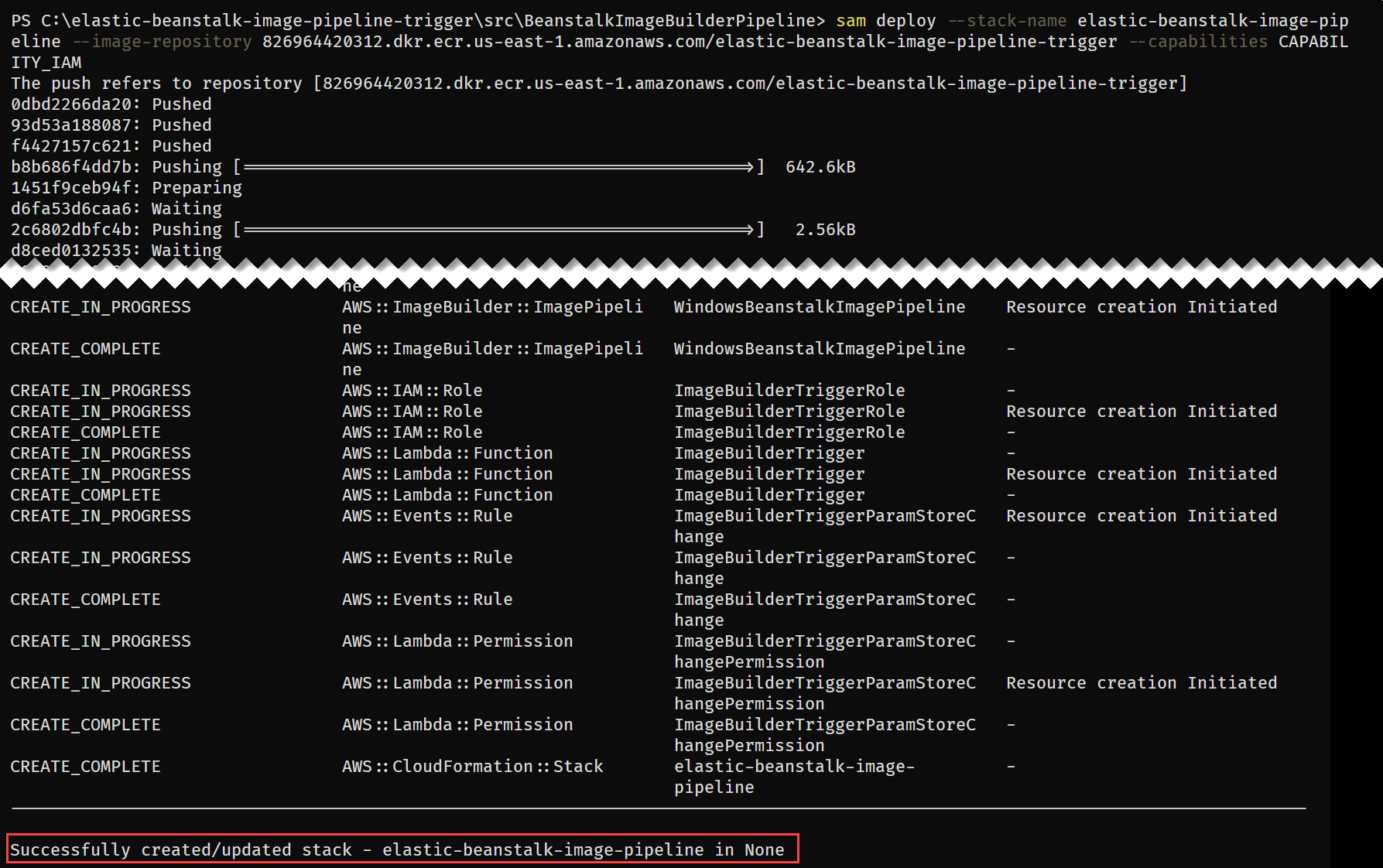

Next, deploy the SAM template with the following command, replacing REPOSITORY_URL with the URL of the ECR repository created earlier:

sam deploy --stack-name elastic-beanstalk-image-pipeline --image-repository <REPOSITORY_URL> --capabilities CAPABILITY_IAM --region us-east-1

The SAM CLI will both push the container image and create the CloudFormation Stack, deploying all resources needed for this solution. The deployment output will look similar to:

Figure 8 – SAM deploy command output

With the CloudFormation Stack completed, you are ready to move on to starting the pipeline to create a custom Windows AMI with the medium DISA STIG applied.

Invoke AMI ID Monitoring Lambda

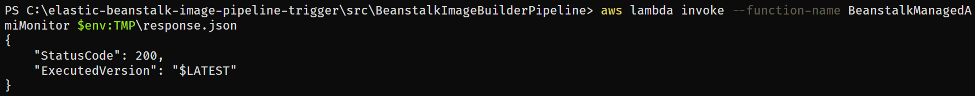

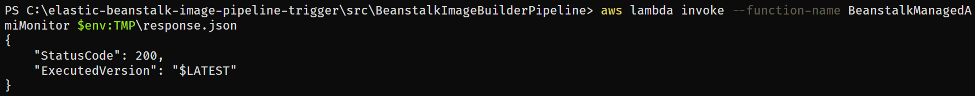

Let’s start by invoking the Lambda function, depicted in Figure 3, responsible for ensuring that the latest Elastic Beanstalk managed AMI ID is stored in Parameter Store.

aws lambda invoke --function-name BeanstalkManagedAmiMonitor response.json --region us-east-1

Invoke-LMFunction -FunctionName BeanstalkManagedAmiMonitor -Region us-east-1

Figure 9 – Lambda invocation output

The Lambda’s CloudWatch log group contains the BeanstalkManagedAmiMonitor function’s output. For example, below you can see that the SSM parameter is being updated with the new AMI ID.

Figure 10 – BeanstalkManagedAmiMonitor Lambda’s log

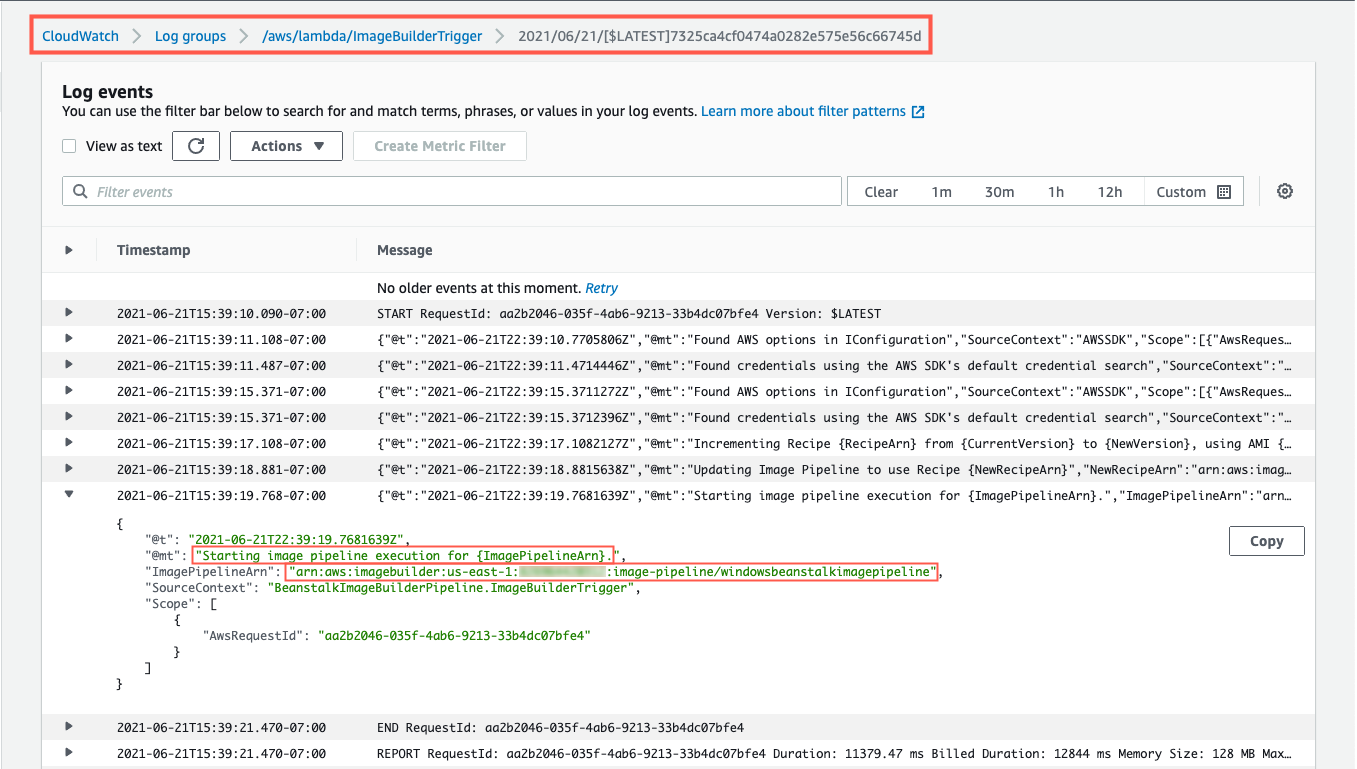

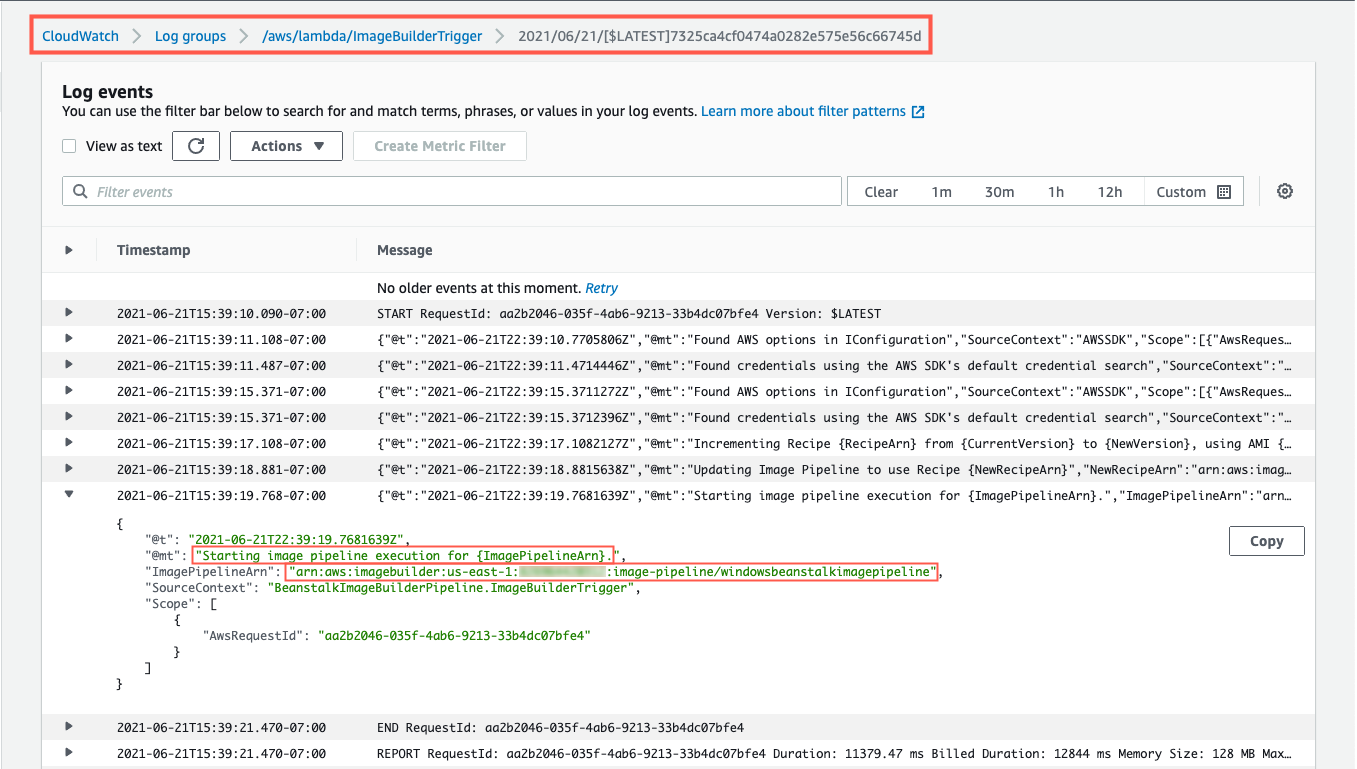

After this Lambda function updates the Parameter Store parameter with the latest AMI ID, the EC2 Image Builder recipe will be updated to use this AMI ID as the parent image, and the Image Builder pipeline will be started. You can see evidence of this by going to the ImageBuilderTrigger Lambda function’s CloudWatch log group. Below you can see a log entry with the message “Starting image pipeline execution…”.

Figure 11 – ImageBuilderTrigger Lambda’s log

To keep track of the status of the image creation, navigate to the EC2 Image Builder console, and select the 1.0.1 version of the demo-beanstalk-image.

Figure 12 – EC2 Image Builder images list

This will display the details for that build. Keep an eye on the status. While the image is being created, you will see the status as “Building”. Applying the latest Windows updates and DISA STIG can take about an hour.

Figure 13 – EC2 Image Builder image build version details

Once the AMI has been created, the status will change to “Available”. Click on the version column’s link to see the details of that version.

Figure 14 – EC2 Image Builder image build version details
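If you would rather wait for the build from a script than watch the console, a polling loop along these lines is one option. This is a sketch, not part of the published solution: `wait_for_image` and its parameters are hypothetical, and `imagebuilder` is expected to behave like a boto3 `imagebuilder` client. Note that the API reports statuses in upper case (for example `BUILDING` and `AVAILABLE`), while the console shows them as “Building” and “Available”.

```python
import time

# Statuses after which the build will not progress any further.
TERMINAL_STATUSES = {"AVAILABLE", "FAILED", "CANCELLED", "DEPRECATED", "DELETED"}


def wait_for_image(imagebuilder, image_build_version_arn, poll_seconds=60,
                   max_polls=120, sleep=time.sleep):
    """Poll GetImage until the build reaches a terminal status.

    Returns the final status string. Raises TimeoutError if the build is
    still in progress after `max_polls` polls.
    """
    for _ in range(max_polls):
        response = imagebuilder.get_image(imageBuildVersionArn=image_build_version_arn)
        status = response["image"]["state"]["status"]
        if status in TERMINAL_STATUSES:
            return status
        sleep(poll_seconds)
    raise TimeoutError(f"image build still in progress after {max_polls} polls")
```

With the defaults, the loop checks once a minute for up to two hours, which leaves headroom over the roughly one hour mentioned above.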

You can use the AMI ID listed when creating an Elastic Beanstalk application. When using the create new environment wizard, you can modify the capacity settings to specify this custom AMI ID. The automation is configured to run on a daily basis; we invoked the Lambda function directly only for the purposes of this post.

Cleaning up

To avoid incurring future charges, delete the resources using the following commands, replacing the AWS_ACCOUNT_NUMBER placeholder with the appropriate value.

aws imagebuilder delete-image --image-build-version-arn arn:aws:imagebuilder:us-east-1:<AWS_ACCOUNT_NUMBER>:image/demo-beanstalk-image/1.0.1/1 --region us-east-1

aws imagebuilder delete-image-pipeline --image-pipeline-arn arn:aws:imagebuilder:us-east-1:<AWS_ACCOUNT_NUMBER>:image-pipeline/windowsbeanstalkimagepipeline --region us-east-1

aws imagebuilder delete-image-recipe --image-recipe-arn arn:aws:imagebuilder:us-east-1:<AWS_ACCOUNT_NUMBER>:image-recipe/demo-beanstalk-image/1.0.1 --region us-east-1

aws cloudformation delete-stack --stack-name elastic-beanstalk-image-pipeline --region us-east-1

aws cloudformation wait stack-delete-complete --stack-name elastic-beanstalk-image-pipeline --region us-east-1

aws ecr delete-repository --repository-name elastic-beanstalk-image-pipeline-trigger --force --region us-east-1

Remove-EC2IBImage -ImageBuildVersionArn arn:aws:imagebuilder:us-east-1:<AWS_ACCOUNT_NUMBER>:image/demo-beanstalk-image/1.0.1/1 -Region us-east-1

Remove-EC2IBImagePipeline -ImagePipelineArn arn:aws:imagebuilder:us-east-1:<AWS_ACCOUNT_NUMBER>:image-pipeline/windowsbeanstalkimagepipeline -Region us-east-1

Remove-EC2IBImageRecipe -ImageRecipeArn arn:aws:imagebuilder:us-east-1:<AWS_ACCOUNT_NUMBER>:image-recipe/demo-beanstalk-image/1.0.1 -Region us-east-1

Remove-CFNStack -StackName elastic-beanstalk-image-pipeline -Region us-east-1

Wait-CFNStack -StackName elastic-beanstalk-image-pipeline -Region us-east-1

Remove-ECRRepository -RepositoryName elastic-beanstalk-image-pipeline-trigger -IgnoreExistingImages $True -Region us-east-1
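The three Image Builder ARNs used in the cleanup commands share one pattern, so they can be generated from the account number rather than edited by hand. The helper below is a small sketch with hypothetical function names; the resource names, version, and Region defaults are the ones used in the commands above.

```python
def imagebuilder_arn(account_id, resource, region="us-east-1"):
    """Build an EC2 Image Builder ARN for the given resource path."""
    return f"arn:aws:imagebuilder:{region}:{account_id}:{resource}"


def cleanup_arns(account_id, region="us-east-1",
                 image_name="demo-beanstalk-image", version="1.0.1", build=1,
                 pipeline_name="windowsbeanstalkimagepipeline"):
    """Return the image, pipeline, and recipe ARNs that the cleanup deletes."""
    return {
        "image": imagebuilder_arn(
            account_id, f"image/{image_name}/{version}/{build}", region),
        "pipeline": imagebuilder_arn(
            account_id, f"image-pipeline/{pipeline_name}", region),
        "recipe": imagebuilder_arn(
            account_id, f"image-recipe/{image_name}/{version}", region),
    }
```

If the pipeline has run more than once, the version and build number in your account will differ from these defaults, so check them in the EC2 Image Builder console first.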

Conclusion

In this post, you learned how to leverage EC2 Image Builder, Lambda, and EventBridge to automate the creation of a Windows AMI with the medium DISA STIGs applied that can be used for Elastic Beanstalk environments. Don’t stop there, though: you can apply these same techniques whenever you need to base recipes on AMIs whose image origin is not available in EC2 Image Builder.

Further Reading

EC2 Image Builder supports a number of image origins out of the box; see the Automate OS Image Build Pipelines with EC2 Image Builder blog post for more details. EC2 Image Builder is also not limited to just creating AMIs. The Build and Deploy Docker Images to AWS using EC2 Image Builder blog post shows you how to build Docker images that can be utilized throughout your organization.

These resources can provide additional information on the topics touched on in this article:

A simpler deployment experience with AWS SAM CLI

AWS Tools for PowerShell is now generally available with version 4.0

.NET 5 on AWS

Carlos Santos

Carlos Santos is a Microsoft Specialist Solutions Architect with Amazon Web Services (AWS). In his role, Carlos helps customers through their cloud journey, leveraging his experience with application architecture, and distributed system design.

Deploy data lake ETL jobs using CDK Pipelines

=======================

This post is co-written with Isaiah Grant, Cloud Consultant at 2nd Watch.

Many organizations are building data lakes on AWS, which provides the most secure, scalable, comprehensive, and cost-effective portfolio of services. Like any application development project, a data lake must answer a fundamental question: “What is the DevOps strategy?” Defining a DevOps strategy for a data lake requires extensive planning and multiple teams. This typically requires multiple development and test cycles before maturing enough to support a data lake in a production environment. If an organization doesn’t have the right people, resources, and processes in place, this can quickly become daunting.

What if your data engineering team uses basic building blocks to encapsulate data lake infrastructure and data processing jobs? This is where CDK Pipelines brings the full benefit of infrastructure as code (IaC). CDK Pipelines is a high-level construct library within the AWS Cloud Development Kit (AWS CDK) that makes it easy to set up a continuous deployment pipeline for your AWS CDK applications. The AWS CDK provides essential automation for your release pipelines so that your development and operations team remain agile and focus on developing and delivering applications on the data lake.

In this post, we discuss a centralized deployment solution utilizing CDK Pipelines for data lakes. This implements a DevOps-driven data lake that delivers benefits such as continuous delivery of data lake infrastructure, data processing, and analytical jobs through a configuration-driven multi-account deployment strategy. Let’s dive in!

Data lakes on AWS

A data lake is a centralized repository where you can store all of your structured and unstructured data at any scale. Store your data as is, without having to first structure it, and run different types of analytics, from dashboards and visualizations to big data processing, real-time analytics, and machine learning, in order to guide better decisions. To further explore data lakes, refer to What is a data lake?

We design a data lake with the following elements:

Secure data storage

Data cataloging in a central repository

Data movement

Data analysis

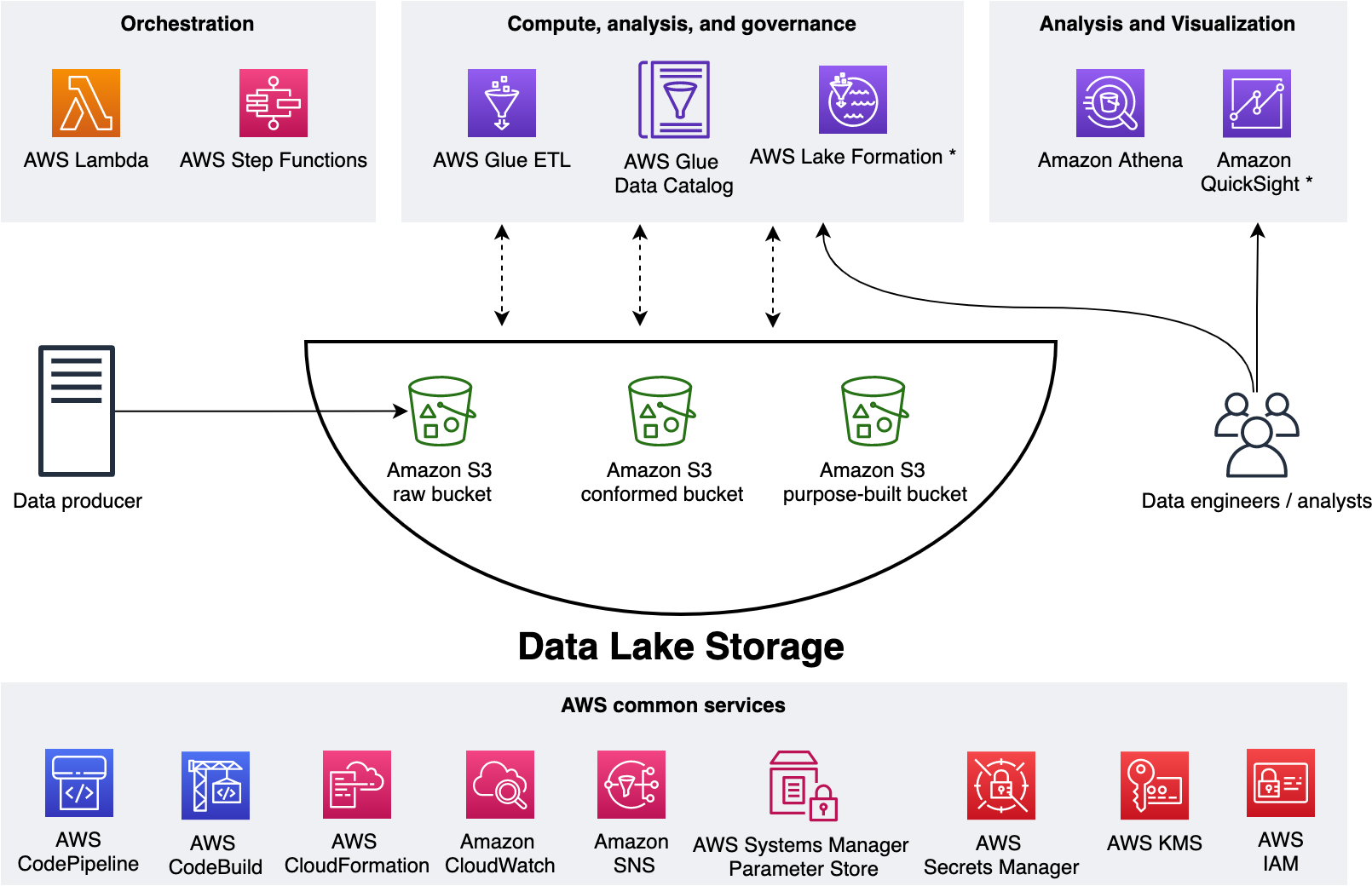

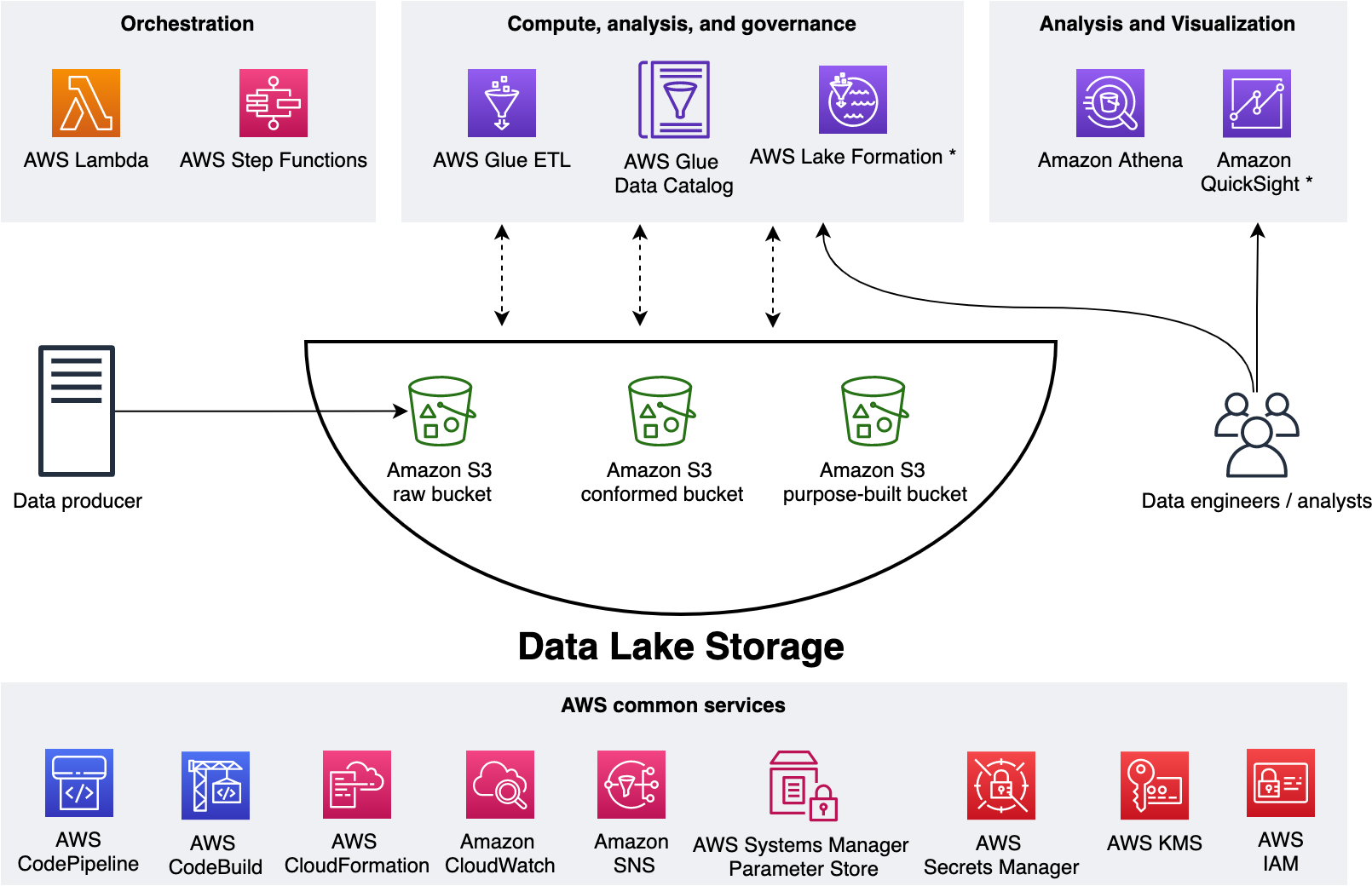

The following figure represents our data lake.

We use three Amazon Simple Storage Service (Amazon S3) buckets:

raw – Stores the input data in its original format

conformed – Stores the data that meets the data lake quality requirements

purpose-built – Stores the data that is ready for consumption by applications or data lake consumers

The data lake has a producer where we ingest data into the raw bucket at periodic intervals. We utilize the following tools: AWS Glue processes and analyzes the data. AWS Glue Data Catalog persists metadata in a central repository. AWS Lambda and AWS Step Functions schedule and orchestrate AWS Glue extract, transform, and load (ETL) jobs. Amazon Athena is used for interactive queries and analysis. Finally, we engage various AWS services for logging, monitoring, security, authentication, authorization, alerting, and notification.

A common data lake practice is to have multiple environments such as dev, test, and production. Applying the IaC principle for data lakes brings the benefit of consistent and repeatable runs across multiple environments, self-documenting infrastructure, and greater flexibility with resource management. The AWS CDK offers high-level constructs for use with all of our data lake resources. This simplifies usage and streamlines implementation.

Before exploring the implementation, let’s look more closely at how we utilize our data lake.

The solution

Our goal is to implement a CI/CD solution that automates the provisioning of data lake infrastructure resources and deploys ETL jobs interactively. We accomplish this as follows: 1) applying separation of concerns (SoC) design principle to data lake infrastructure and ETL jobs via dedicated source code repositories, 2) a centralized deployment model utilizing CDK pipelines, and 3) AWS CDK enabled ETL pipelines from the start.

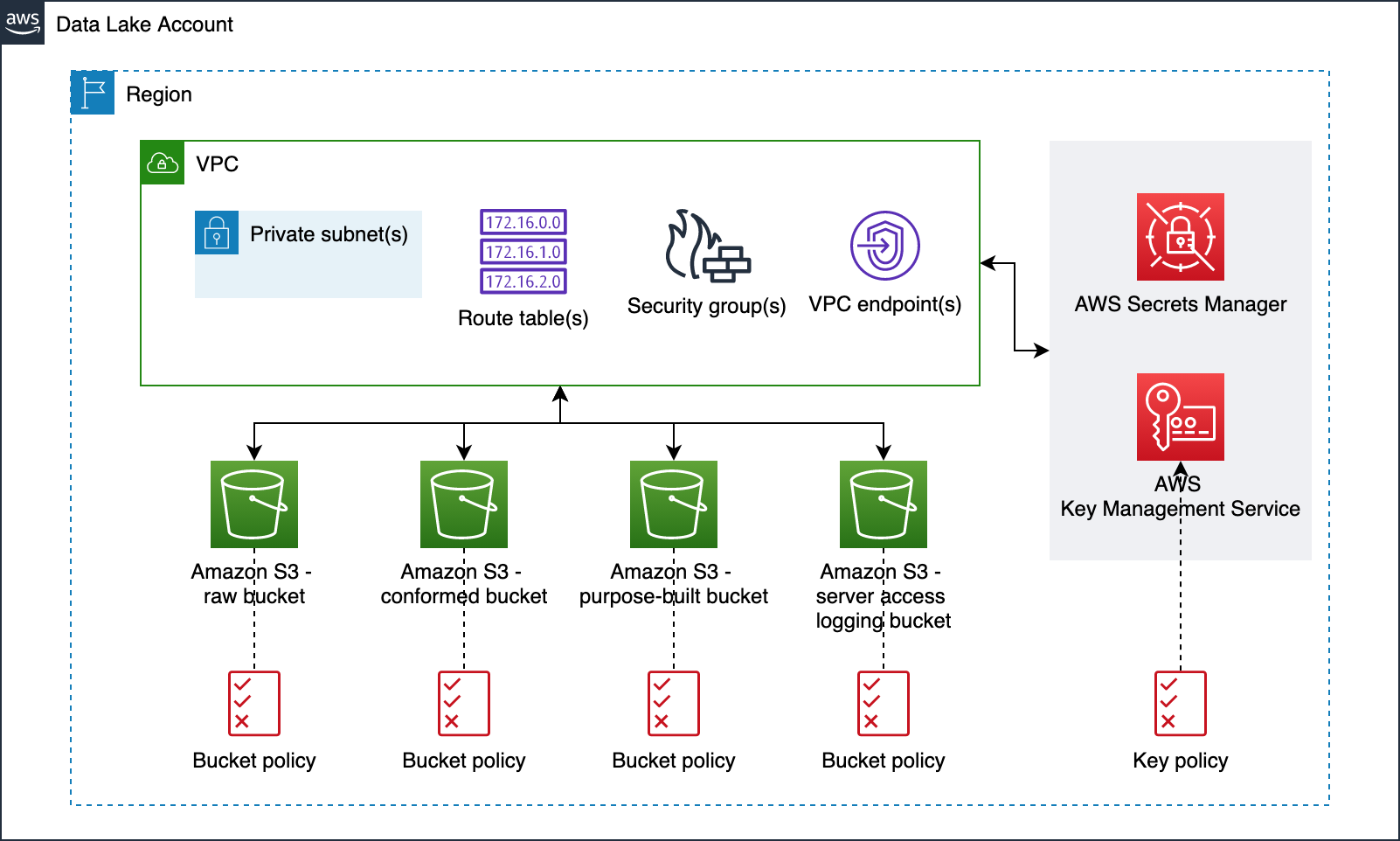

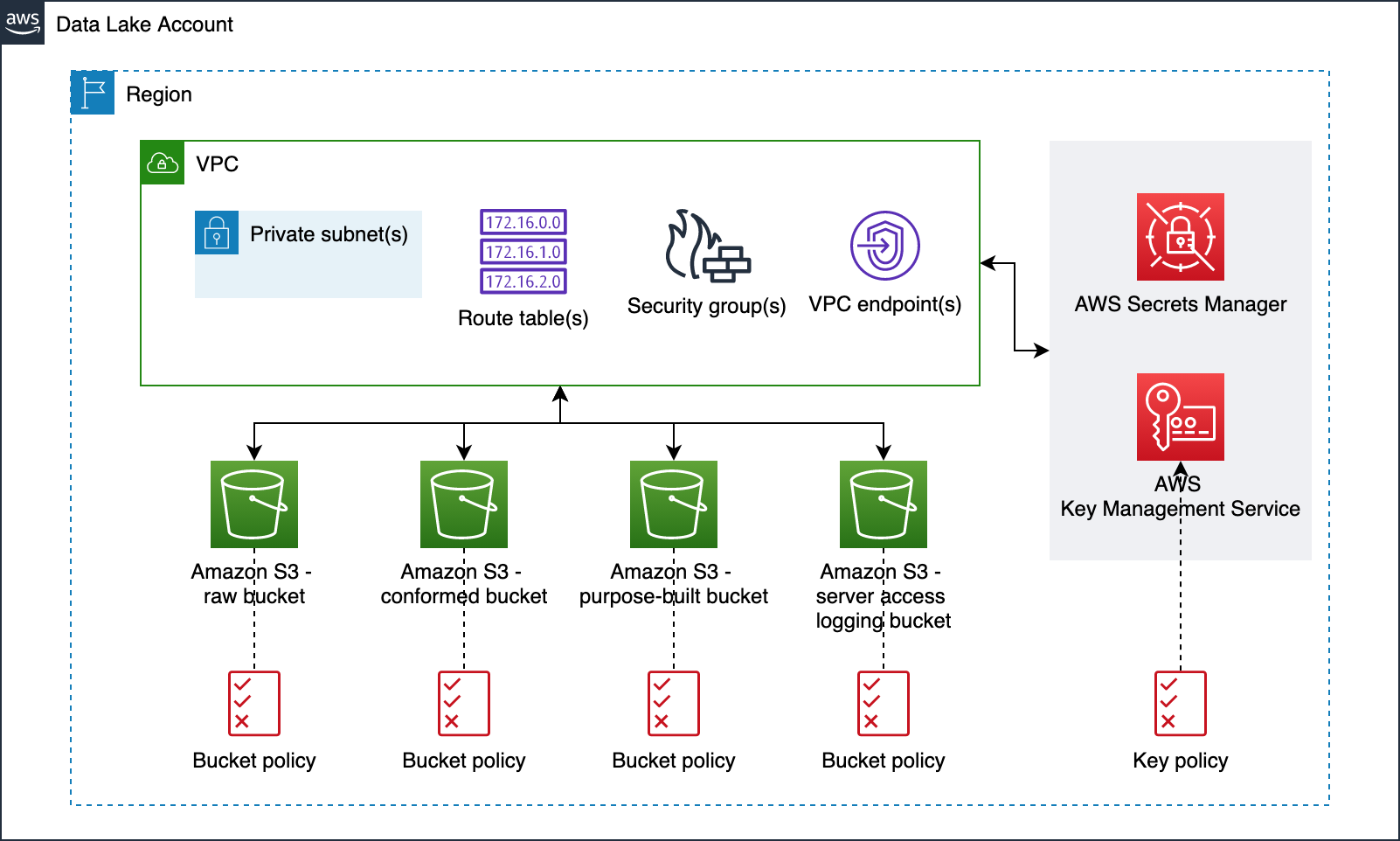

Data lake infrastructure

Our data lake infrastructure provisioning includes Amazon S3 buckets, S3 bucket policies, AWS Key Management Service (KMS) encryption keys, Amazon Virtual Private Cloud (Amazon VPC), subnets, route tables, security groups, VPC endpoints, and secrets in AWS Secrets Manager. The following diagram illustrates this.

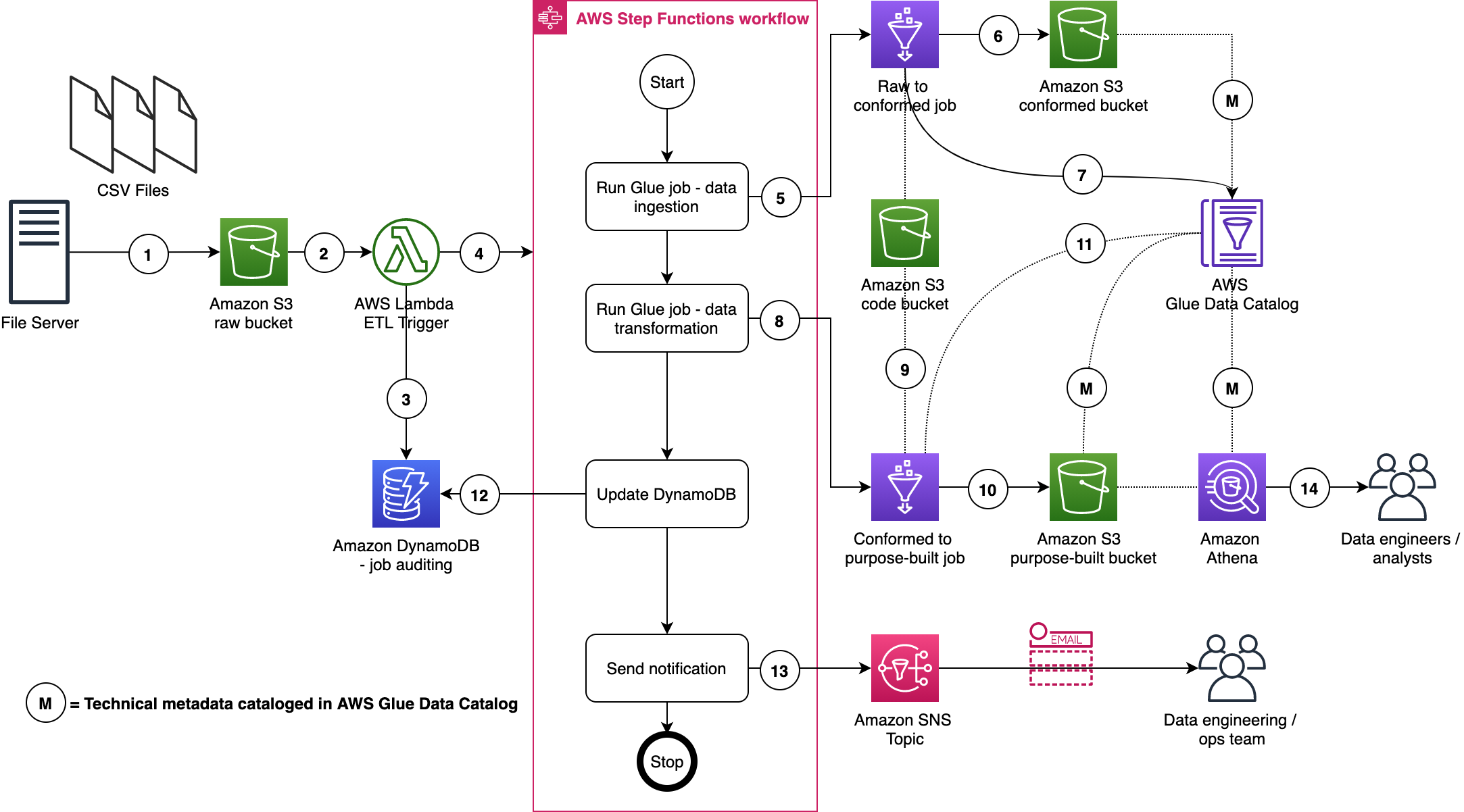

Data lake ETL jobs

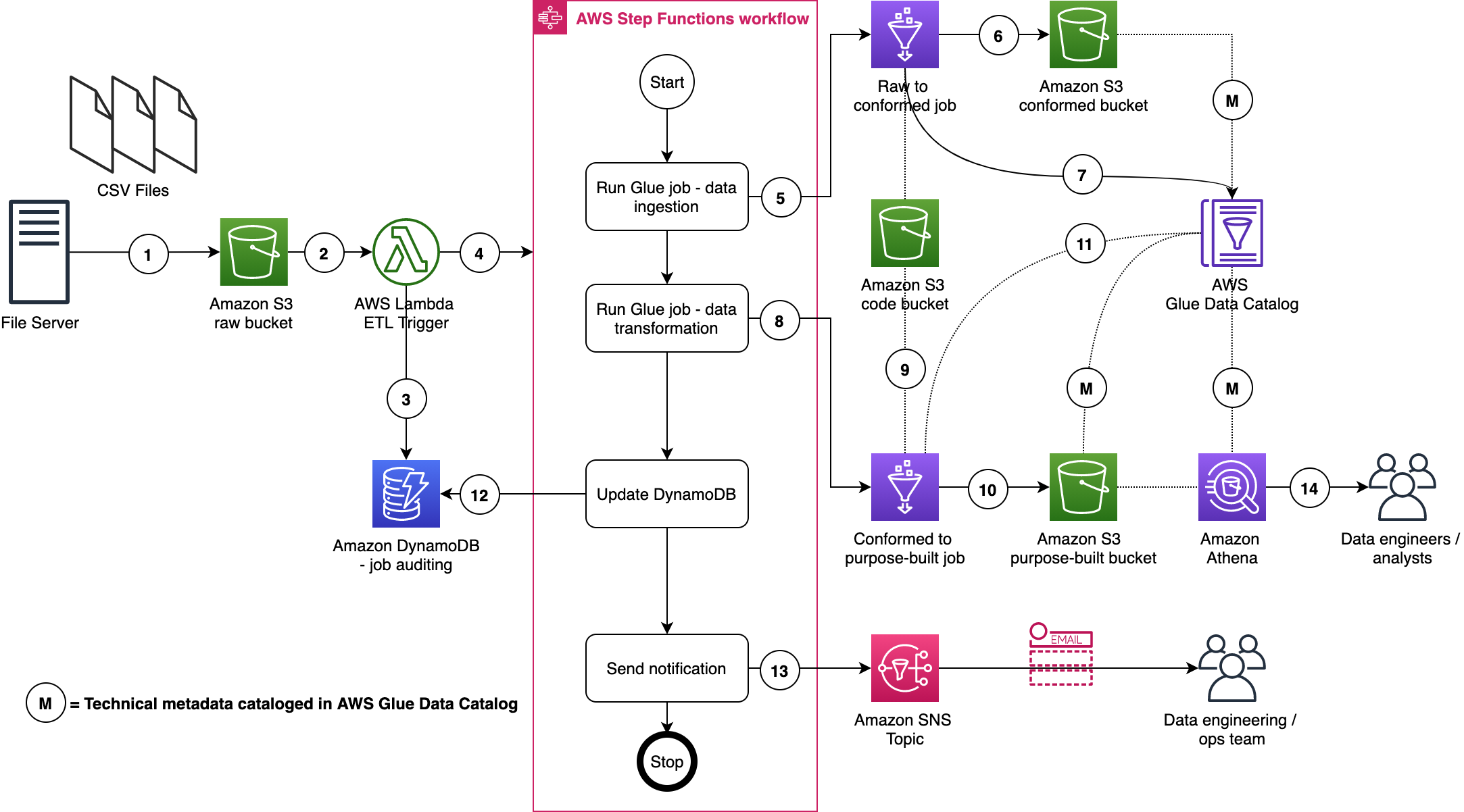

For our ETL jobs, we process New York City TLC Trip Record Data. The following figure displays our ETL process, wherein we run two ETL jobs within a Step Functions state machine.

Here are a few important details:

- A file server uploads files to the S3 raw bucket of the data lake. The file server is a data producer and source for the data lake. We assume that the data is pushed to the raw bucket.

- Amazon S3 triggers an event notification to the Lambda function.

- The function inserts an item in the Amazon DynamoDB table in order to track the file processing state. The first state written marks the start of the AWS Step Functions run.

- The function starts the state machine.

- The state machine runs an AWS Glue job (Apache Spark).

- The job processes input data from the raw zone to the data lake conformed zone. The job also converts CSV input data to Parquet formatted data.

- The job updates the Data Catalog table with the metadata of the conformed Parquet file.

- A second AWS Glue job (Apache Spark) processes the input data from the conformed zone to the purpose-built zone of the data lake.

- The job fetches ETL transformation rules from the Amazon S3 code bucket and transforms the input data.

- The job stores the result in Parquet format in the purpose-built zone.

- The job updates the Data Catalog table with the metadata of the purpose-built Parquet file.

- The job updates the DynamoDB table and updates the job status to completed.

- An Amazon Simple Notification Service (Amazon SNS) notification is sent to subscribers that states the job is complete.

- Data engineers or analysts can now analyze data via Athena.
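The first few steps above (S3 event, audit item, state machine start) can be sketched as follows. This is a simplified illustration rather than the code in the starter kit: the function names, the DynamoDB attribute names, and the shape of the state machine input are all assumptions. The `dynamodb` and `stepfunctions` arguments are expected to behave like the boto3 clients of the same names.

```python
import json
from datetime import datetime, timezone
from urllib.parse import unquote_plus


def build_execution_request(s3_event, state_machine_arn, now=None):
    """Turn an S3 event notification into an audit item and a start request."""
    record = s3_event["Records"][0]["s3"]
    bucket = record["bucket"]["name"]
    # Object keys arrive URL-encoded in S3 event notifications.
    key = unquote_plus(record["object"]["key"])
    started = (now or datetime.now(timezone.utc)).isoformat()
    audit_item = {  # hypothetical attribute names, DynamoDB typed format
        "source_key": {"S": key},
        "source_bucket": {"S": bucket},
        "job_status": {"S": "STARTED"},
        "started_at": {"S": started},
    }
    execution = {
        "stateMachineArn": state_machine_arn,
        "input": json.dumps({"bucket": bucket, "key": key}),
    }
    return audit_item, execution


def handle_upload(event, dynamodb, stepfunctions, table_name, state_machine_arn):
    """Record the new file in the audit table, then start the state machine."""
    audit_item, execution = build_execution_request(event, state_machine_arn)
    dynamodb.put_item(TableName=table_name, Item=audit_item)
    return stepfunctions.start_execution(**execution)
```

Keeping the event parsing in a pure function separate from the AWS calls makes the logic easy to test without any AWS resources.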

We discuss data formats, AWS Glue jobs, ETL transformation logic, data cataloging, auditing, notification, orchestration, and data analysis in more detail in the AWS CDK Pipelines for Data Lake ETL Deployment GitHub repository, which is described in a later section.

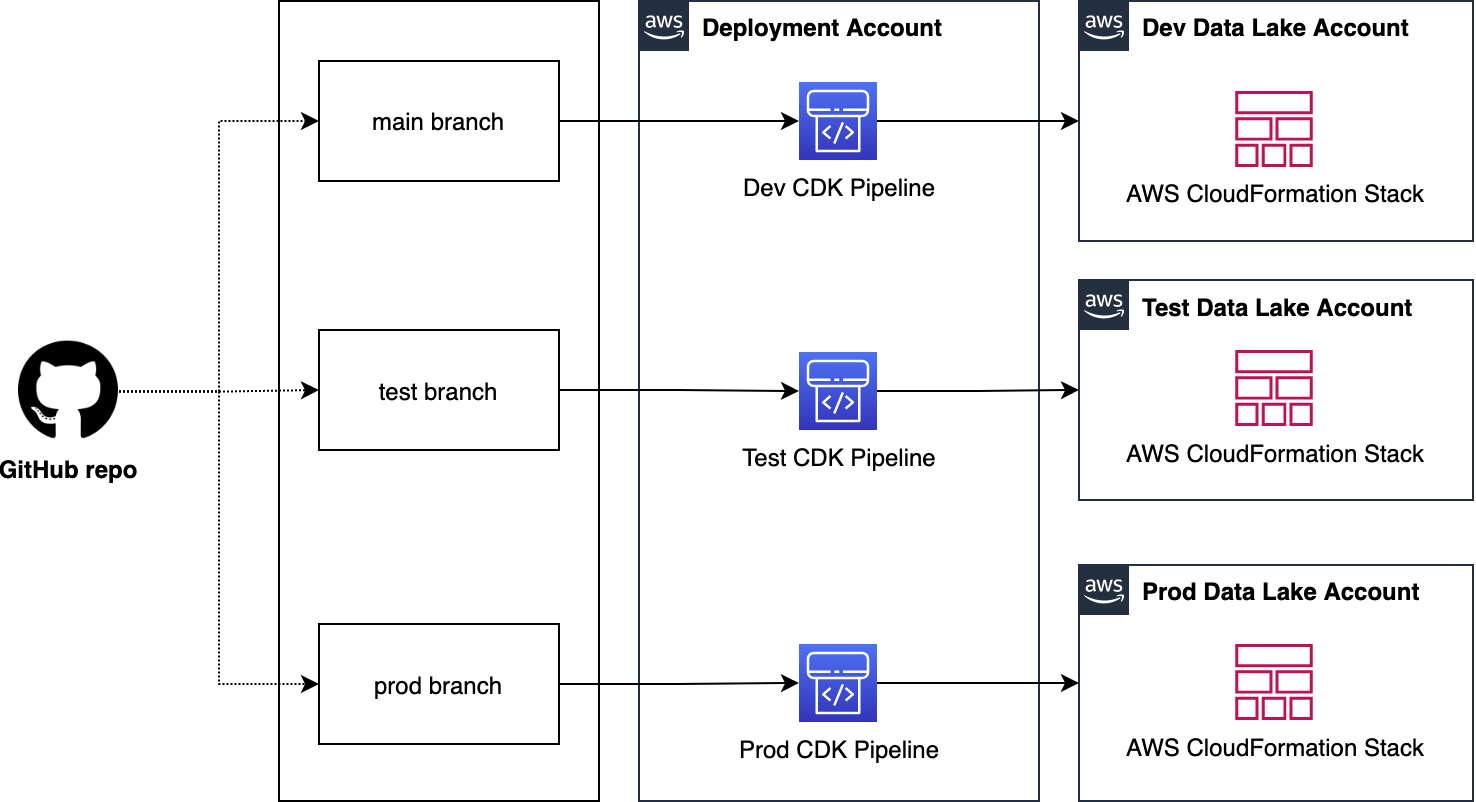

Centralized deployment

Now that we have data lake infrastructure and ETL jobs ready, let’s define our deployment model. This model is based on the following design principles:

A dedicated AWS account to run CDK pipelines.

One or more AWS accounts into which the data lake is deployed.

The data lake infrastructure has a dedicated source code repository. Typically, data lake infrastructure is a one-time deployment and rarely evolves. Therefore, a dedicated code repository provides a landing zone for your data lake.

Each ETL job has a dedicated source code repository. Each ETL job may have unique AWS service, orchestration, and configuration requirements. Therefore, a dedicated source code repository will help you more flexibly build, deploy, and maintain ETL jobs.

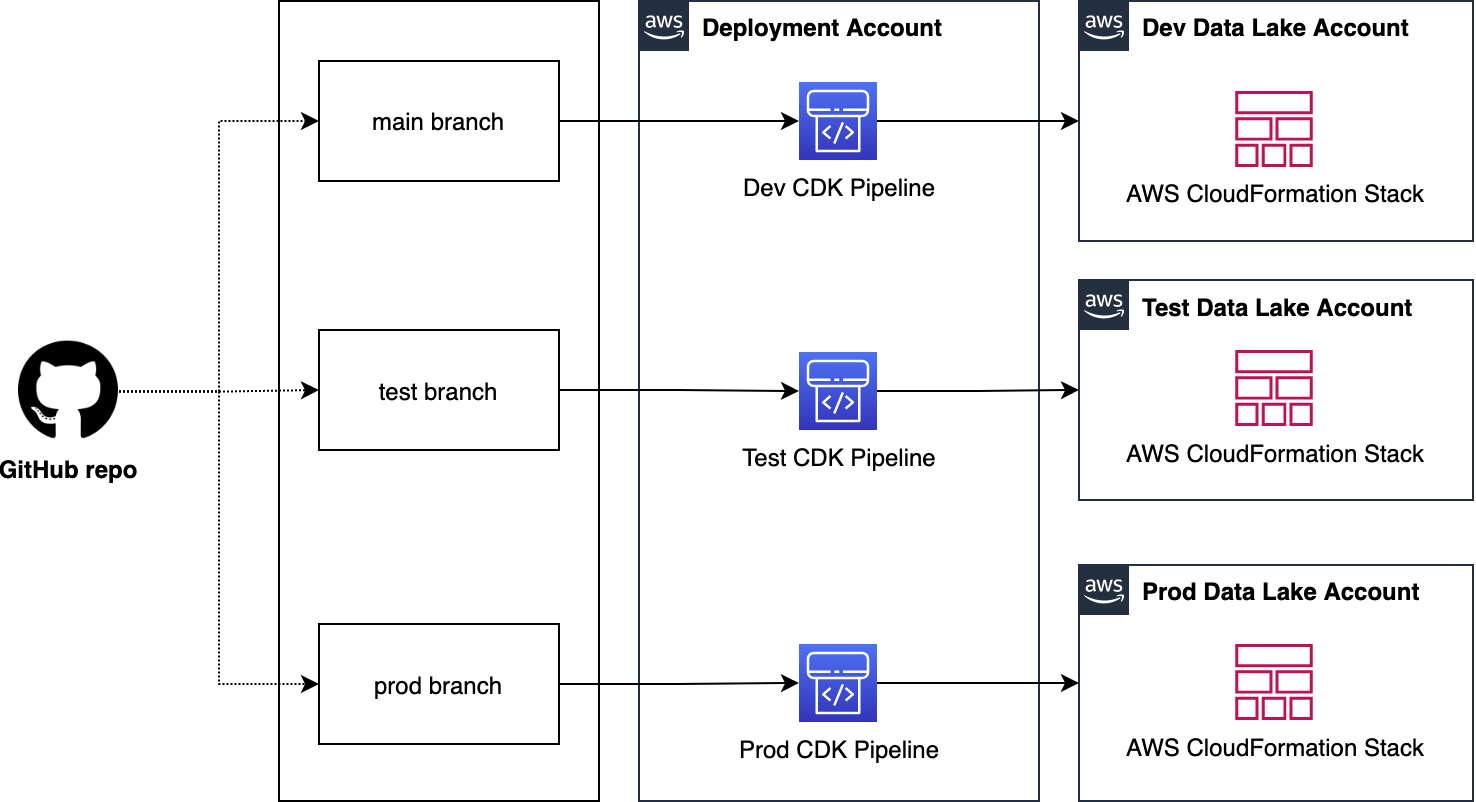

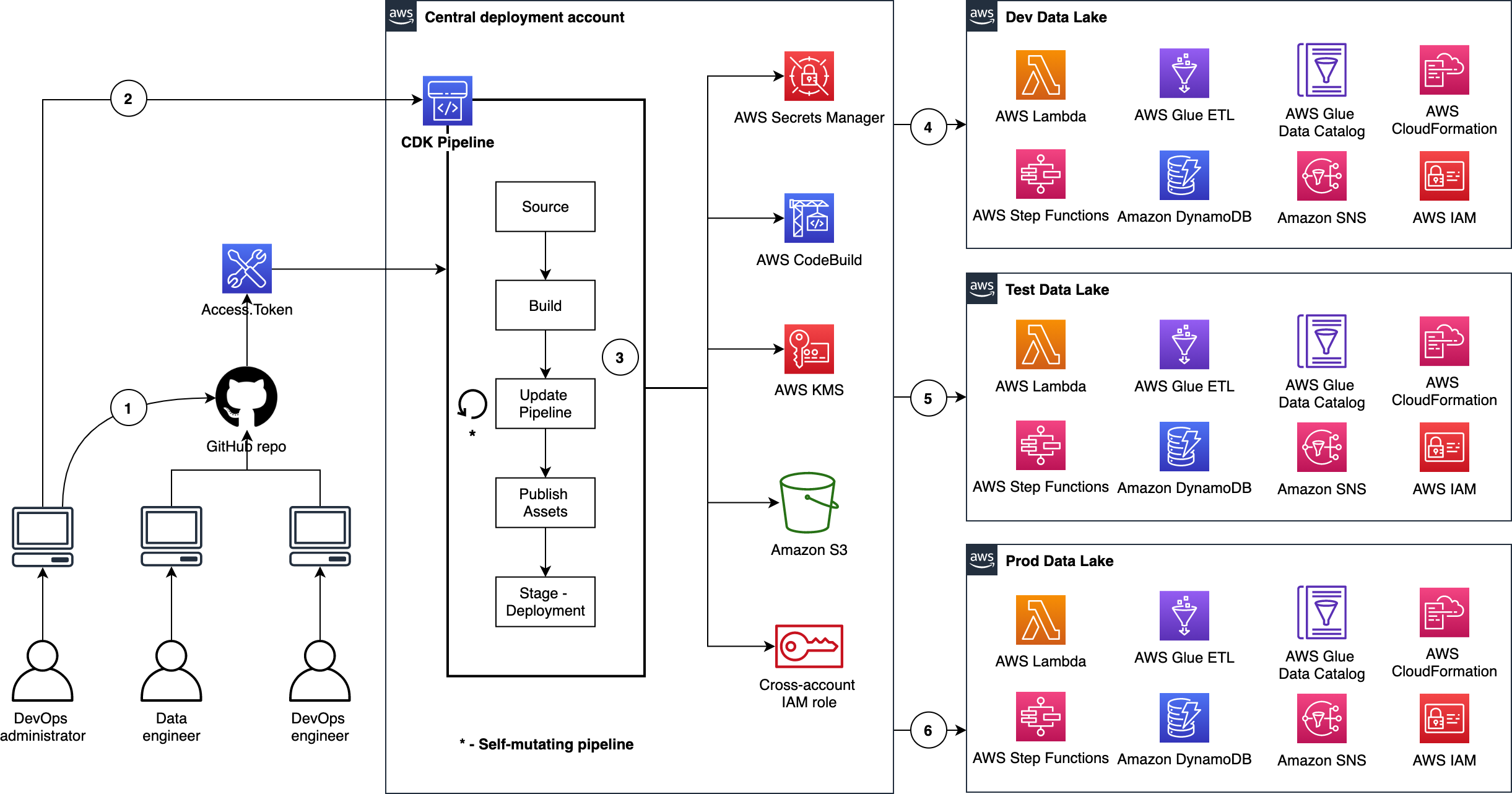

We organize our source code repo into three branches: dev (main), test, and prod. In the deployment account, we manage three separate CDK Pipelines, and each pipeline is sourced from a dedicated branch. We choose a branch-based software development method in order to demonstrate the strategy in more complex scenarios where integration testing and validation layers require human intervention. Because these steps are manual, they may not be followed immediately by a corresponding release or deployment. The branch-based method lets changes propagate through environments without blocking independent development priorities. We accomplish this by isolating resources across environments in the central deployment account, allowing for the independent management of each environment, and avoiding cross-contamination during each pipeline’s self-mutating updates. The following diagram illustrates this method.

Note: This centralized deployment strategy can be adopted for trunk-based software development with minimal solution modification.

Deploying data lake ETL jobs

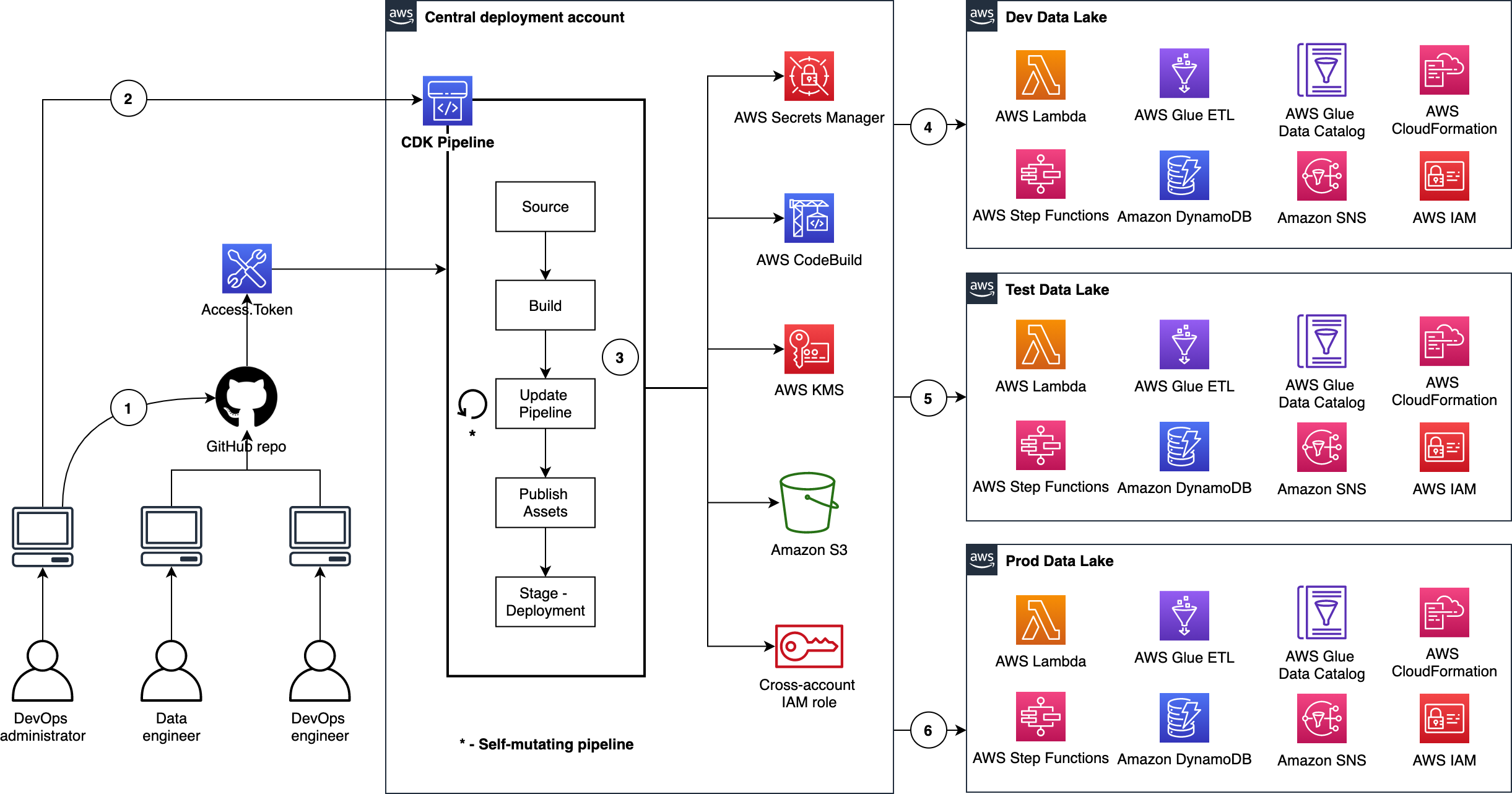

The following figure illustrates how we utilize CDK Pipelines to deploy data lake infrastructure and ETL jobs from a central deployment account. This model follows standard nomenclature from the AWS CDK. Each repository represents a cloud infrastructure code definition. This includes the pipelines construct definition. Pipelines have one or more actions, such as cloning the source code (source action) and synthesizing the stack into an AWS CloudFormation template (synth action). Each pipeline has one or more stages, such as testing and deploying. In an AWS CDK app context, the pipelines construct is a stack like any other stack. Therefore, when the AWS CDK app is deployed, a new pipeline is created in AWS CodePipeline.

This provides incredible flexibility regarding DevOps. In other words, as a developer with an understanding of AWS CDK APIs, you can harness the power and scalability of AWS services such as CodePipeline, AWS CodeBuild, and AWS CloudFormation.

Here are a few important details:

- The DevOps administrator checks in the code to the repository.

- The DevOps administrator (with elevated access) facilitates a one-time manual deployment on a target environment. Elevated access includes administrative privileges on the central deployment account and target AWS environments.

- CodePipeline periodically listens to commit events on the source code repositories. This is the self-mutating nature of CodePipeline. It’s configured to work with and can update itself according to the provided definition.

- Code changes made to the main repo branch are automatically deployed to the data lake dev environment.

- Code changes to the repo test branch are automatically deployed to the test environment.

- Code changes to the repo prod branch are automatically deployed to the prod environment.
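The branch-to-environment rules in the last three items amount to a small lookup table. The sketch below shows one way such a mapping could be expressed in Python. It is not taken from the starter kits: `Target`, `TARGETS`, and `target_for_branch` are hypothetical names, and the account IDs and Region are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    environment: str
    account_id: str  # placeholder value, not a real account
    region: str


# Branch names come from the post; account IDs and Region are placeholders.
TARGETS = {
    "main": Target("dev", "111111111111", "us-east-1"),
    "test": Target("test", "222222222222", "us-east-1"),
    "prod": Target("prod", "333333333333", "us-east-1"),
}


def target_for_branch(branch):
    """Return the deployment target for a branch, or raise ValueError."""
    try:
        return TARGETS[branch]
    except KeyError:
        raise ValueError(f"no deployment target configured for branch {branch!r}") from None
```

Failing loudly on an unknown branch is a deliberate choice here: a feature branch should never be deployed to an environment by accident.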

CDK Pipelines starter kits for data lakes

Want to get going quickly with CDK Pipelines for your data lake? Start by cloning our two GitHub repositories. Here is a summary:

CDK Pipelines for Data Lake Infrastructure Deployment

This repository contains the following reusable resources:

CDK Application

CDK Pipelines stack

CDK Pipelines deploy stage

Amazon VPC stack

Amazon S3 stack

It also contains the following automation scripts:

AWS environments configuration

Deployment account bootstrapping

Target account bootstrapping

Account secrets configuration (e.g., GitHub access tokens)

CDK Pipelines for Data Lake ETL Deployment

This repository contains the following reusable resources:

CDK Application

CDK Pipelines stack

CDK Pipelines deploy stage

Amazon DynamoDB stack

AWS Glue stack

AWS Step Functions stack

It also contains the following:

AWS Lambda scripts

AWS Glue scripts

AWS Step Functions State machine script

Advantages

This section summarizes some of the advantages offered by this solution.

Scalable and centralized deployment model

We utilize a scalable and centralized deployment model to deliver end-to-end automation. This allows DevOps and data engineers to use the single responsibility principle while maintaining precise control over the deployment strategy and code quality. The model can readily be expanded to more accounts, and the pipelines are responsive to custom controls within each environment, such as a production approval layer.

Configuration-driven deployment

Configuration in the source code and in AWS Secrets Manager allows deployments to utilize targeted values that are declared globally in a single location. This provides consistent management of global configurations and dependencies such as resource names, AWS account IDs, Regions, and VPC CIDR ranges. Similarly, the CDK Pipelines export outputs from CloudFormation stacks for later consumption via other resources.

Repeatable and consistent deployment of new ETL jobs

Continuous integration and continuous delivery (CI/CD) pipelines allow teams to deploy to production more frequently. Code changes can be safely and securely propagated through environments and released for deployment. This allows rapid iteration on data processing jobs, and these jobs can be changed in isolation from pipeline changes, resulting in reliable workflows.

Cleaning up

You may delete the resources provisioned by utilizing the starter kits. You can do this by running the cdk destroy command using AWS CDK Toolkit. For detailed instructions, refer to the Clean up sections in the starter kit README files.

Conclusion

In this post, we showed how to utilize CDK Pipelines to deploy infrastructure and data processing ETL jobs of your data lake in dev, test, and production AWS environments. We provided two GitHub repositories for you to test and realize the full benefits of this solution first hand. We encourage you to fork the repositories, bring your ETL scripts, bootstrap your accounts, configure account parameters, and continuously deliver your data lake ETL jobs.

Let’s stay in touch via the GitHub repositories: CDK Pipelines for Data Lake Infrastructure Deployment and CDK Pipelines for Data Lake ETL Deployment.

Secure and analyse your Terraform code using AWS CodeCommit, AWS CodePipeline, AWS CodeBuild and tfsec

=======================

Introduction

More and more customers are using Infrastructure-as-Code (IaC) to design and implement their infrastructure on AWS. This is why it is essential to have pipelines with Continuous Integration/Continuous Deployment (CI/CD) for infrastructure deployment. HashiCorp Terraform is one of the popular IaC tools for customers on AWS.

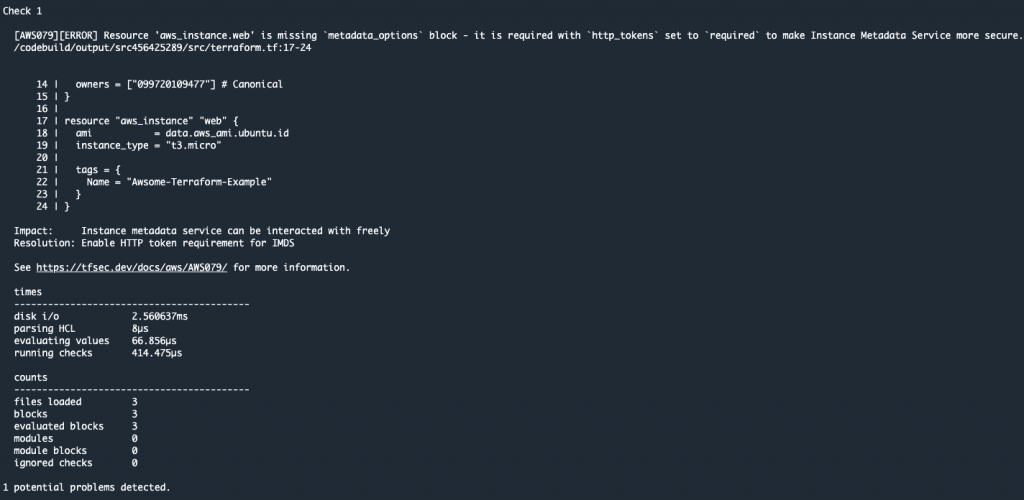

In this blog, I will guide you through building a CI/CD pipeline on AWS to analyze and identify possible configuration issues in your Terraform code templates. This will help mitigate security risks within our infrastructure deployment pipelines as part of our CI/CD. To do this, we utilize AWS tools and the open source tfsec tool, a static analysis security scanner for your Terraform code that includes more than 90 preconfigured checks and the ability to add custom checks.

Solutions Overview

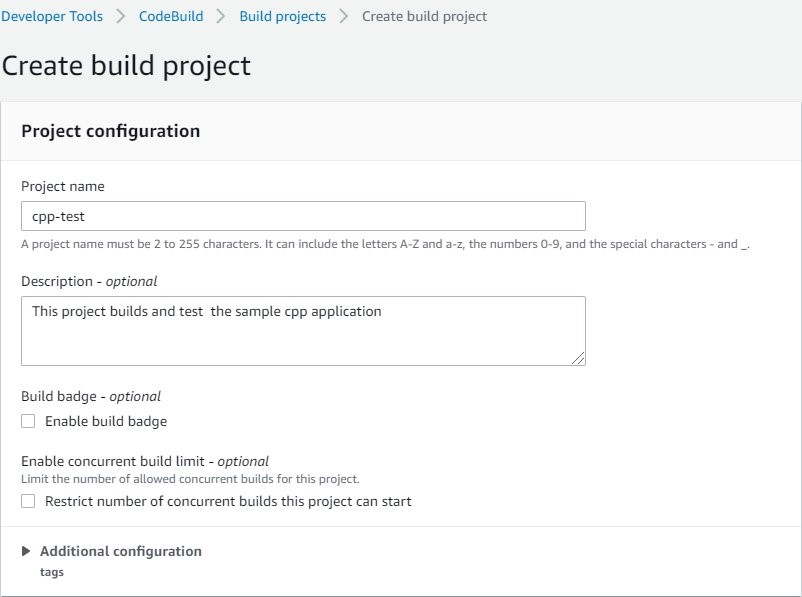

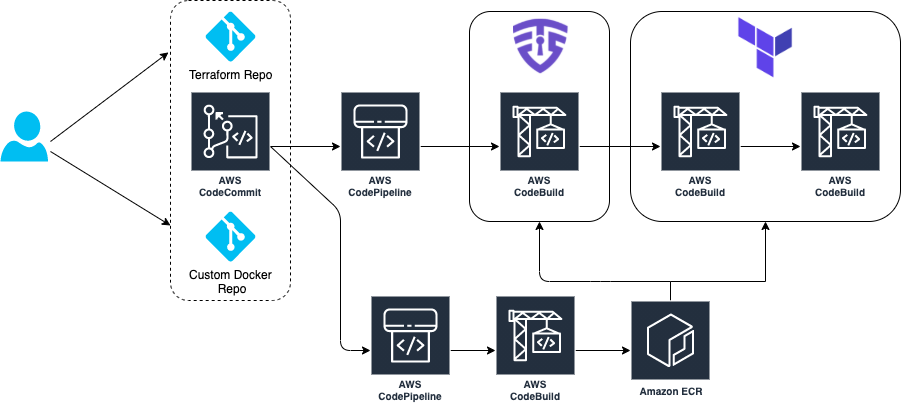

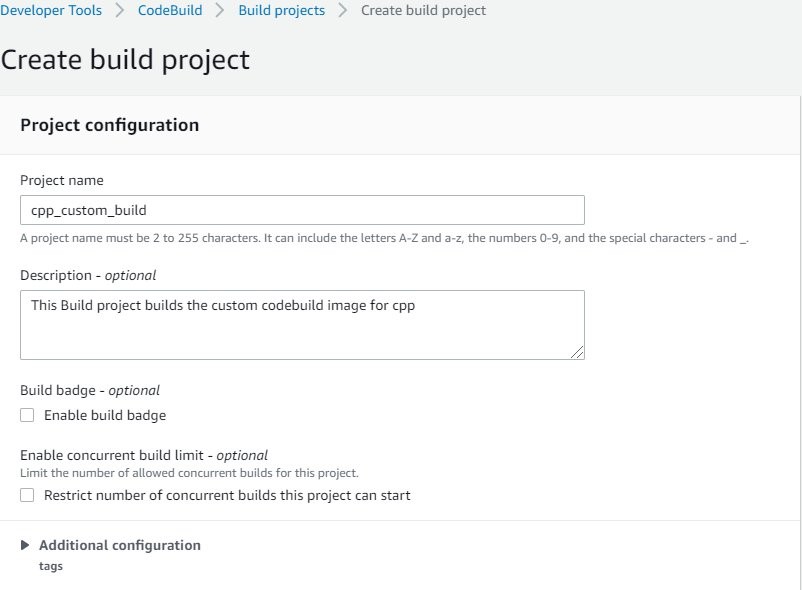

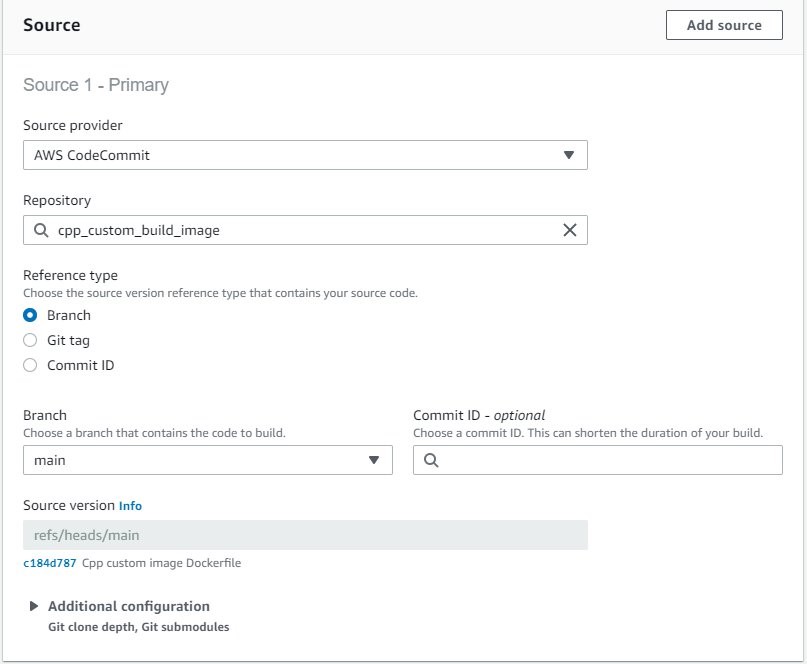

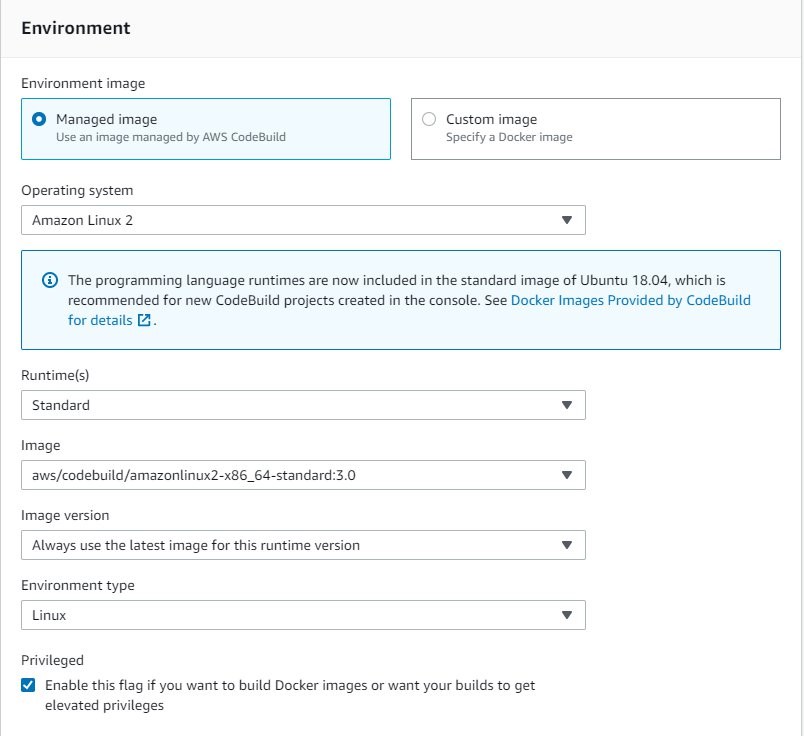

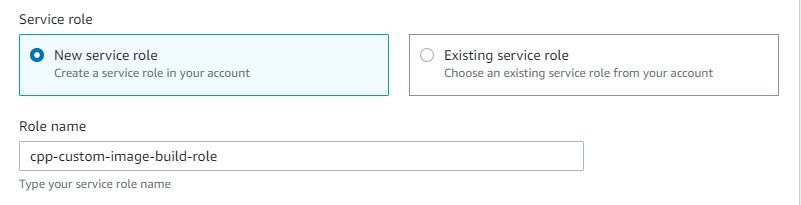

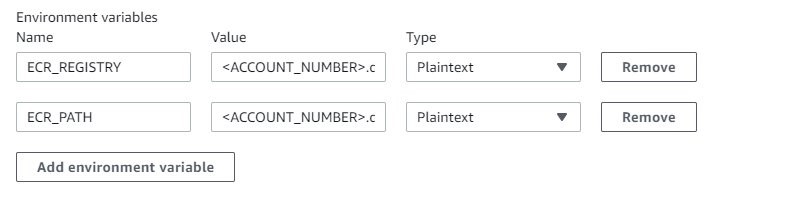

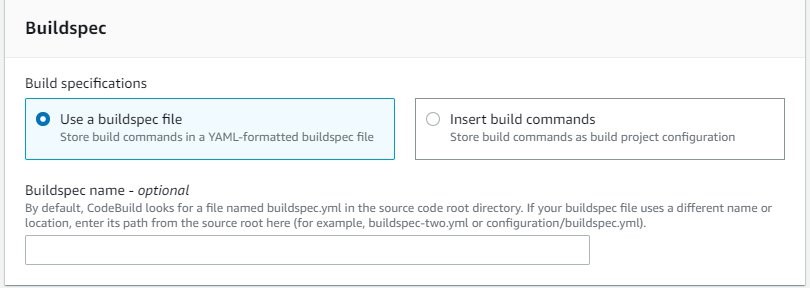

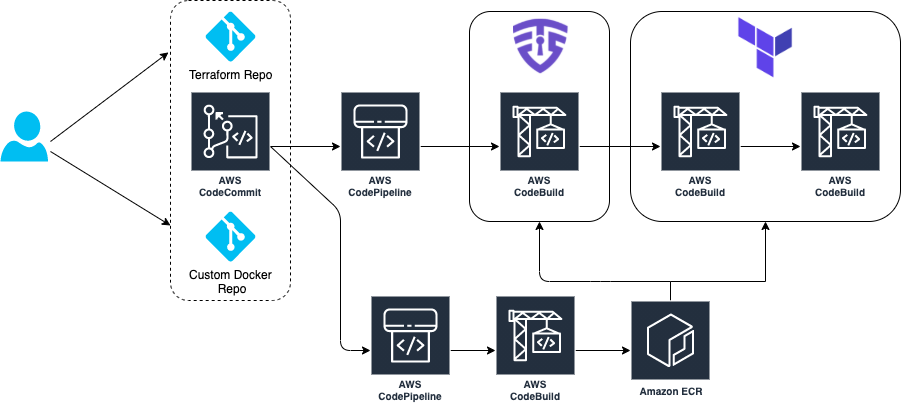

The architecture goes through a CI/CD pipeline created on AWS using AWS CodeCommit, AWS CodePipeline, AWS CodeBuild, and Amazon ECR.

Our demo has two separate pipelines:

- CI/CD Pipeline to build and push our custom Docker image to Amazon ECR

- CI/CD Pipeline where our tfsec analysis is executed and Terraform provisions infrastructure

The tfsec configuration and Terraform go through a buildspec specification file defined within an AWS CodeBuild action. This action calculates how many potential security risks we currently have within our Terraform templates, and the result is displayed in our manual acceptance process for verification.
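To make the counting step concrete, here is a minimal sketch of how findings could be tallied from a tfsec report. It is not the buildspec used in the sample repository. It assumes the report was produced with tfsec's JSON output format and that it contains a top-level `results` list whose entries carry a `severity` field; check the output of your tfsec version before relying on that shape. The function names are hypothetical.

```python
import json
from collections import Counter


def count_findings(tfsec_json):
    """Count tfsec findings per severity from a JSON report string."""
    report = json.loads(tfsec_json)
    # Treat a missing or null list as "no findings".
    results = report.get("results") or []
    return Counter(item.get("severity", "UNKNOWN") for item in results)


def summary_line(counts):
    """Format the counts as a one-line message for a reviewer."""
    total = sum(counts.values())
    details = ", ".join(f"{severity}: {n}" for severity, n in sorted(counts.items()))
    return f"tfsec found {total} potential issue(s)" + (f" ({details})" if details else "")
```

A line like this could be attached to the approval request so that the reviewer sees the totals before opening the full report.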

Provisioning the infrastructure

We have created an AWS Cloud Development Kit (AWS CDK) app, written in Python and hosted in a Git repository. With it, you can deploy the two main pipelines needed to manage this scenario. For a list of the deployment prerequisites, see the README.md file.

Clone the repo to your local machine. Then, bootstrap and deploy the CDK stack:

git clone https://github.com/aws-samples/aws-cdk-tfsec

cd aws-cdk-tfsec

pip install -r requirements.txt

cdk bootstrap aws://account_id/eu-west-1

cdk deploy --all

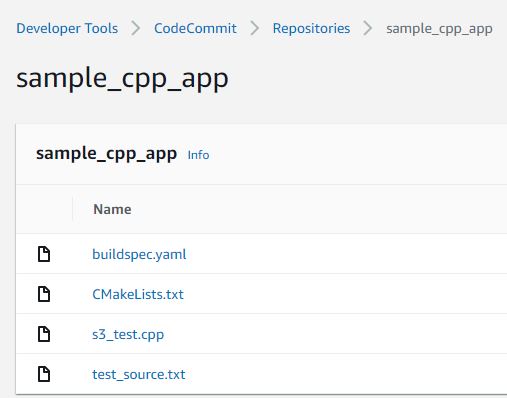

The infrastructure creation takes around 5-10 minutes due to the creation of the AWS CodePipeline pipelines and the referenced repositories. Once the CDK has deployed the infrastructure, clone the two new AWS CodeCommit repos that have been created and push the example code: first the one for the custom Docker image, and later the one for your Terraform code, like this:

git clone https://git-codecommit.eu-west-1.amazonaws.com/v1/repos/awsome-terraform-example-container

cd awsome-terraform-example-container

git checkout -b main

cp repos/docker_image/* .

git add .

git commit -am "First commit"

git push origin main

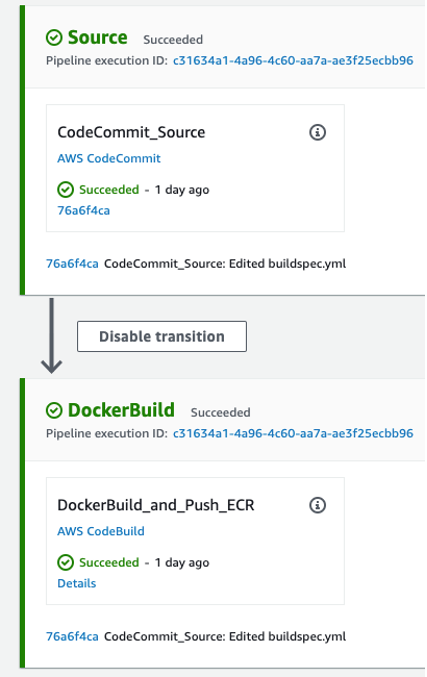

Once the Docker image is built and pushed to Amazon ECR, proceed with the Terraform repo. Check the pipeline process on the AWS CodePipeline console.

git clone https://git-codecommit.eu-west-1.amazonaws.com/v1/repos/awsome-terraform-example

cd awsome-terraform-example

git checkout -b main

cp -aR repos/terraform_code/* .

git add .

git commit -am "First commit"

git push origin main

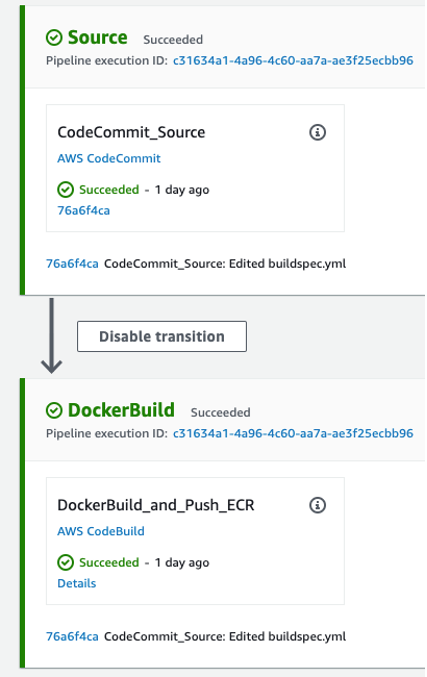

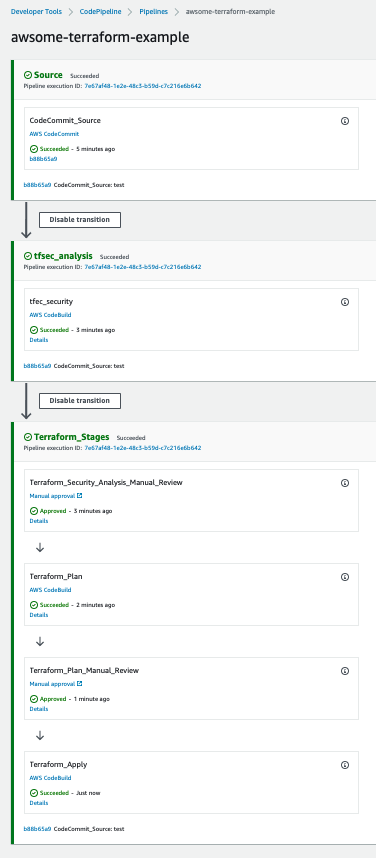

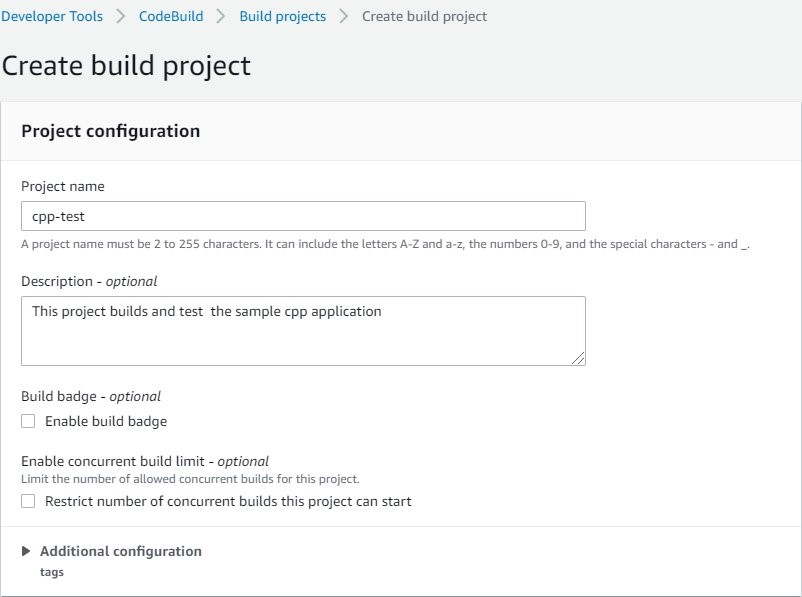

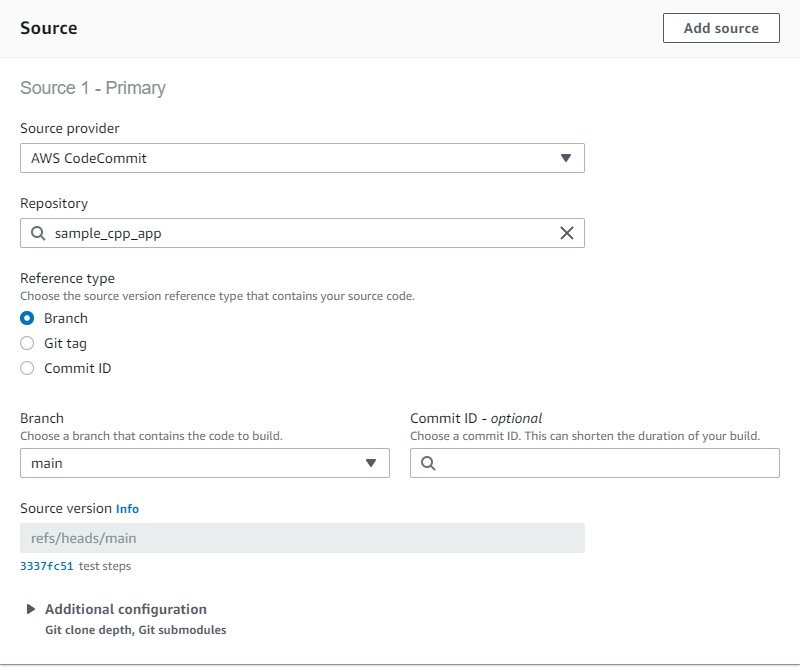

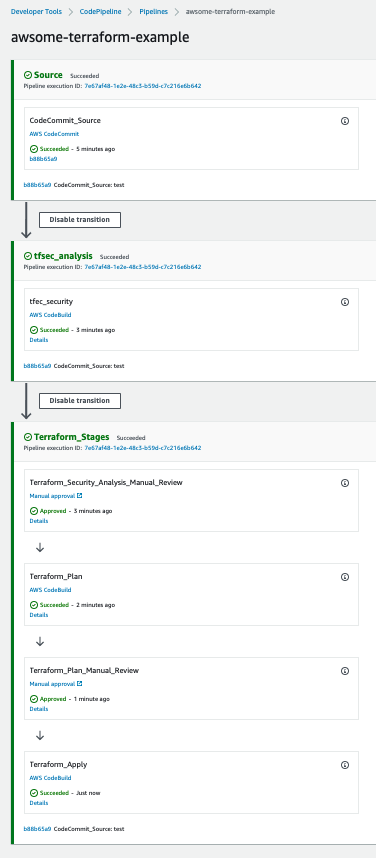

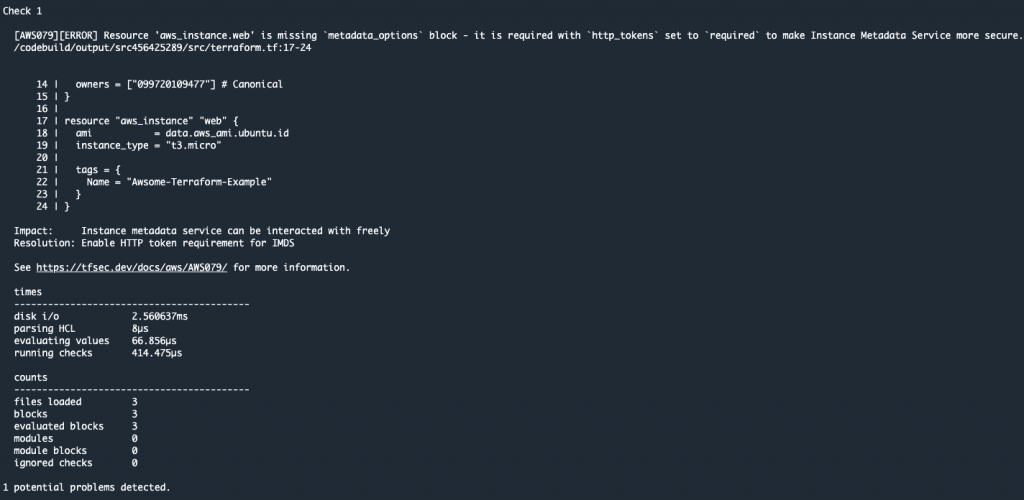

The Terraform provisioning pipeline in AWS CodePipeline looks like this:

The pipeline has three main stages:

Source – AWS CodeCommit stores the Terraform infrastructure repository, and every time we push code to the main branch, the AWS CodePipeline pipeline is triggered.

tfsec analysis – AWS CodeBuild looks for a buildspec to execute the tfsec actions configured on the same buildspec.

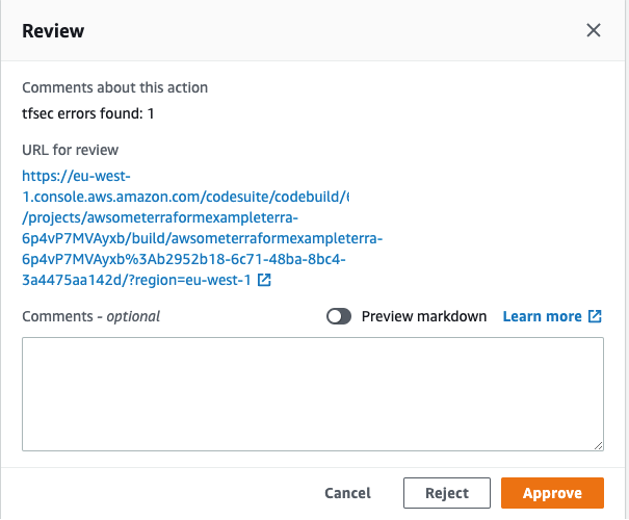

The output shows the potential security issues detected by tfsec for our Terraform code and links to the corresponding checks already defined in tfsec. See the tfsec documentation for the security checks it defines. After tfsec execution, a manual approval action is set up to decide whether we should proceed to the next steps or reject and stop the AWS CodePipeline execution.

The URL for review links to our tfsec output console.

Terraform plan and Terraform apply – This stage plans and applies our infrastructure changes. After the Terraform plan command and before the Terraform apply, a manual action is set up to decide if we can apply the changes.

After going through all of the stages, our Terraform infrastructure should be created.

Clean up

After completing your demo, feel free to delete your stack using the CDK CLI:

cdk destroy --all

Conclusion

At AWS, security is our top priority. This post demonstrates how to build a CI/CD pipeline by using AWS Services to automate and secure your infrastructure as code via Terraform and tfsec.

Learn more about tfsec through the official documentation: https://tfsec.dev/

Blue/Green deployment with AWS Developer tools on Amazon EC2 using Amazon EFS to host application source code

=======================

Many organizations building modern applications require a shared and persistent storage layer for hosting and deploying data-intensive enterprise applications, such as content management systems, media and entertainment, distributed applications like machine learning training, etc. These applications demand a centralized file share that scales to petabytes without disrupting running applications and remains concurrently accessible from potentially thousands of Amazon EC2 instances.

Simultaneously, customers want to automate the end-to-end deployment workflow and leverage continuous methodologies utilizing AWS developer tools services for performing a blue/green deployment with zero downtime. A blue/green deployment is a deployment strategy wherein you create two separate, but identical environments. One environment (blue) is running the current application version, and one environment (green) is running the new application version. The blue/green deployment strategy increases application availability by generally isolating the two application environments and ensuring that spinning up a parallel green environment won’t affect the blue environment resources. This isolation reduces deployment risk by simplifying the rollback process if a deployment fails.

Amazon Elastic File System (Amazon EFS) provides a simple, scalable, and fully-managed elastic NFS file system for use with AWS Cloud services and on-premises resources. It scales on demand, thereby eliminating the need to provision and manage capacity in order to accommodate growth. Utilize Amazon EFS to create a shared directory that stores and serves code and content for numerous applications. Your application can treat a mounted Amazon EFS volume like local storage. This means you don’t have to deploy your application code every time the environment scales up to multiple instances to distribute load.

In this blog post, I will guide you through an automated process to deploy a sample web application on Amazon EC2 instances utilizing Amazon EFS mount to host application source code, and utilizing a blue/green deployment with AWS code suite services in order to deploy the application source code with no downtime.

How this solution works

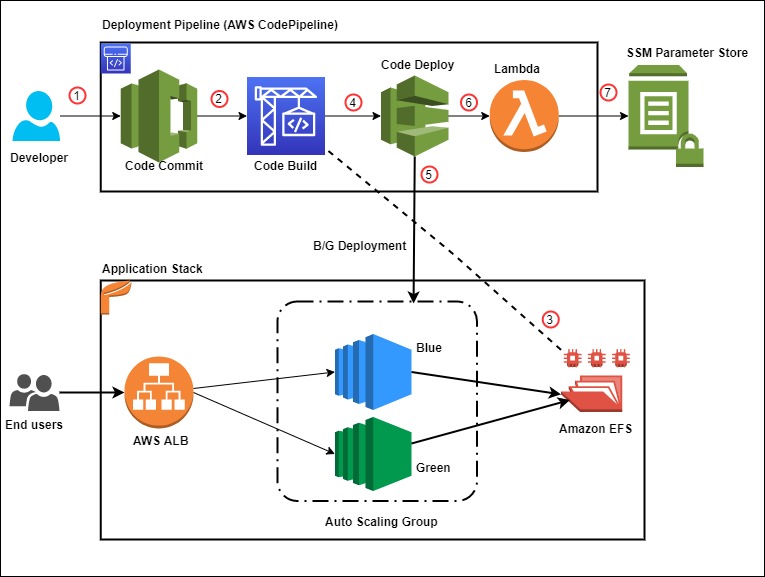

This blog post includes a CloudFormation template to provision all of the resources needed for this solution. The CloudFormation stack deploys a Hello World application on Amazon Linux 2 EC2 Instances running behind an Application Load Balancer and utilizes Amazon EFS mount point to store the application content. The AWS CodePipeline project utilizes AWS CodeCommit as the version control, AWS CodeBuild for installing dependencies and creating artifacts, and AWS CodeDeploy to conduct deployment on EC2 instances running in an Amazon EC2 Auto Scaling group.

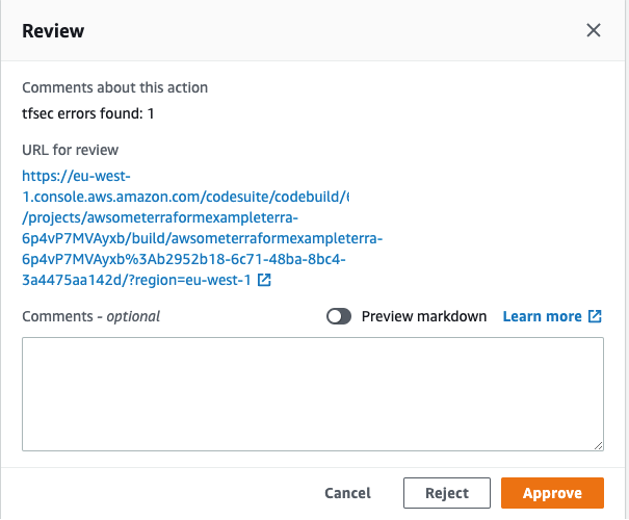

Figure 1 below illustrates our solution architecture.

Figure 1: Sample solution architecture

The event flow in Figure 1 is as follows:

- A developer commits code changes from their local repo to the CodeCommit repository. The commit triggers CodePipeline execution.

- CodeBuild execution begins to compile source code, install dependencies, run custom commands, and create deployment artifact as per the instructions in the Build specification reference file.

- During the build phase, CodeBuild copies the source-code artifact to Amazon EFS file system and maintains two different directories for current (green) and new (blue) deployments.

- After successfully completing the build step, CodeDeploy deployment kicks in to conduct a Blue/Green deployment to a new Auto Scaling Group.

- During the deployment phase, CodeDeploy mounts the EFS file system on new EC2 instances as per the CodeDeploy AppSpec file reference and conducts other deployment activities.

- After successful deployment, a Lambda function triggers in order to store a deployment environment parameter in Systems Manager parameter store. The parameter stores the current EFS mount name that the application utilizes.

- The AWS Lambda function updates the parameter value during every successful deployment with the current EFS location.

Prerequisites

For this walkthrough, the following are required:

An AWS account

Access to an AWS account with administrator or PowerUser (or equivalent) AWS Identity and Access Management(IAM) role policies attached

Git Command Line installed and configured in your local environment

Deploy the solution

Once you’ve assembled the prerequisites, download or clone the GitHub repo and store the files on your local machine. Utilize the commands below to clone the repo:

mkdir -p ~/blue-green-sample/

cd ~/blue-green-sample/

git clone https://github.com/aws-samples/blue-green-deployment-pipeline-for-efs

Once completed, utilize the following steps to deploy the solution in your AWS account:

- Create a private Amazon Simple Storage Service (Amazon S3) bucket by using this documentation

Figure 2: AWS S3 console view when creating a bucket

- Upload the cloned or downloaded GitHub repo files to the root of the S3 bucket. The S3 bucket object structure should look similar to Figure 3:

Figure 3: AWS S3 bucket object structure

- Go to the S3 bucket and select the template name solution-stack-template.yml, and then copy the object URL.

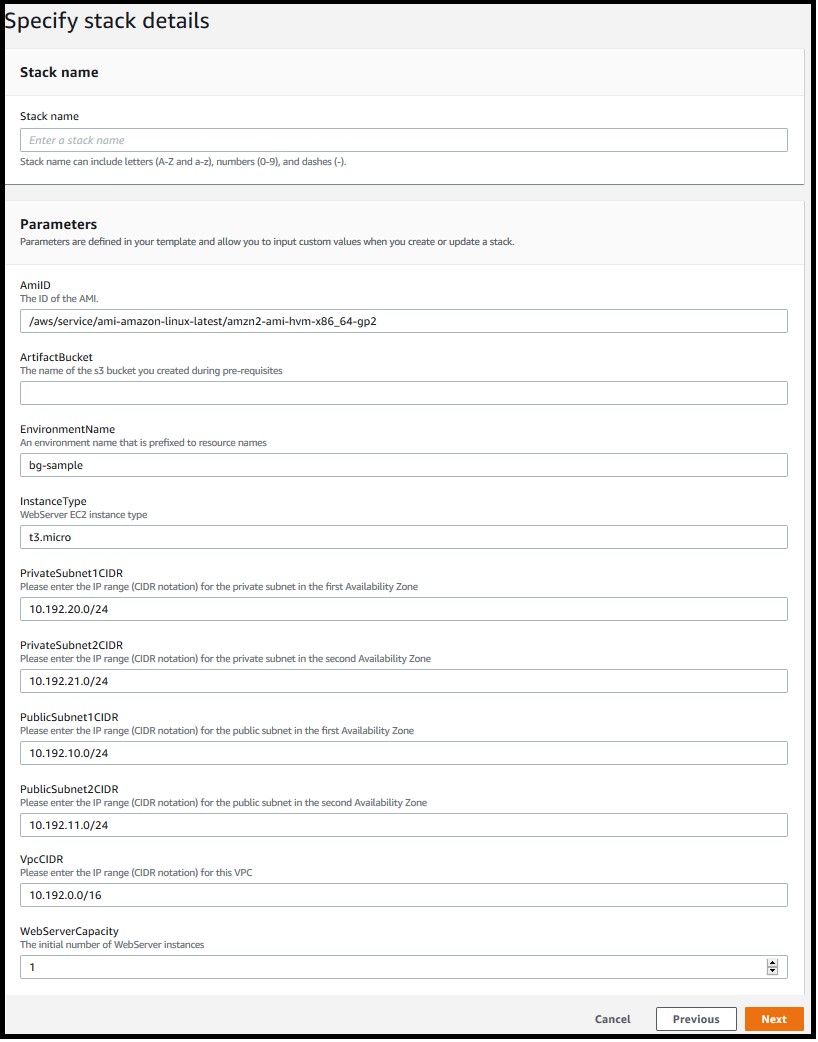

- Open the CloudFormation console. Choose the appropriate AWS Region, and then choose Create Stack. Select With new resources.

- Select Amazon S3 URL as the template source, paste the object URL that you copied in Step 3, and then choose Next.

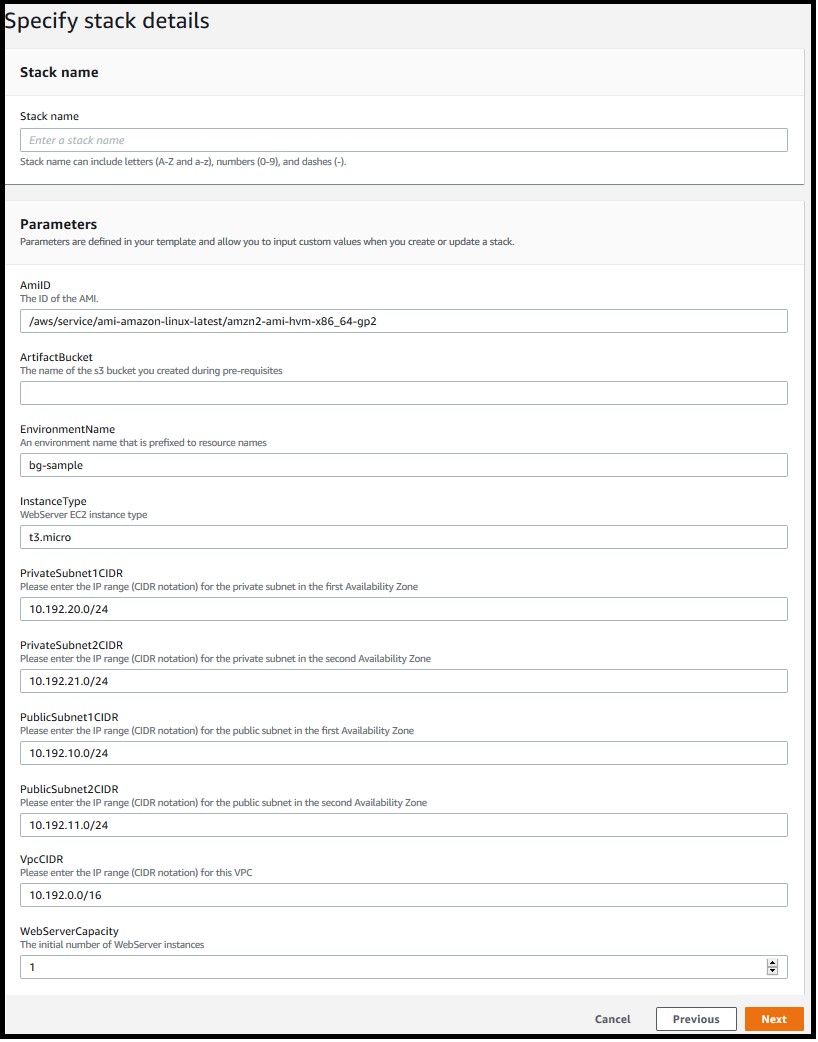

- On the Specify stack details page, enter a name for the stack and provide the following input parameter. Modify the default values for other parameters in order to customize the solution for your environment. You can leave everything as default for this walkthrough.

ArtifactBucket– The name of the S3 bucket that you created in the first step of the solution deployment. This is a mandatory parameter with no default value.

Figure 4: Defining the stack name and input parameters for the CloudFormation stack

- Choose Next.

- On the Options page, keep the default values and then choose Next.

- On the Review page, confirm the details, acknowledge that CloudFormation might create IAM resources with custom names, and then choose Create Stack.

- Once the stack creation is marked as CREATE_COMPLETE, the following resources are created:

A virtual private cloud (VPC) configured with two public and two private subnets.

NAT Gateway, an EIP address, and an Internet Gateway.

Route tables for private and public subnets.

Auto Scaling Group with a single EC2 Instance.

Application Load Balancer and a Target Group.

Three security groups—one each for ALB, web servers, and EFS file system.

Amazon EFS file system with a mount target for each Availability Zone.

CodePipeline project with CodeCommit repository, CodeBuild, and CodeDeploy resources.

SSM parameter to store the environment current deployment status.

Lambda function to update the SSM parameter for every successful pipeline execution.

Required IAM Roles and policies.

Note: It may take anywhere from 10-20 minutes to complete the stack creation.

Test the solution

Now that the solution stack is deployed, follow the steps below to test the solution:

- Validate CodePipeline execution status

After successfully creating the CloudFormation stack, a CodePipeline execution automatically triggers to deploy the default application code version from the CodeCommit repository.

In the AWS console, choose Services and then CloudFormation. Select your stack name. On the stack Outputs tab, look for the CodePipelineURL key and click on the URL.
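If you prefer to read the stack outputs from a script rather than the console, the lookup can be sketched as follows. This is a minimal sketch, assuming boto3 is installed and credentials are configured; the helper name `stack_outputs` and the stack name in the comment are hypothetical, while `CodePipelineURL`, `WebsiteURL`, and `CodeCommitURL` are the output keys mentioned in this post.

```python
from typing import Any, Dict


def stack_outputs(cfn_client: Any, stack_name: str) -> Dict[str, str]:
    """Return the outputs of a CloudFormation stack as a {key: value} dict.

    cfn_client is expected to behave like boto3.client("cloudformation").
    """
    response = cfn_client.describe_stacks(StackName=stack_name)
    outputs = response["Stacks"][0].get("Outputs", [])
    return {item["OutputKey"]: item["OutputValue"] for item in outputs}


# Example usage (assumption: the stack is named "blue-green-sample"):
#   import boto3
#   outputs = stack_outputs(boto3.client("cloudformation"), "blue-green-sample")
#   print(outputs.get("CodePipelineURL"))
```

The client is passed in as an argument so that the helper can be exercised with a stub before pointing it at a real account.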

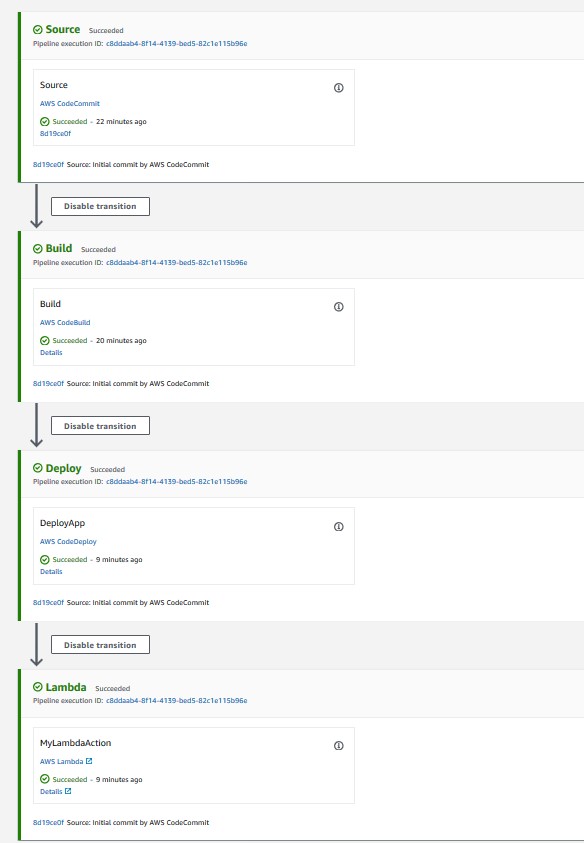

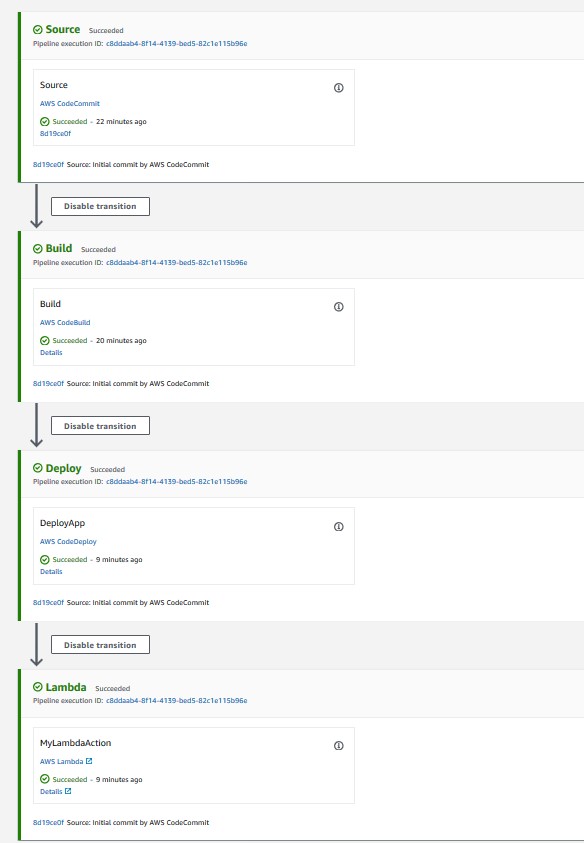

Validate that all steps have successfully completed. For a successful CodePipeline execution, you should see something like Figure 5. Wait for the execution to complete in case it is still in progress.

Figure 5: CodePipeline console showing execution status of all stages

- Validate the Website URL

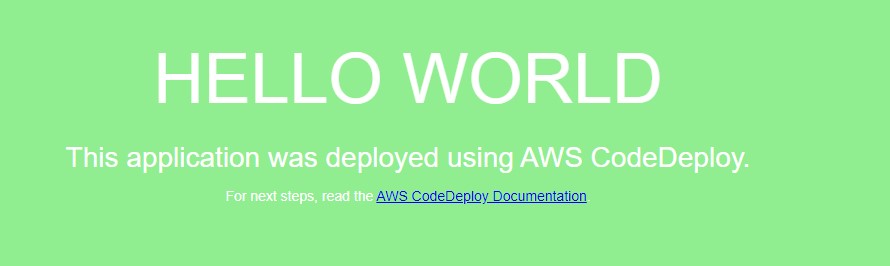

After the pipeline execution completes, open the website URL in a browser to check that it's working.

On the stack Outputs tab, look for the WebsiteURL key and click on the URL.

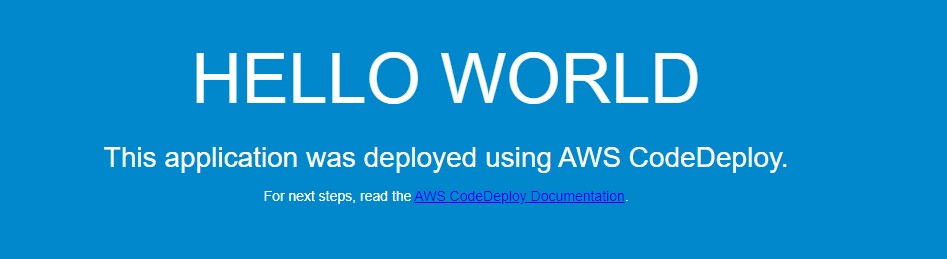

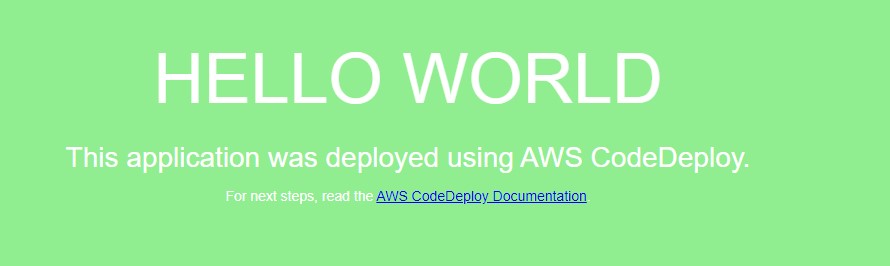

For a successful deployment, it should open a default page similar to Figure 6.

Figure 6: Sample “Hello World” application (Green deployment)

- Validate the EFS share

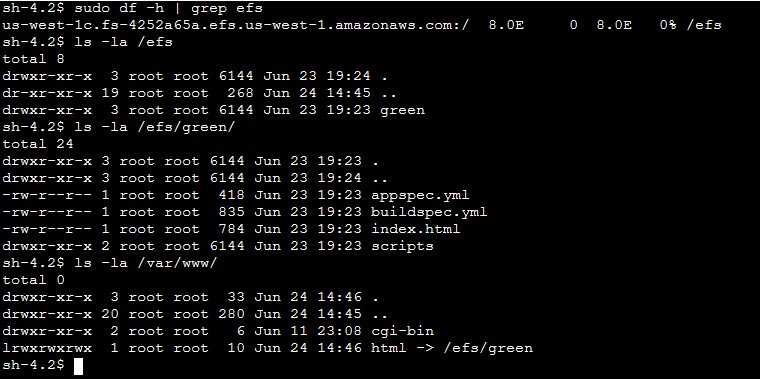

After the website deploys successfully, connect to the application server and validate the EFS mount point and the application source code directory.

Open the Amazon EC2 console, and then choose Instances in the left navigation pane.

Select the instance named bg-sample and choose

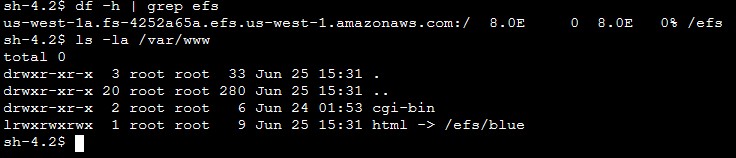

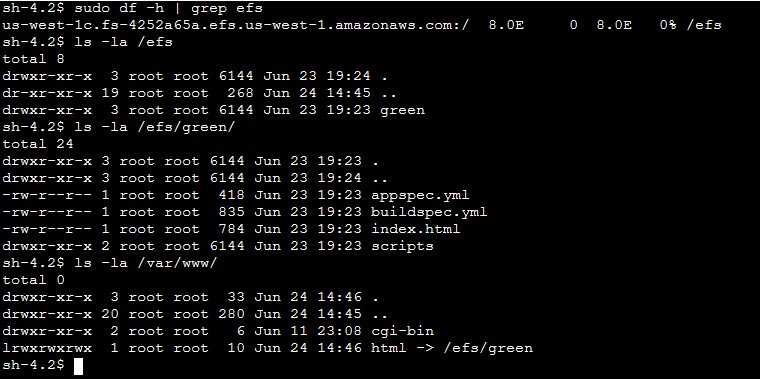

For Connection method, choose Session Manager, and then choose Connect.

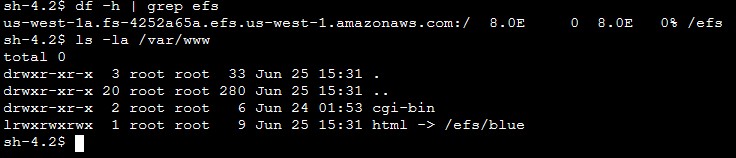

After the connection is made, run the following bash commands to validate the EFS mount and the deployed content. Figure 7 shows a sample output from running the bash commands.

sudo df -h | grep efs

ls -la /efs/green

ls -la /var/www/

Figure 7: Sample output from the bash command (Green deployment)
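The same mount check can be scripted. The following is a minimal sketch, assuming the EFS file system is mounted over NFS and therefore appears with an `nfs` or `nfs4` filesystem type in `/proc/mounts`; the function name `nfs_mount_points` is hypothetical.

```python
from typing import List


def nfs_mount_points(mounts_text: str) -> List[str]:
    """Return the mount points whose filesystem type is nfs or nfs4.

    mounts_text is text in /proc/mounts format, where each line holds:
    device, mount point, filesystem type, options, dump, pass.
    """
    points = []
    for line in mounts_text.splitlines():
        fields = line.split()
        if len(fields) >= 3 and fields[2] in ("nfs", "nfs4"):
            points.append(fields[1])
    return points


# Example usage on the instance:
#   with open("/proc/mounts") as handle:
#       print(nfs_mount_points(handle.read()))
```

An empty list would suggest that the EFS mount step did not run on that instance.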

- Deploy a new revision of the application code

After verifying the application status and the deployed code on the EFS share, commit some changes to the CodeCommit repository in order to trigger a new deployment.

On the stack Outputs tab, look for the CodeCommitURL key and click on the corresponding URL.

Click on the file html.

Click on

Uncomment line 9 and comment line 10, so that the new lines look like those below after the changes:

background-color: #0188cc;

#background-color: #90ee90;

Add Author name, Email address, and then choose Commit changes.

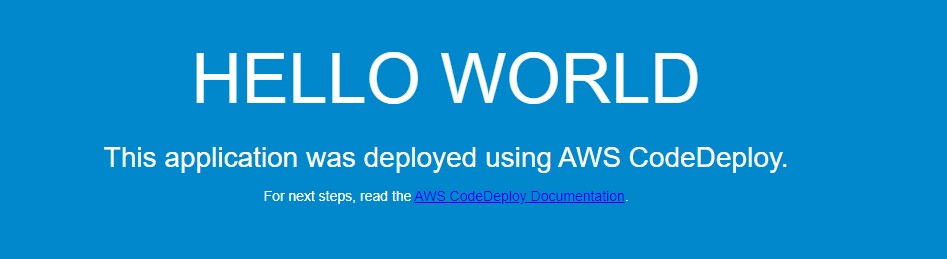

After you commit the code, the CodePipeline triggers and executes Source, Build, Deploy, and Lambda stages. Once the execution completes, hit the Website URL and you should see a new page like Figure 8.

Figure 8: New Application version (Blue deployment)

On the EFS side, the application directory on the new EC2 instance now points to /efs/blue as shown in Figure 9.

Figure 9: Sample output from the bash command (Blue deployment)

Solution review

Let’s review the pipeline stages details and what happens during the Blue/Green deployment:

1) Build stage

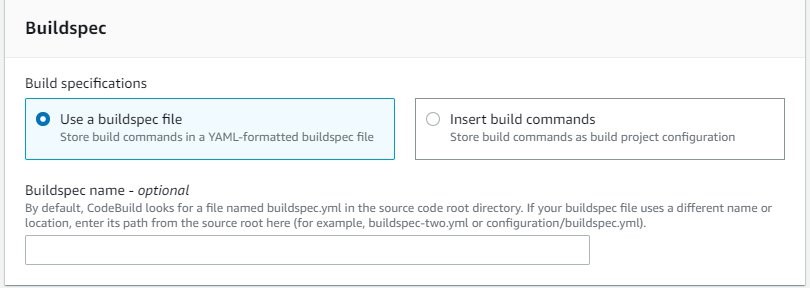

For this sample application, the CodeBuild project is configured to mount the EFS file system and utilize the buildspec.yml file present in the source code root directory to run the build. Following is the sample build spec utilized in this solution:

version: 0.2

phases:
  install:
    runtime-versions:
      php: latest
  build:
    commands:
      - current_deployment=$(aws ssm get-parameter --name $SSM_PARAMETER --query "Parameter.Value" --region $REGION --output text)
      - echo $current_deployment
      - echo $SSM_PARAMETER
      - echo $EFS_ID $REGION
      - if [[ "$current_deployment" == "null" ]]; then echo "this is the first GREEN deployment for this project" ; dir='/efs/green' ; fi
      - if [[ "$current_deployment" == "green" ]]; then dir='/efs/blue' ; else dir='/efs/green' ; fi
      - if [ ! -d $dir ]; then mkdir $dir >/dev/null 2>&1 ; fi
      - echo $dir
      - rsync -ar $CODEBUILD_SRC_DIR/ $dir/

artifacts:
  files:
    - '**/*'

During the build job, the following activities occur:

Installs latest php runtime version.

Reads the SSM parameter value in order to know the current deployment and decide which directory to utilize. The SSM parameter value flips between green and blue for every successful deployment.

Synchronizes the latest source code to the EFS mount point.

Creates artifacts to be utilized in subsequent stages.

Note: Utilize the default buildspec.yml as a reference and customize it further as per your requirement. See this link for more examples.
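The directory choice made by the build commands can be restated in a few lines of Python. This is only an illustration of the decision in the sample buildspec, not part of the solution; the function name `target_directory` is hypothetical.

```python
def target_directory(current_deployment: str) -> str:
    """Pick the EFS directory for the next revision.

    Mirrors the sample buildspec: the new revision goes to /efs/blue only
    when the live deployment is "green". Any other value, including "blue"
    and the initial "null", selects /efs/green.
    """
    if current_deployment == "green":
        return "/efs/blue"
    return "/efs/green"
```

Because the new revision is always written to the directory that is not serving traffic, the running application is left untouched during the build.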

2) Deploy Stage

The solution is utilizing CodeDeploy blue/green deployment type for EC2/On-premises. The deployment environment is configured to provision a new EC2 Auto Scaling group for every new deployment in order to deploy the new application revision. CodeDeploy creates the new Auto Scaling group by copying the current one. See this link for more details on blue/green deployment configuration with CodeDeploy. During each deployment event, CodeDeploy utilizes the appspec.yml file to run the deployment steps as per the defined life cycle hooks. Following is the sample AppSpec file utilized in this solution.

version: 0.0
os: linux
hooks:
  BeforeInstall:
    - location: scripts/install_dependencies
      timeout: 180
      runas: root
  AfterInstall:
    - location: scripts/app_deployment
      timeout: 180
      runas: root
  BeforeAllowTraffic:
    - location: scripts/check_app_status
      timeout: 180
      runas: root

Note: The scripts mentioned in the AppSpec file are available in the scripts directory of the CodeCommit repository. Utilize these sample scripts as a reference and modify as per your requirement.

For this sample, the following steps are conducted during a deployment:

BeforeInstall:

Installs required packages on the EC2 instance.

Mounts the EFS file system.

Creates a symbolic link that points the Apache home directory /var/www/html to the appropriate EFS directory. It also ensures that the new application version deploys to a different EFS directory without affecting the currently running application.
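The symbolic link step can be sketched in Python as follows. This is a minimal sketch and not the script shipped in the repository: the function name `point_link_at` is hypothetical, and it assumes the link path is either absent or already a symbolic link (the rename fails if it is a real, non-empty directory).

```python
import os


def point_link_at(link_path: str, target_dir: str) -> None:
    """Create or re-point the symbolic link at link_path to target_dir.

    A temporary link is created first and then renamed over the old one,
    so readers never see a missing link in between.
    """
    temp_link = link_path + ".tmp"
    if os.path.lexists(temp_link):
        os.remove(temp_link)
    os.symlink(target_dir, temp_link)
    # On POSIX systems the rename replaces an existing symlink in one step.
    os.replace(temp_link, link_path)


# Example usage (paths taken from this post, run as root on the instance):
#   point_link_at("/var/www/html", "/efs/green")
```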

AfterInstall:

Stops the Apache web server.

Fetches the current EFS directory name from Systems Manager.

Runs some clean up commands.

Restarts the Apache web server.

BeforeAllowTraffic:

Checks whether the application is running.

Exits the deployment with an error if the app returns a non-200 HTTP status code.
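The BeforeAllowTraffic check can be approximated in Python. This is a hedged sketch rather than the repository's check_app_status script: the function names and the `http://localhost/` URL are assumptions for illustration.

```python
import sys
import urllib.error
import urllib.request


def fetch_status(url: str, timeout: float = 5.0) -> int:
    """Return the HTTP status code for url, or 0 if the request fails."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # The server answered, but with an error status such as 404 or 503.
        return error.code
    except (urllib.error.URLError, OSError):
        # No answer at all (connection refused, timeout, DNS failure).
        return 0


def exit_code_for(status: int) -> int:
    """Map an HTTP status to a process exit code: 0 only for HTTP 200."""
    return 0 if status == 200 else 1


def main() -> None:
    # Assumption: the application answers on localhost port 80.
    sys.exit(exit_code_for(fetch_status("http://localhost/")))
```

A non-zero exit code from a lifecycle hook script causes CodeDeploy to mark that lifecycle event as failed, which is what stops traffic from being shifted to an unhealthy instance.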

3) Lambda Stage

After completing the deploy stage, CodePipeline triggers a Lambda function in order to update the SSM parameter value with the updated EFS directory name. This parameter value alternates between “blue” and “green” to help CodePipeline identify the right EFS file system path during the next deployment.
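A Lambda function of this kind could look roughly like the sketch below. It is not the function from the CloudFormation template: the helper names and the `SSM_PARAMETER` environment variable are assumptions, and boto3 is imported inside the handler because it is provided by the Lambda Python runtime.

```python
import os
from typing import Any


def next_deployment(current: str) -> str:
    """Return the value to store after a successful deployment.

    "green" flips to "blue"; anything else (including the initial "null"
    and "blue") becomes "green".
    """
    return "blue" if current == "green" else "green"


def flip_parameter(ssm_client: Any, name: str) -> str:
    """Read the parameter, flip it, write it back, and return the new value."""
    current = ssm_client.get_parameter(Name=name)["Parameter"]["Value"]
    updated = next_deployment(current)
    ssm_client.put_parameter(Name=name, Value=updated, Type="String", Overwrite=True)
    return updated


def handler(event: dict, context: Any) -> None:
    import boto3  # available in the AWS Lambda Python runtime

    # Assumption: the parameter name is passed through an environment variable.
    flip_parameter(boto3.client("ssm"), os.environ["SSM_PARAMETER"])
    # A Lambda action invoked by CodePipeline must report its result back.
    boto3.client("codepipeline").put_job_success_result(
        jobId=event["CodePipeline.job"]["id"]
    )
```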

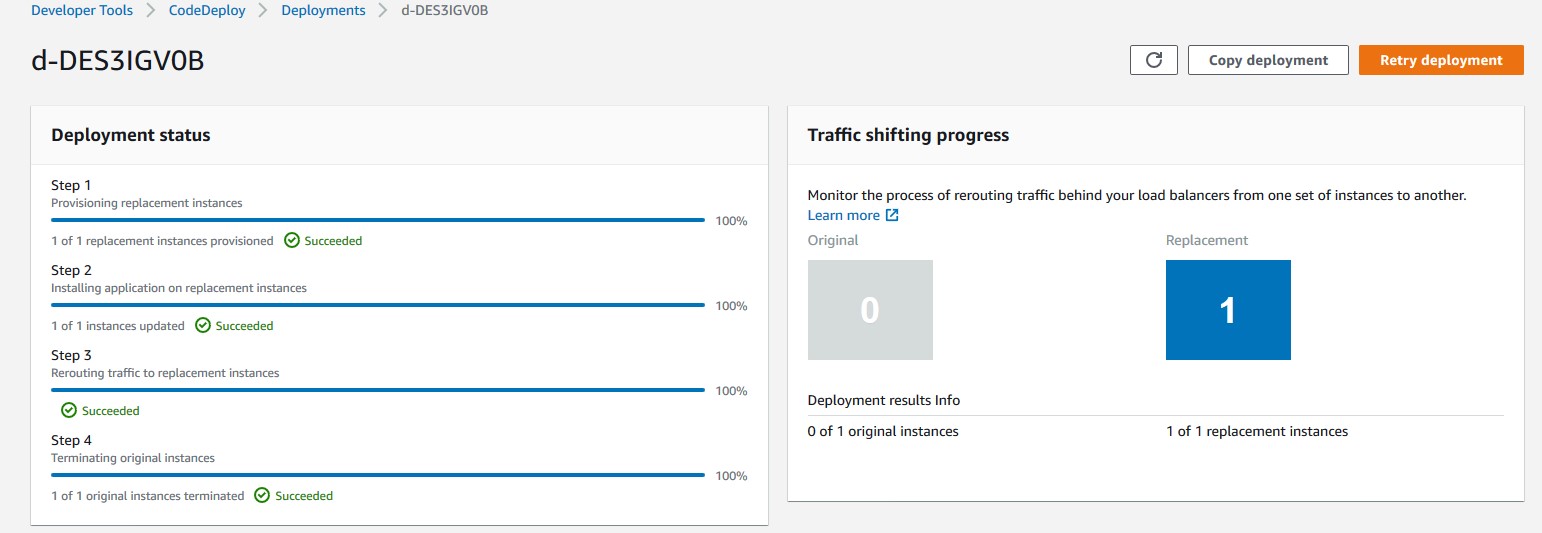

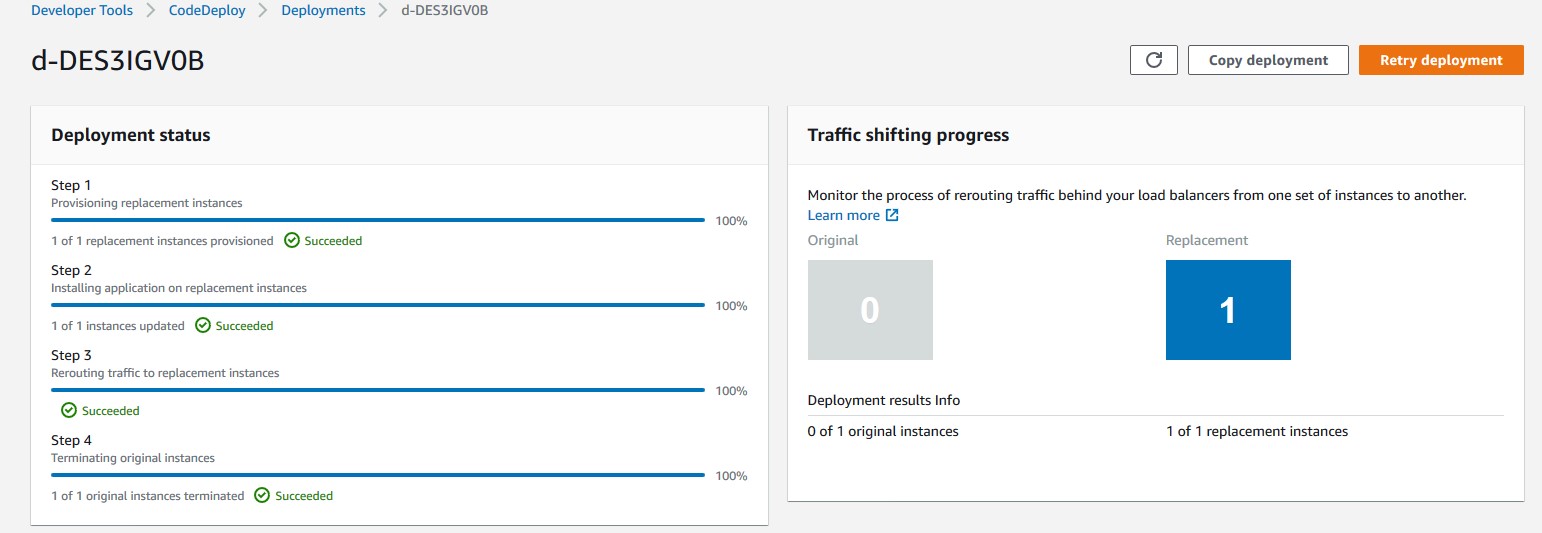

CodeDeploy Blue/Green deployment

Let’s review the sequence of events during the CodeDeploy deployment:

- CodeDeploy creates a new Auto Scaling group by copying the original one.

- Provisions a replacement EC2 instance in the new Auto Scaling Group.

- Conducts the deployment on the new instance as per the instructions in the yml file.

- Sets up health checks and redirects traffic to the new instance.

- Terminates the original instance along with the Auto Scaling Group.

- After completing the deployment, it should appear as shown in Figure 10.

Figure 10: AWS console view of a Blue/Green CodeDeploy deployment on EC2

Troubleshooting

To troubleshoot any service-related issues, see the following links:

Troubleshooting AWS CloudFormation

Troubleshooting AWS CodePipeline

Troubleshooting AWS CodeDeploy

Troubleshooting AWS CodeCommit

Troubleshooting AWS CodeBuild

Troubleshooting Amazon EFS file system

More information

Now that you have tested the solution, here are some additional points worth noting:

The sample template and code utilized in this blog can work in any AWS region and are mainly intended for demonstration purposes. Utilize the sample as a reference and modify it further as per your requirement.

This solution works with a single account, Region, and VPC combination.

For this sample, we have utilized AWS CodeCommit as version control, but you can also utilize any other source supported by AWS CodePipeline, such as Bitbucket, GitHub, or GitHub Enterprise Server.

Clean up

Follow these steps to delete the components and avoid incurring future charges:

- Open the AWS CloudFormation console.

- On the Stacks page in the CloudFormation console, select the stack that you created for this blog post. The stack must be currently running.

- In the stack details pane, choose Delete.

- Select Delete stack when prompted.

- Empty and delete the S3 bucket created during deployment step 1.

Conclusion

In this blog post, you learned how to set up a complete CI/CD pipeline for conducting a blue/green deployment on EC2 instances utilizing Amazon EFS file share as mount point to host application source code. The EFS share will be the central location hosting your application content, and it will help reduce your overall deployment time by eliminating the need for deploying a new revision on every EC2 instance local storage. It also helps to preserve any dynamically generated content when the life of an EC2 instance ends.

Author bio

Rakesh Singh


Rakesh is a Senior Technical Account Manager at Amazon. He loves automation and enjoys working directly with customers to solve complex technical issues and provide architectural guidance. Outside of work, he enjoys playing soccer, singing karaoke, and watching thriller movies.

Choosing a Well-Architected CI/CD approach: Open Source on AWS

=======================

Introduction

When building a CI/CD platform, it is important to make an informed decision regarding every underlying tool. This post explores evaluating the criteria for selecting each tool focusing on a balance between meeting functional and non-functional requirements, and maximizing value.

Your first decision: source code management.

Source code is potentially your most valuable asset, and so we start by choosing a source code management tool. These tools normally have high non-functional requirements in order to protect your assets and to ensure they are available to the organization when needed. The requirements usually include demand for high durability, high availability (HA), consistently high throughput, and strong security with role-based access controls.

At the same time, source code management tools normally have many specific functional requirements as well. For example, the ability to provide collaborative code review in the UI, flexible and tunable merge policies including both automated and manual gates (code checks), and out-of-box UI-level integrations with numerous other tools. These kinds of integrations can include enabling monitoring, CI, chats, and agile project management.

Many teams also treat source code management tools as their portal into other CI/CD tools. They make them shareable between teams, and might prefer to stay within one single context and user interface throughout the entire DevOps cycle. Many source code management tools are actually a stack of services that support multiple steps of your CI/CD workflows from within a single UI. This makes them an excellent starting point for building your CI/CD platforms.

The first decision you need to make is whether to go with an open source solution for managing code or with AWS-managed solutions, such as AWS CodeCommit. Open source solutions include (but are not limited to) the following: Gerrit, GitLab, Gogs, and Phabricator.

Your decision will be influenced by the amount of benefit your team can gain from the flexibility provided through open source, and how well your team can support deploying and managing these solutions. You will also need to consider the infrastructure and management overhead cost.

Engineering teams that have the capacity to develop their own plugins for their CI/CD platforms, or who even contribute directly to open source projects, will often prefer open source solutions for the flexibility they provide. This will be especially true if they are fluent in designing and supporting their own cloud infrastructure. If the team gets more value by trading the flexibility of open source for not having to worry about managing infrastructure (especially if High Availability, Scalability, Durability, and Security are more critical), an AWS-managed solution would be a better choice.

Source Code Management Solution

When the choice is made in favor of an open-source code management solution (such as Gitlab), the next decision will be how to architect the deployment. Will the team deploy to a single instance, or design for high availability, durability, and scalability? Teams that want to design Gitlab for HA can use the following guide to proceed: Installing GitLab on Amazon Web Services (AWS)

By adopting AWS services (such as Amazon RDS, Amazon ElastiCache for Redis, and Auto Scaling groups), you can lower the management burden of supporting the underlying infrastructure in this self-managed HA scenario.

High level overview of self-managed HA Gitlab deployment

Your second decision: Continuous Integration engine

When selecting your CI engine, you might be able to benefit from additional features of previously selected solutions. GitLab provides source control services as well as built-in CI tools, called GitLab CI. GitLab Runners are responsible for running CI jobs, and the actual jobs are described as YML files stored in GitLab’s git repository along with product code. For security and performance reasons, GitLab Runners should be on resources separate from your GitLab instance.

You could manage those resources or you could use one of the AWS services that can support deploying and managing Runners. The use of an on-demand service removes the expense of implementing and managing a capability that is undifferentiated heavy lifting for you. This provides cost optimization and enables operational excellence. You pay for what you use and the service team manages the underlying service.

Continuous Integration engine Solution

In an architecture example (below), Gitlab Runners are deployed in containers running on Amazon EKS. The team has less infrastructure to manage, can start focusing on development faster by not having to implement the capability, and can provision resources in an optimal way for their on-demand needs.

To further optimize costs, you can use EC2 Spot Instances for your EKS nodes. CI jobs are normally compute intensive and limited in run time. The runner jobs can easily be restarted on a different resource with little impact. This makes them tolerant of failure and the use of EC2 Spot instances very appealing. Amazon EKS and Spot Instances are supported out-of-box in Gitlab. As a result there is no integration to develop, only configuration is required.

To support infrastructure as code best practices, Runners are deployed with Helm and are stored and versioned as Helm charts. All of the infrastructure as code information used to implement the CI/CD platform itself is stored in templates such as Terraform.

High level overview of Infrastructure as Code on Gitlab and Gitlab CI

Your third decision: Container Registry

You will be unable to deploy Runners if the container images are not available. As a result, the primary non-functional requirements for your production container registry are likely to include high availability, durability, transparent scalability, and security. At the same time, your functional requirements for a container registry might be lower. It might be sufficient to have a simple UI, and simple APIs supporting basic flows. Customers looking for a managed solution can use Amazon ECR, which is OCI compliant and supports Helm Charts.

Container Registry Solution

For this set of requirements, the flexibility and feature velocity of open source tools does not provide an advantage. Self-supporting high availability and strengthened security could be costly in implementation time and long-term management. Based on [Blog post 1 Diagram 1], an AWS-managed solution provides cost advantages and has no management overhead. In this case, an AWS-managed solution is a better choice for your container registry than an open-source solution hosted on AWS. In this example, Amazon ECR is selected. Customers who prefer to go with open-source container registries might consider solutions like Harbor.

High level overview of Gitlab CI with Amazon ECR

Additional Considerations

Now that the main services for the CI/CD platform are selected, we will take a high level look at additional important considerations. You need to make sure you have observability into both infrastructure and applications, that backup tools and policies are in place, and that security needs are addressed.

There are many mechanisms to strengthen security, including the use of security groups. Use IAM for granular permission control. Robust policies can limit the exposure of your resources and control the flow of traffic. Implement policies to prevent your assets from leaving your CI environment inappropriately. To protect sensitive data, such as worker secrets, encrypt these assets while in transit and at rest. Select a key management solution, such as AWS Key Management Service (AWS KMS), to reduce your operational burden and to support these activities. To deliver secure and compliant application changes rapidly while running operations consistently with automation, implement DevSecOps.

Amazon S3 is durable, secure, and highly available by design, making it the preferred choice of many customers for storing EBS-level backups. Amazon S3 satisfies the non-functional requirements for a backup store. It also supports versioning and tiered storage classes, making it cost-effective as well.

Your observability requirements may emphasize versatility and flexibility for application-level monitoring. Using Amazon CloudWatch to monitor your infrastructure and then extending your capabilities through open-source solutions such as Prometheus may be advantageous. You can get many of the benefits of both open-source Prometheus and AWS services with Amazon Managed Service for Prometheus (AMP). For interactive visualization of metrics, many customers choose solutions such as open-source Grafana, which is available as the AWS service Amazon Managed Service for Grafana (AMG).

CI/CD Platform with Gitlab and AWS

Conclusion

We have covered how making informed decisions can maximize value and synergy between open-source solutions on AWS, such as GitLab, and AWS-managed services, such as Amazon EKS and Amazon ECR. You can find the right balance of open-source tools and AWS services that will meet your functional and non-functional requirements, and help maximize the value you get from those resources.

Pete Goldberg, Director of Partnerships at GitLab: “When aligning your development process to AWS Well Architected Framework, GitLab allows customers to build and automate processes to achieve Operational Excellence. As a single tool designed to facilitate collaboration across the organization, GitLab simplifies the process to follow the Fully Separated Operating Model where Engineering and Operations come together via automated processes that remove the historical barriers between the groups. This gives organizations the ability to efficiently and rapidly deploy new features and applications that drive the business while providing the risk mitigation and compliance they require. By allowing operations teams to define infrastructure as code in the same tool that the engineering teams are storing application code, and allowing your automation bring those together for your CI/CD workflows companies can move faster while having compliance and controls built-in, providing the entire organization greater transparency. With GitLab’s integrations with different AWS compute options (EC2, Lambda, Fargate, ECS or EKS), customers can choose the best type of compute for the job without sacrificing the controls required to maintain Operational Excellence.”

Author bio

Mikhail is a Solutions Architect for RUS-CIS. Mikhail supports customers on their cloud journeys with Well-architected best practices and adoption of DevOps techniques on AWS. Mikhail is a fan of ChatOps, Open Source on AWS and Operational Excellence design principles.


Use the Snyk CLI to scan Python packages using AWS CodeCommit, AWS CodePipeline, and AWS CodeBuild

=======================

One of the primary advantages of working in the cloud is achieving agility in product development. You can adopt practices like continuous integration and continuous delivery (CI/CD) and GitOps to increase your ability to release code at quicker iterations. Development models like these demand agility from security teams as well. This means your security team has to provide the tooling and visibility to developers for them to fix security vulnerabilities as quickly as possible.

Vulnerabilities in cloud-native applications can be roughly classified into infrastructure misconfigurations and application vulnerabilities. In this post, we focus on enabling developers to scan Python open-source packages for vulnerabilities using the Snyk Command Line Interface (CLI).

The world of package dependencies

Traditionally, code scanning is performed by the security team; they either ship the code to the scanning instance, or in some cases ship it to the vendor for vulnerability scanning. After the vendor finishes the scan, the results are provided to the security team and forwarded to the developer. The end-to-end process of organizing the repositories, sending the code to security team for scanning, getting results back, and remediating them is counterproductive to the agility of working in the cloud.

Let’s take an example of package A, which uses package B and C. To scan package A, you scan package B and C as well. Similar to package A having dependencies on B and C, packages B and C can have their individual dependencies too. So the dependencies for each package get complex and cumbersome to scan over time. The ideal method is to scan all the dependencies in one go, without having manual intervention to understand the dependencies between packages.
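To make the package A, B, and C example concrete, here is a small sketch of how a full dependency set can be collected from a graph in one pass. It only illustrates the idea of transitive dependencies and is not how Snyk works internally; the function name and the sample graph are hypothetical.

```python
from typing import Dict, List, Set


def transitive_dependencies(graph: Dict[str, List[str]], package: str) -> Set[str]:
    """Return every direct and indirect dependency of package.

    graph maps a package name to the list of packages it depends on.
    """
    seen: Set[str] = set()
    pending = list(graph.get(package, []))
    while pending:
        name = pending.pop()
        if name in seen:
            continue  # already visited; also protects against cycles
        seen.add(name)
        pending.extend(graph.get(name, []))
    seen.discard(package)  # a cycle must not list the package as its own dependency
    return seen


# Hypothetical graph: A uses B and C, which have dependencies of their own.
example_graph = {"A": ["B", "C"], "B": ["D"], "C": ["D", "E"]}
print(sorted(transitive_dependencies(example_graph, "A")))
```

Scanning package A therefore means scanning B, C, D, and E as well, which is why a tool that resolves the whole tree automatically is preferable to tracking dependencies by hand.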

Building on the foundation of GitOps and Gitflow

GitOps was introduced in 2017 by Weaveworks as a DevOps model to implement continuous deployment for cloud-native applications. It focuses on the developer ability to ship code faster. Because security is a non-negotiable piece of any application, this solution includes security as part of the deployment process. We define the Snyk scanner as declarative and immutable AWS Cloud Development Kit (AWS CDK) code, which instructs new Python code committed to the repository to be scanned.

Another continuous delivery practice that we base this solution on is Gitflow. Gitflow is a strict branching model that enables project release by enforcing a framework for managing Git projects. As a brief introduction on Gitflow, typically you have a main branch, which is the code sent to production, and you have a development branch where new code is committed. After the code in development branch passes all tests, it’s merged to the main branch, thereby becoming the code in production. In this solution, we aim to provide this scanning capability in all your branches, providing security observability through your entire Gitflow.

AWS services used in this solution

We use the following AWS services as part of this solution:

AWS CDK – The AWS CDK is an open-source software development framework to define your cloud application resources using familiar programming languages. In this solution, we use Python to write our AWS CDK code.

AWS CodeBuild – CodeBuild is a fully managed build service in the cloud. CodeBuild compiles your source code, runs unit tests, and produces artifacts that are ready to deploy. CodeBuild eliminates the need to provision, manage, and scale your own build servers.

AWS CodeCommit – CodeCommit is a fully managed source control service that hosts secure Git-based repositories. It makes it easy for teams to collaborate on code in a secure and highly scalable ecosystem. CodeCommit eliminates the need to operate your own source control system or worry about scaling its infrastructure. You can use CodeCommit to securely store anything from source code to binaries, and it works seamlessly with your existing Git tools.

AWS CodePipeline – CodePipeline is a continuous delivery service you can use to model, visualize, and automate the steps required to release your software. You can quickly model and configure the different stages of a software release process. CodePipeline automates the steps required to release your software changes continuously.

Amazon EventBridge – EventBridge rules deliver a near-real-time stream of system events that describe changes in AWS resources. With simple rules that you can quickly set up, you can match events and route them to one or more target functions or streams.

AWS Systems Manager Parameter Store – Parameter Store, a capability of AWS Systems Manager, provides secure, hierarchical storage for configuration data management and secrets management. You can store data such as passwords, database strings, Amazon Machine Image (AMI) IDs, and license codes as parameter values.

Prerequisites

Before you get started, make sure you have the following prerequisites:

An AWS account (use a Region that supports CodeCommit, CodeBuild, Parameter Store, and CodePipeline)

A Snyk account

An existing CodeCommit repository you want to test on

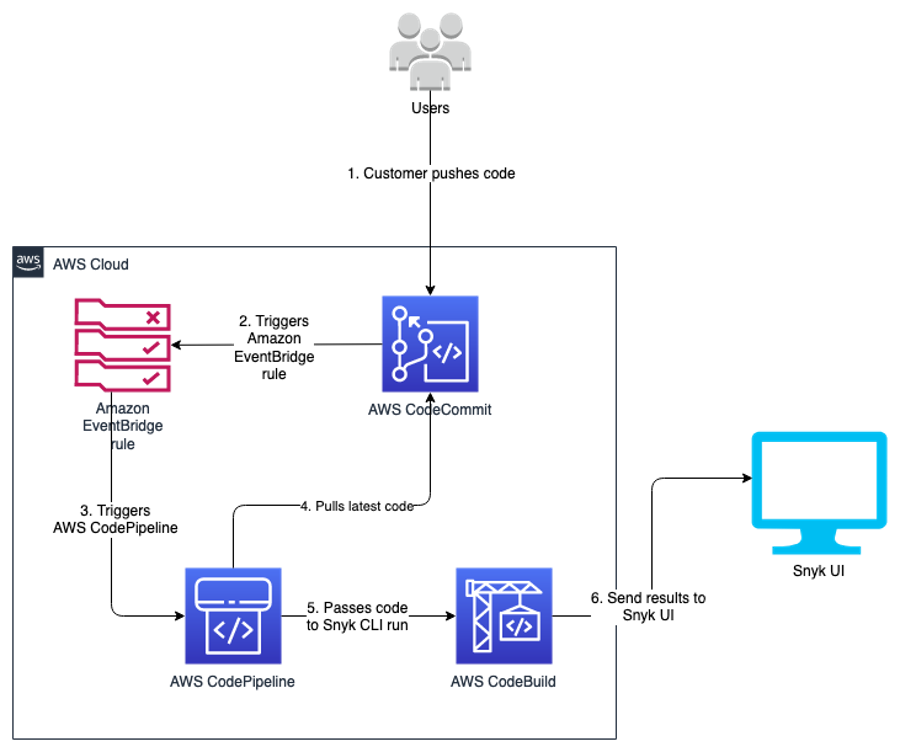

Architecture overview

After you complete the steps in this post, you will have a working pipeline that scans your Python code for open-source vulnerabilities.

We use the Snyk CLI, which is available to customers on all plans, including the Free Tier, and provides the ability to programmatically scan repositories for vulnerabilities in open-source dependencies as well as base image recommendations for container images. The following reference architecture represents a general workflow of how Snyk performs the scan in an automated manner. The design uses DevSecOps principles of automation, event-driven triggers, and keeping humans out of the loop for its run.

As developers keep working on their code, they continue to commit their code to the CodeCommit repository. Upon each commit, a CodeCommit API call is generated, which is then captured using the EventBridge rule. You can customize this event rule for a specific event or feature branch you want to trigger the pipeline for.
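As a rough illustration of what such a rule might match, the following Python sketch builds an event pattern for pushes to a single branch. It assumes the rule listens for the `CodeCommit Repository State Change` event rather than a CloudTrail API-call event, so the rule in the sample repository may look different. The helper names, the account ID, and the simplified local matcher are hypothetical and exist only to show the shape of the pattern.

```python
import json


def build_commit_event_pattern(repo_arn: str, branch: str) -> dict:
    """Return an EventBridge event pattern for pushes to one branch."""
    return {
        "source": ["aws.codecommit"],
        "detail-type": ["CodeCommit Repository State Change"],
        "resources": [repo_arn],
        "detail": {
            "event": ["referenceCreated", "referenceUpdated"],
            "referenceType": ["branch"],
            "referenceName": [branch],
        },
    }


def matches(pattern: dict, event: dict) -> bool:
    """Simplified local matcher (an assumption, not the EventBridge engine).

    Every key in the pattern must be present in the event, and the event
    value must be one of the values listed in the pattern.
    """
    for key, expected in pattern.items():
        actual = event.get(key)
        if isinstance(expected, dict):
            if not isinstance(actual, dict) or not matches(expected, actual):
                return False
        elif isinstance(actual, list):
            if not any(item in expected for item in actual):
                return False
        elif actual not in expected:
            return False
    return True


if __name__ == "__main__":
    # Hypothetical ARN, used only for illustration.
    arn = "arn:aws:codecommit:us-east-1:111122223333:snyk-repo"
    print(json.dumps(build_commit_event_pattern(arn, "main"), indent=2))
```

Changing the `referenceName` list is one way to point the same rule at a feature branch instead of main.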

When the developer commits code to the specified branch, that EventBridge event rule triggers a CodePipeline pipeline. This pipeline has a build stage using CodeBuild. This stage interacts with the Snyk CLI, and uses the token stored in Parameter Store. The Snyk CLI uses this token as authentication and starts scanning the latest code committed to the repository. When the scan is complete, you can review the results on the Snyk console.
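The build stage described above can be sketched in Python as follows. This is not the buildspec from the sample repository; it is a minimal sketch that assumes the token is read with the Systems Manager `get_parameter` call and handed to the Snyk CLI through the `SNYK_TOKEN` environment variable. The function names and the `ssm_client` argument (for example, `boto3.client("ssm")`) are hypothetical.

```python
import os
import subprocess


def get_snyk_token(ssm_client, parameter_name: str) -> str:
    """Read a SecureString parameter; WithDecryption returns the plaintext."""
    response = ssm_client.get_parameter(Name=parameter_name, WithDecryption=True)
    return response["Parameter"]["Value"]


def build_snyk_commands(org_id: str, manifest: str = "requirements.txt") -> list:
    """Return the Snyk CLI invocations for a pip project.

    `snyk test` reports vulnerabilities in the build log, and `snyk monitor`
    uploads a snapshot so the results appear on the Snyk console.
    """
    common = [f"--file={manifest}", "--package-manager=pip", f"--org={org_id}"]
    return [["snyk", "test", *common], ["snyk", "monitor", *common]]


def run_scan(ssm_client, token_parameter: str, org_id: str) -> list:
    """Run both commands and return their exit codes."""
    env = dict(os.environ, SNYK_TOKEN=get_snyk_token(ssm_client, token_parameter))
    return [subprocess.run(cmd, env=env).returncode
            for cmd in build_snyk_commands(org_id)]
```

Note that `snyk test` exits with a non-zero code when it finds vulnerabilities, so a build that should still publish results to the console needs to tolerate that exit code.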

This code is built for Python pip packages. You can edit the buildspec.yml file to support any other language that Snyk supports.

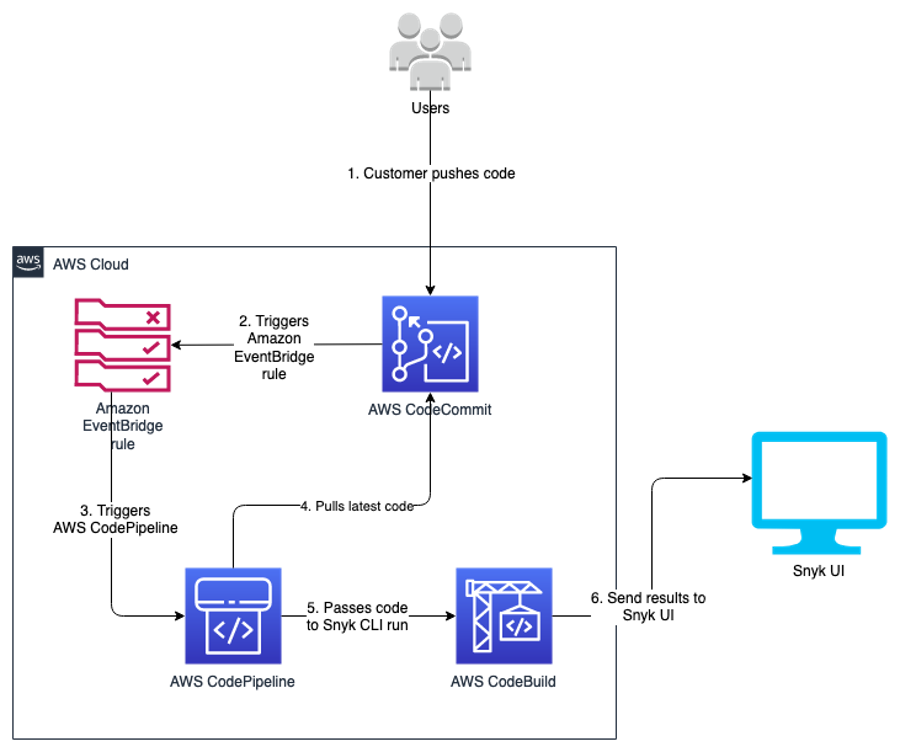

The following diagram illustrates our architecture.

Code overview

The code in this post is written using the AWS CDK in Python. If you’re not familiar with the AWS CDK, we recommend reading Getting started with AWS CDK before you customize and deploy the code.

Repository URL: https://github.com/aws-samples/aws-cdk-codecommit-snyk

This AWS CDK construct uses the Snyk CLI within the CodeBuild job in the pipeline to scan the Python packages for open-source package vulnerabilities. The construct uses CodePipeline to create a two-stage pipeline: one source, and one build (the Snyk scan stage). The construct takes the input of the CodeCommit repository you want to scan, the Snyk organization ID, and Snyk auth token.

Resources deployed

This solution deploys the following resources:

An EventBridge rule

A CodeBuild project

Four AWS Identity and Access Management (IAM) roles with inline policies

A CodePipeline pipeline

An Amazon Simple Storage Service (Amazon S3) bucket

An AWS Key Management Service (AWS KMS) key and alias

For the deployment, we use the AWS CDK construct in the codebase cdk_snyk_construct/cdk_snyk_construct_stack.py in the AWS CDK stack cdk-snyk-stack. The construct requires the following parameters:

ARN of the CodeCommit repo you want to scan

Name of the repository branch you want to be monitored

Parameter Store name of the Snyk organization ID

Parameter Store name for the Snyk auth token
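To make the four inputs concrete, here is a small Python sketch that groups them and derives the repository name from the ARN. The `SnykScanInputs` class and its field names are hypothetical and are not part of the sample construct; the only fact relied on is the standard ARN layout, in which the repository name is the final colon-separated field.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SnykScanInputs:
    """Hypothetical container for the four construct parameters."""
    repo_arn: str
    branch: str
    org_id_parameter: str
    auth_token_parameter: str

    @property
    def repo_name(self) -> str:
        # CodeCommit ARN layout:
        # arn:<partition>:codecommit:<region>:<account-id>:<repository-name>
        parts = self.repo_arn.split(":", 5)
        if len(parts) != 6 or parts[2] != "codecommit" or not parts[5]:
            raise ValueError(f"not a CodeCommit repository ARN: {self.repo_arn!r}")
        return parts[5]
```

Validating the ARN before deployment gives an early, readable error instead of a failed stack update.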

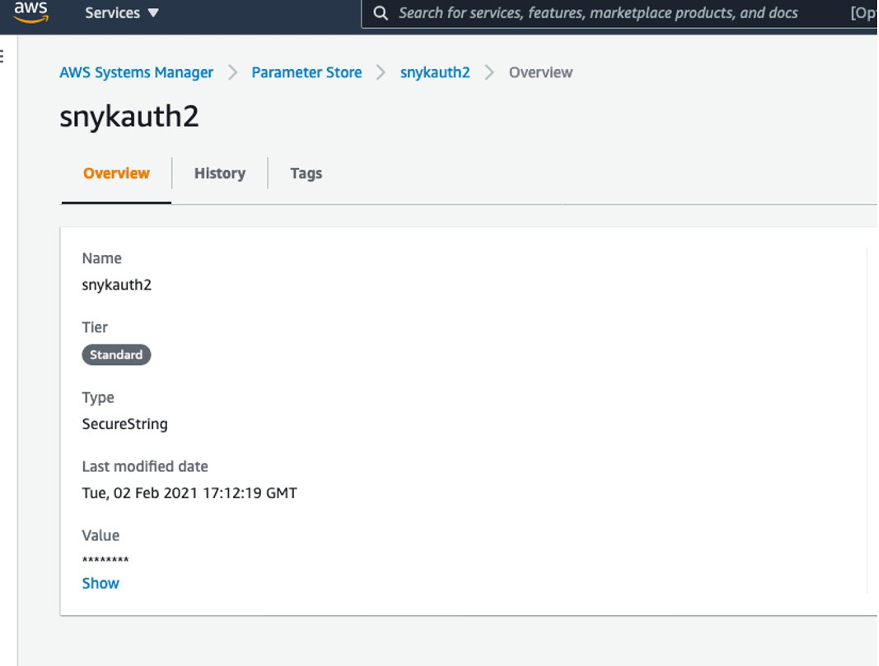

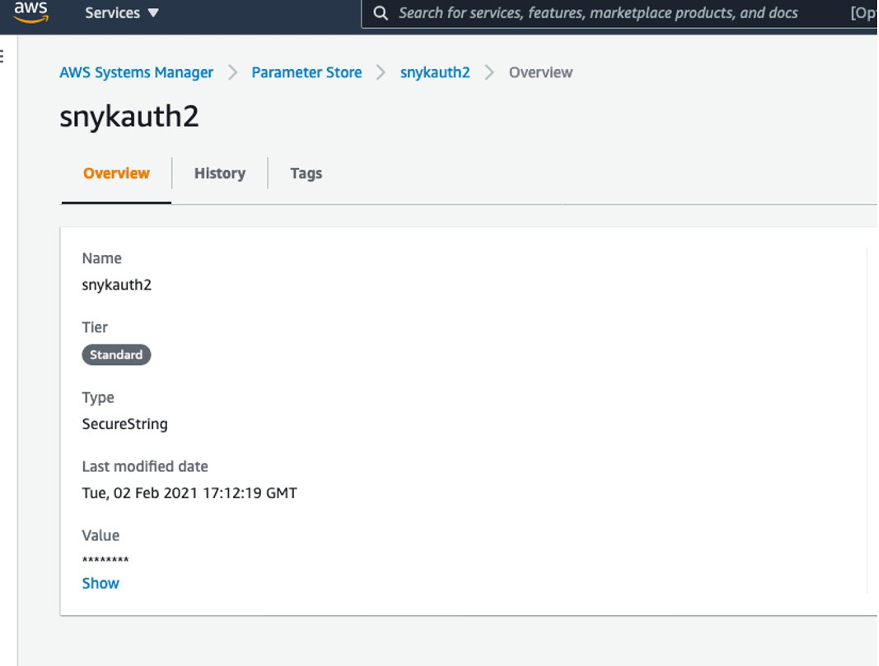

Set up the organization ID and auth token before deploying the stack. Because these are confidential and sensitive data, you should deploy them as a separate stack or manual process. In this solution, the parameters have been stored as a SecureString parameter type and encrypted using the AWS-managed KMS key.
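One way to script that separate step is sketched below in Python. It assumes a Systems Manager client such as `boto3.client("ssm")` is passed in; the function name and the `/snyk` parameter prefix are hypothetical. Because no `KeyId` is supplied, Parameter Store encrypts the values with the AWS managed key for Systems Manager.

```python
def store_snyk_secrets(ssm_client, org_id: str, auth_token: str,
                       prefix: str = "/snyk") -> dict:
    """Store both values as SecureString parameters and return their names."""
    names = {}
    for suffix, value in (("org-id", org_id), ("auth-token", auth_token)):
        name = f"{prefix}/{suffix}"
        ssm_client.put_parameter(
            Name=name,
            Value=value,
            Type="SecureString",  # encrypted at rest
            Overwrite=True,       # lets you rotate the token later
        )
        names[suffix] = name
    return names
```

The returned names are the values to pass to the stack, so the secrets themselves never appear in the deployment command.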

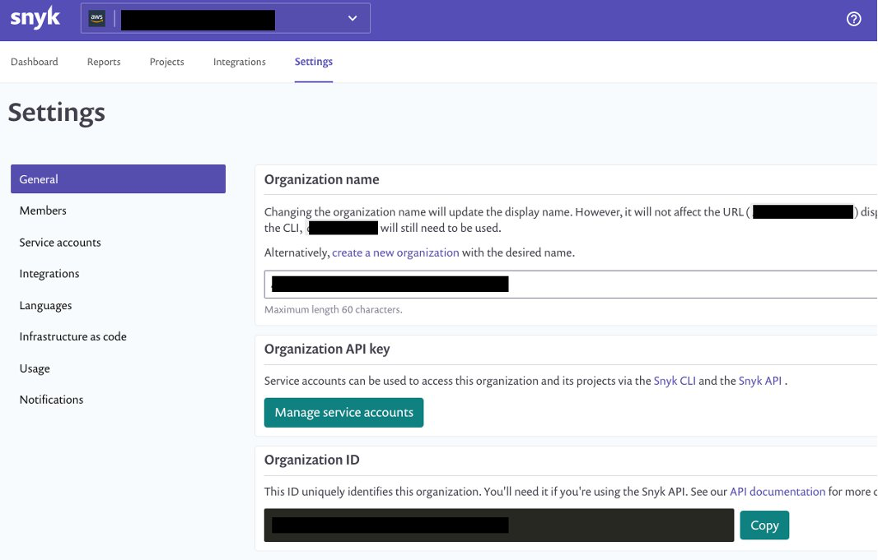

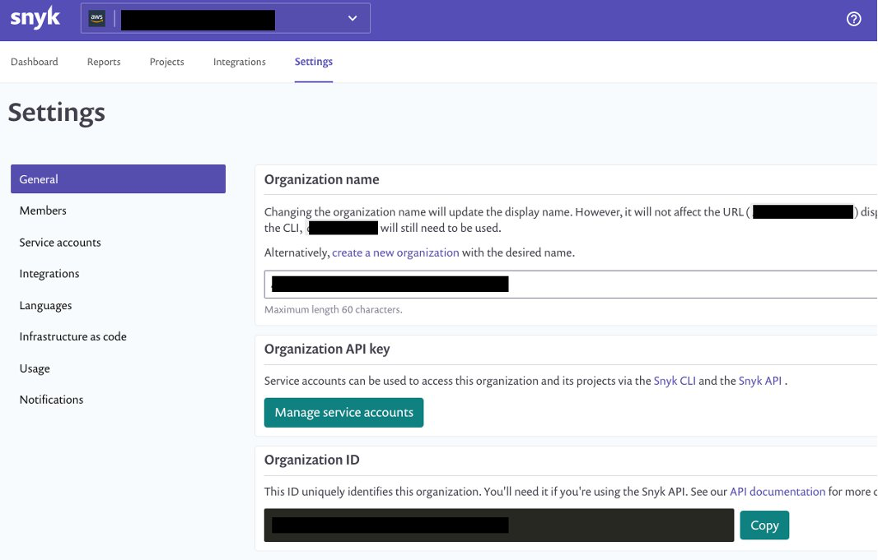

You create the organization ID and auth token on the Snyk console. On the Settings page, choose General in the navigation pane to find these values.

You can retrieve the names of the parameters on the Systems Manager console by navigating to Parameter Store and finding the name on the Overview tab.

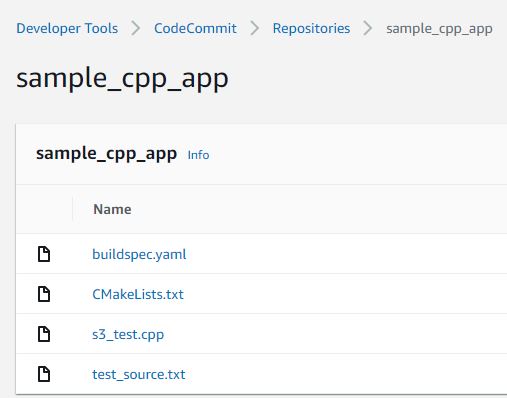

Create a requirements.txt file in the CodeCommit repository

We now create a repository in CodeCommit to store the code. For simplicity, we primarily store the requirements.txt file in our repository. In Python, a requirements file stores the packages that are used. Having clearly defined packages and versions makes it easier for development, especially in virtual environments.

For more information on the requirements file in Python, see Requirement Specifiers.

To create a CodeCommit repository, run the following AWS Command Line Interface (AWS CLI) command in your AWS account:

aws codecommit create-repository --repository-name snyk-repo \
    --repository-description "Repository for Snyk to scan Python packages"

Now let’s create a branch called main in the repository using the following command:

aws codecommit create-branch --repository-name snyk-repo \
    --branch-name main

After you create the repository, commit a file named requirements.txt with the following content. Each package is pinned to a version that has a known vulnerability. This file is our hypothetical set of vulnerable packages that have been committed into your development code.

PyYAML==5.3.1

Pillow==7.1.2

pylint==2.5.3

urllib3==1.25.8

For instructions on committing files in CodeCommit, see Connect to an AWS CodeCommit repository.

When you store the Snyk auth token and organization ID in Parameter Store, note the parameter names—you need to pass them as parameters during the deployment step.

Now clone the CDK code from the GitHub repository with the command below:

git clone https://github.com/aws-samples/aws-cdk-codecommit-snyk.git

After the cloning is complete you should see a directory named aws-cdk-codecommit-snyk on your machine.

When you’re ready to deploy, enter the aws-cdk-codecommit-snyk directory, and run the following command with the appropriate values:

cdk deploy cdk-snyk-stack \
    --parameters RepoName=<name-of-codecommit-repo> \
    --parameters RepoBranch=<branch-to-be-scanned> \
    --parameters SnykOrgId=<value> \
    --parameters SnykAuthToken=<value>

After the stack deployment is complete, you can see a new pipeline in your AWS account, which is configured to be triggered every time a commit occurs on the main branch.

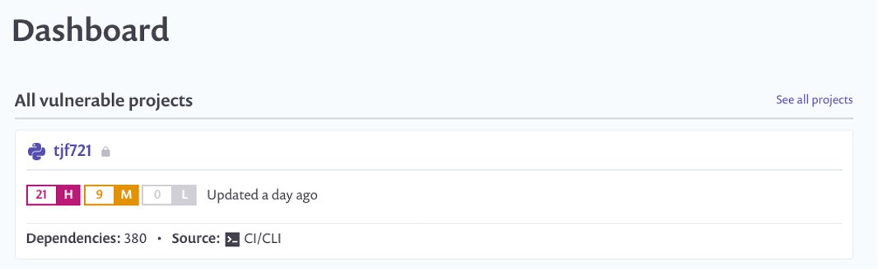

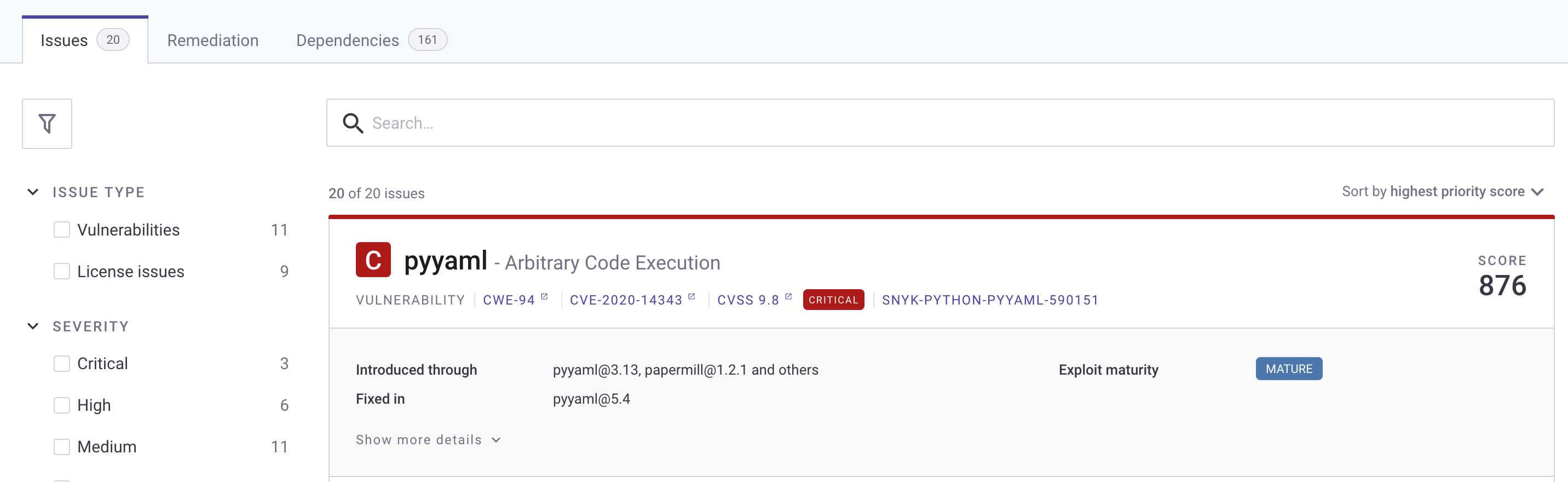

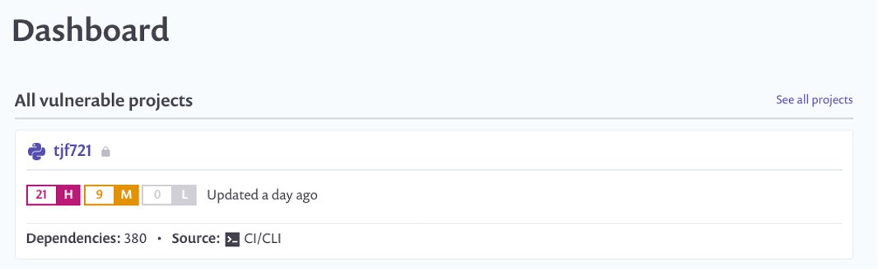

You can view the results of the scan on the Snyk console. After the pipeline runs, log in to snyk.io and you should see a project named after your repository (see the following screenshot).

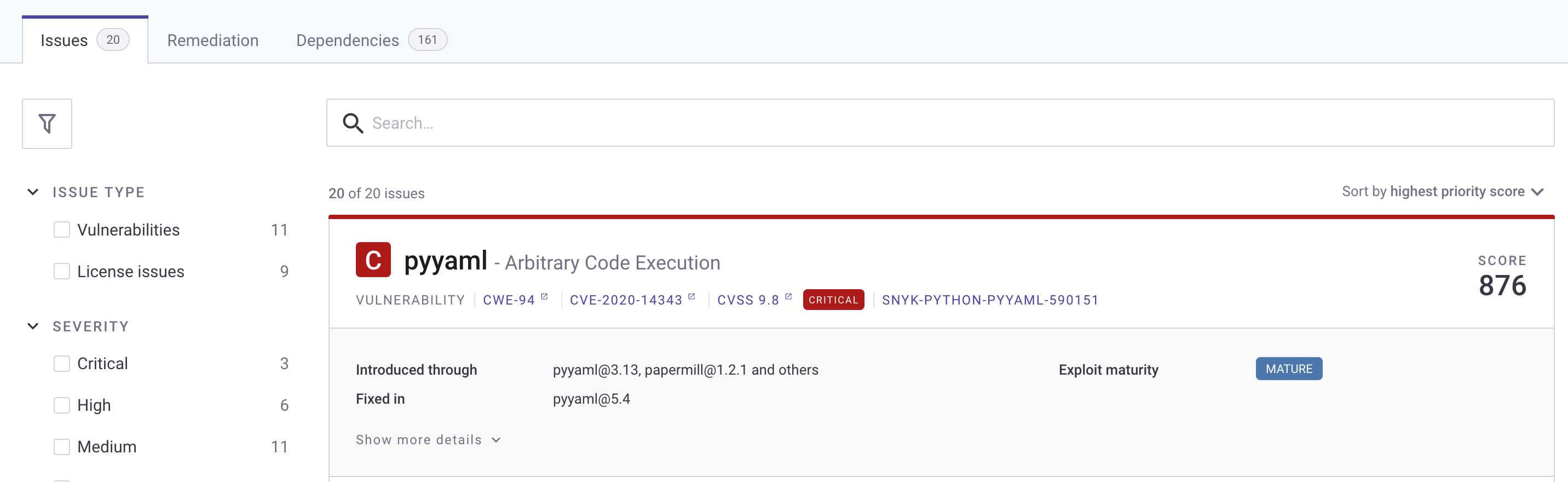

Choose the repo name to get a detailed view of the vulnerabilities found. Depending on what packages you put in your requirements.txt, your report will differ from the following screenshot.

To fix the vulnerabilities identified, you can change the versions of these packages in the requirements.txt file. The edited requirements file should look like the following:

PyYAML==5.4

Pillow==8.2.0

pylint==2.6.1

urllib3==1.25.9
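To check which pins changed between the two versions of requirements.txt shown in this post, a short Python sketch like the following can help. It handles only exact `==` pins and `#` comments, which is all this example needs; the function names are hypothetical.

```python
def parse_pins(text: str) -> dict:
    """Map lower-cased package names to their exactly pinned versions."""
    pins = {}
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        name, sep, version = line.partition("==")
        if not sep:
            raise ValueError(f"not an exact pin: {line!r}")
        pins[name.strip().lower()] = version.strip()
    return pins


def changed_pins(before: dict, after: dict) -> dict:
    """Return {package: (old_version, new_version)} for every changed pin."""
    return {
        name: (before[name], after[name])
        for name in before
        if name in after and before[name] != after[name]
    }


if __name__ == "__main__":
    old = parse_pins("PyYAML==5.3.1\nPillow==7.1.2\npylint==2.5.3\nurllib3==1.25.8\n")
    new = parse_pins("PyYAML==5.4\nPillow==8.2.0\npylint==2.6.1\nurllib3==1.25.9\n")
    for package, (was, now) in changed_pins(old, new).items():
        print(f"{package}: {was} -> {now}")
```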

After you update the requirements.txt file in your repository, push your changes back to the CodeCommit repository you created earlier on the main branch. The push starts the pipeline again.

After the commit is pushed to the targeted branch, the PyYAML vulnerability is no longer reported on the Snyk dashboard because the pinned version 5.4 doesn’t contain that vulnerability.

Clean up

To avoid accruing further cost for the resources deployed in this solution, run cdk destroy to remove all the AWS resources you deployed through CDK.

Because the CodeCommit repository was created using the AWS CLI, cdk destroy doesn’t remove it. Delete the repository with the following command:

aws codecommit delete-repository --repository-name snyk-repo

Conclusion

In this post, we provided a solution so developers can self-remediate vulnerabilities in their code by monitoring it through Snyk. This solution provides observability, agility, and security for your Python application by following DevOps principles.

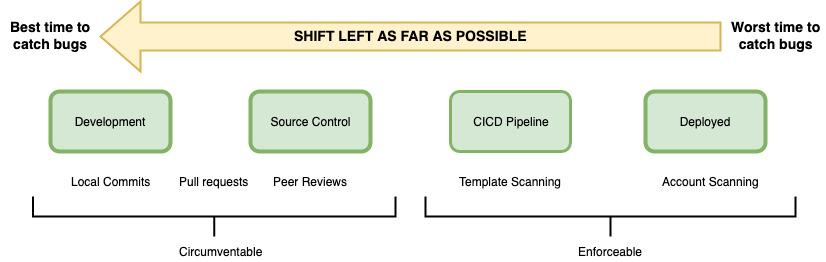

A similar architecture has been used at the NFL to shift left the security of their code. According to the shift-left design principle, security should be moved closer to the developers to identify and remediate security issues earlier in the development cycle. With this architecture, the NFL made the total process, from committing code on the branch to remediation, 15 times faster than with their previous code scanning setup.

Here’s what the NFL has to say about their experience:

“NFL used Snyk to scan Python packages for a service launch. Traditionally it would have taken 10 days to scan the packages through our existing process but with Snyk we were able to follow DevSecOps principles and get the scans completed and reviewed within a matter of days. This simplified our time to market while maintaining visibility into our security posture.” – Joe Steinke (Director, Data Solution Architect)

Building a centralized Amazon CodeGuru Profiler dashboard for multi-account scenarios

=======================

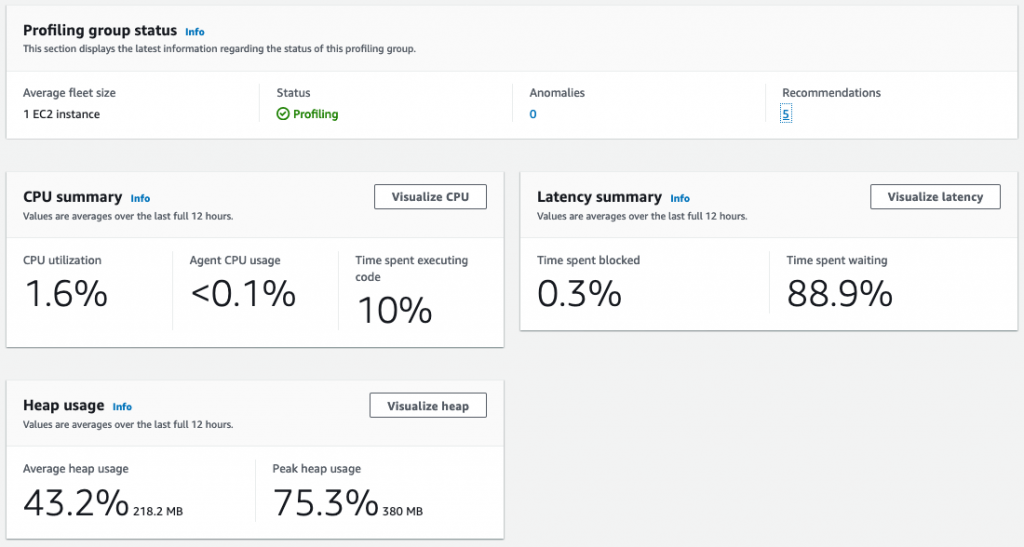

Amazon CodeGuru is a machine learning service for development teams who want to automate code reviews, identify the most expensive lines of code in their applications, and receive intelligent recommendations on how to fix or improve their code. CodeGuru has two components: CodeGuru Profiler and CodeGuru Reviewer.

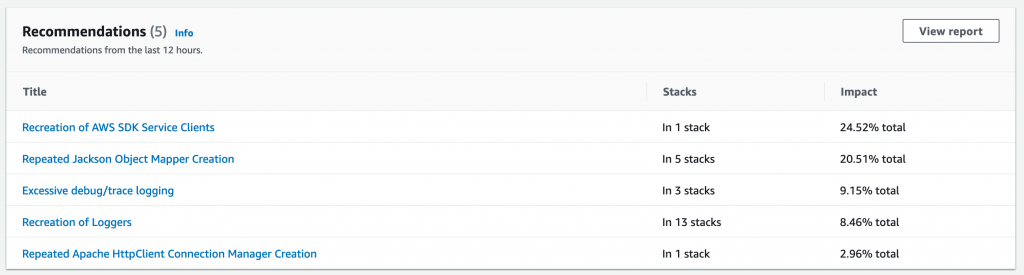

CodeGuru Profiler searches for application performance optimizations and recommends ways to fix issues such as excessive recreation of expensive objects, expensive deserialization, usage of inefficient libraries, and excessive logging. CodeGuru Profiler runs continuously, consuming minimal CPU capacity so it doesn’t significantly impact application performance. You can run an agent or import libraries into your application and send the data collected to CodeGuru, and review the findings on the CodeGuru console.

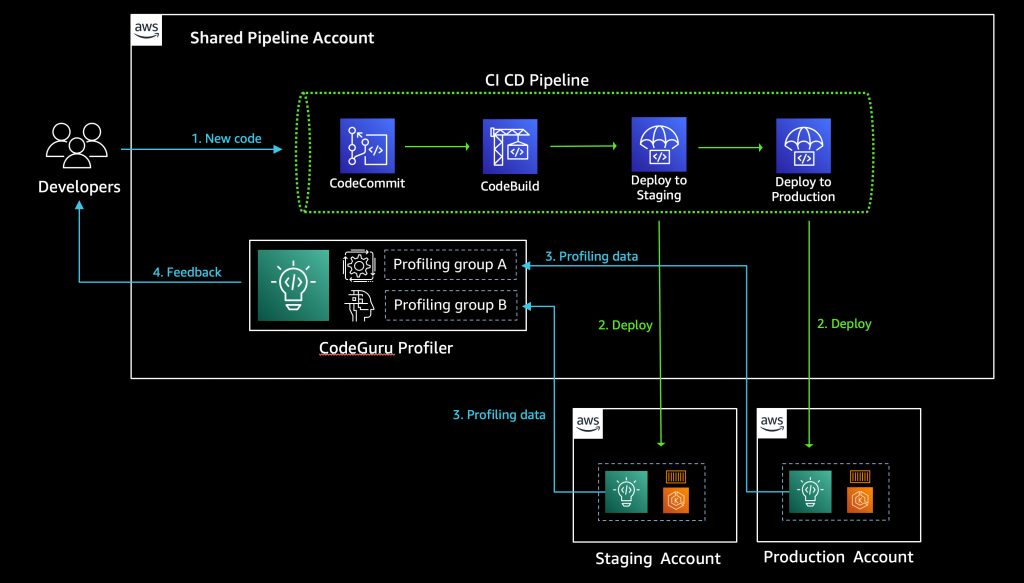

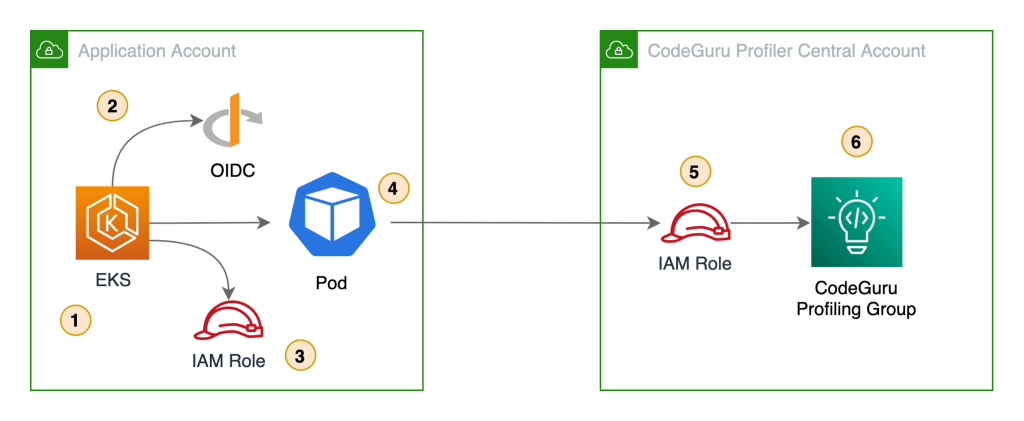

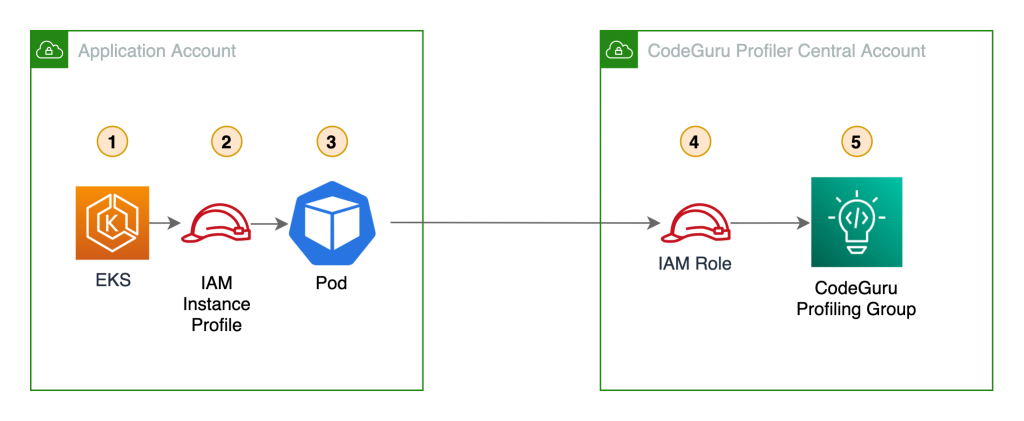

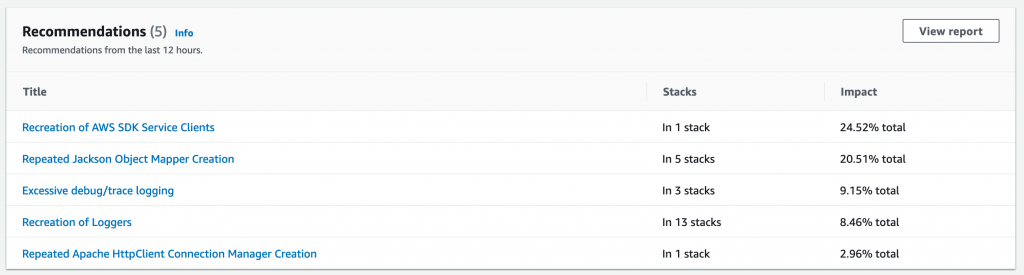

As a best practice, AWS recommends a multi-account strategy for your cloud environment. See Benefits of using multiple AWS accounts for additional details. In this scenario, your CI/CD pipeline deploys your application on the multiple software development lifecycle (SDLC) and production accounts. As a consequence, you’d have one CodeGuru Profiler dashboard per account, which makes it hard for developers to analyze the applications’ performance. This post shows you how to configure CodeGuru Profiler to collect multiple applications’ profiling data into a central account and review the applications’ performance data on one dashboard.

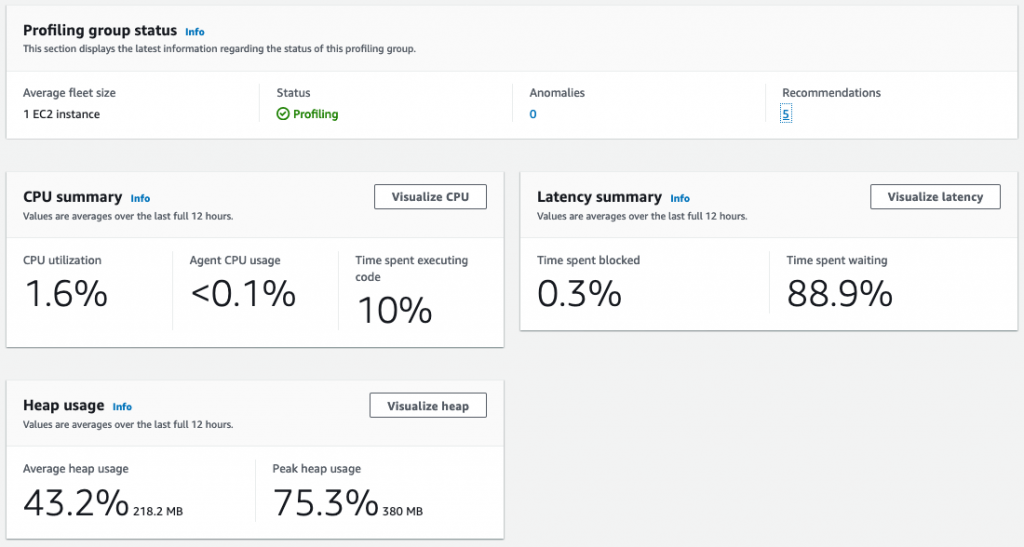

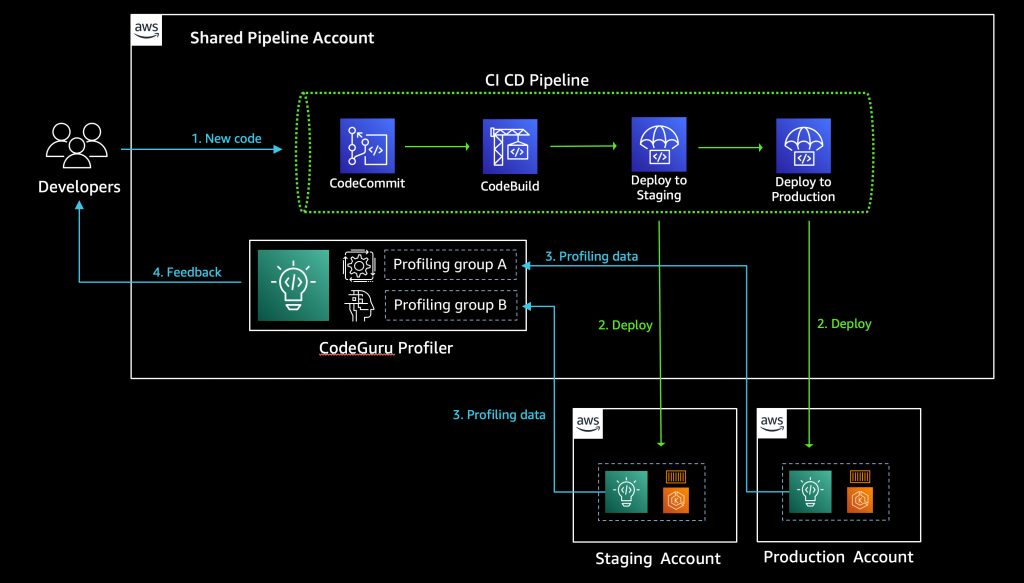

See the diagram below for a typical CI/CD pipeline in a multi-account environment, with a central CodeGuru Profiler dashboard:

Solution overview

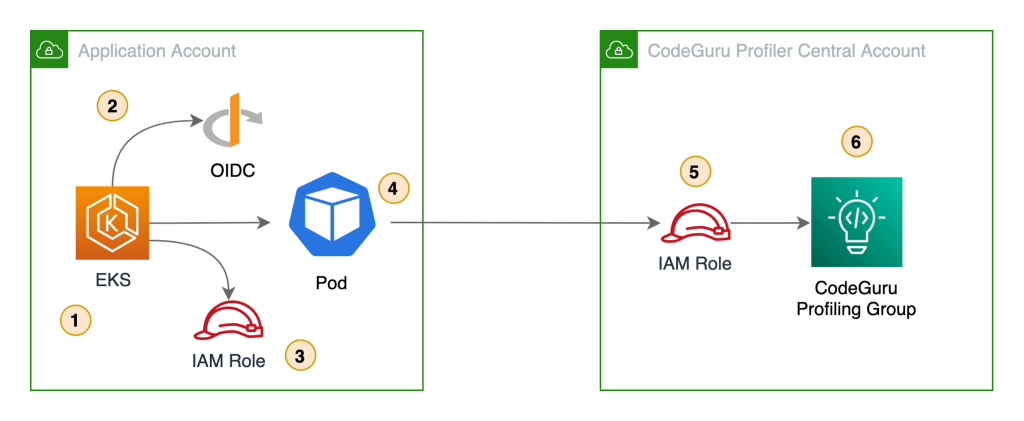

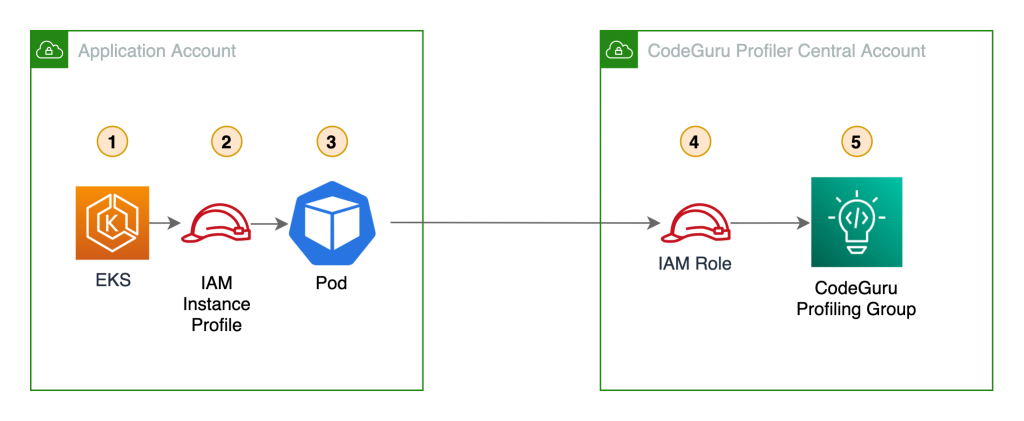

The benefit of sending CodeGuru profiling data to a central AWS account within the same Region is that it gives you a single pane of glass to review all of CodeGuru Profiler’s findings and recommendations in one place. At the time of this writing, we don’t recommend sending CodeGuru profiling data across Regions. Additionally, we show you how to configure the AWS Identity and Access Management (IAM) roles to assume a cross-account role when running pods on Amazon Elastic Kubernetes Service (Amazon EKS) with OpenID Connect (OIDC), as well as how to configure IAM roles to allow access when using other resources, such as Amazon Elastic Compute Cloud (Amazon EC2). In this solution, we discuss how to enable the CodeGuru agent within your code, but it’s also possible to enable the agent with no code changes. For more information, see Enable the agent from the command line.

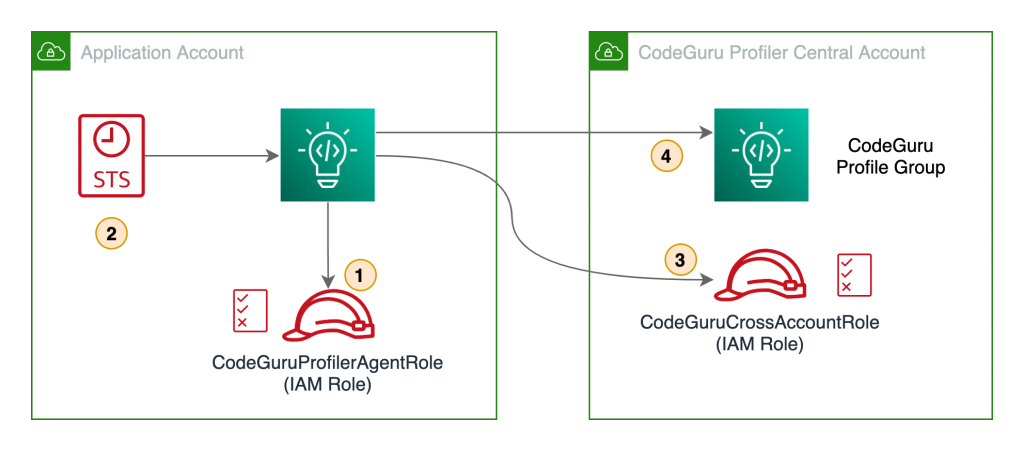

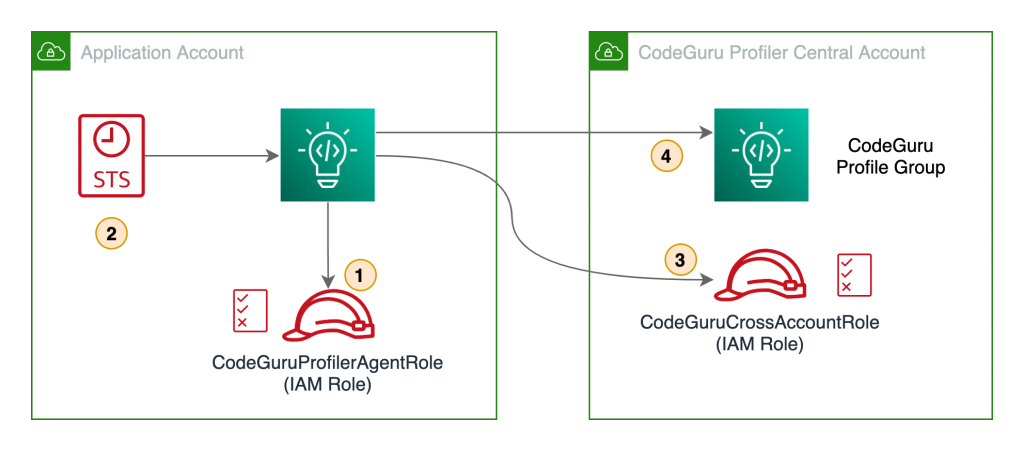

The following diagram illustrates our architecture.

Our solution has the following components:

- The application in the application account assumes the CodeGuruProfilerAgentRole IAM role. This IAM role has a policy that allows the application to assume the role in the CodeGuru Profiler central account.

- AWS Security Token Service (AWS STS) returns temporary credentials required to assume the IAM role in the central account.

- The application assumes the CodeGuruCrossAccountRole IAM role in the central account.

- The application using the CodeGuruCrossAccountRole role sends CodeGuru Profiler data to the central account.
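The credential exchange in the steps above can be sketched in Python as follows. This is a minimal sketch, not the agent configuration this post uses; it assumes an AWS STS client such as `boto3.client("sts")` is passed in, and the function names, session name, and account ID are hypothetical.

```python
def central_role_arn(central_account_id: str,
                     role_name: str = "CodeGuruCrossAccountRole") -> str:
    """Build the ARN of the cross-account role in the central account."""
    return f"arn:aws:iam::{central_account_id}:role/{role_name}"


def assume_central_role(sts_client, central_account_id: str,
                        session_name: str = "codeguru-profiler") -> dict:
    """Exchange the application's credentials for temporary central-account ones."""
    response = sts_client.assume_role(
        RoleArn=central_role_arn(central_account_id),
        RoleSessionName=session_name,
    )
    creds = response["Credentials"]
    # Keys are named so the result can be unpacked into boto3.Session(**result).
    return {
        "aws_access_key_id": creds["AccessKeyId"],
        "aws_secret_access_key": creds["SecretAccessKey"],
        "aws_session_token": creds["SessionToken"],
    }
```

A session built from these temporary credentials acts as the cross-account role, so any profiling data sent through it lands in the central account.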

Prerequisites

Before getting started, make sure you meet the following requirements:

Two AWS accounts:

Central account – Where the single pane of glass to review all of CodeGuru Profiler’s findings and recommendations resides. The applications from all the other AWS accounts send profiling data to CodeGuru Profiler in the central account.

Application account – The dedicated account where the profiled application resides.

Install and authenticate the AWS Command Line Interface (AWS CLI). You can authenticate with an IAM user or AWS STS token.

If you’re using Amazon EKS, you need the following installed:

kubectl – The Kubernetes command line tool.

eksctl – The Amazon EKS command line tool.

Walkthrough overview

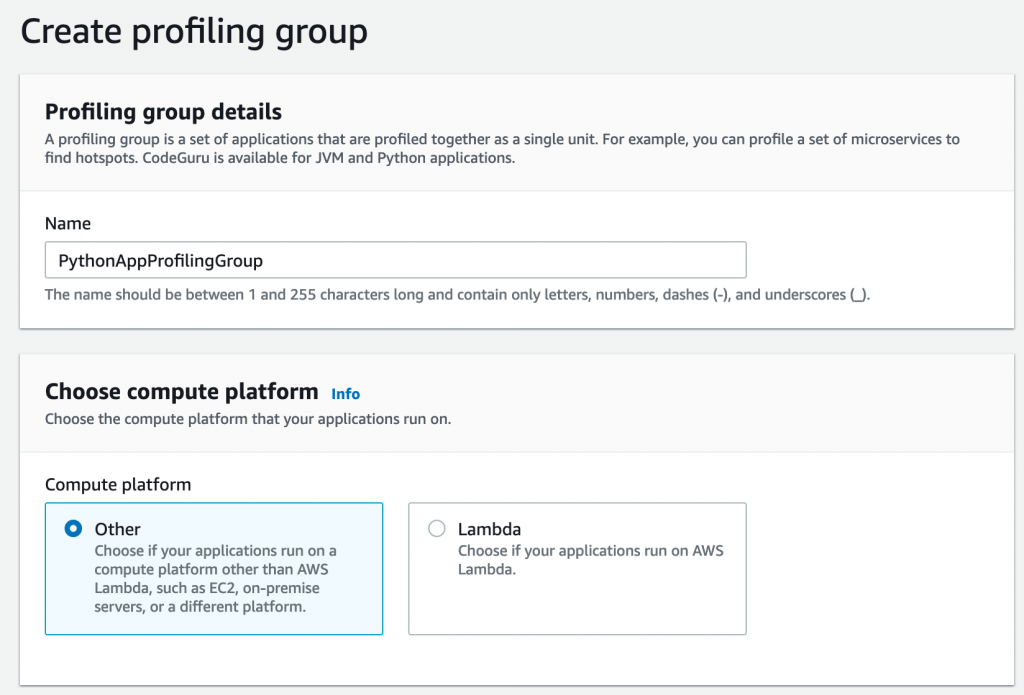

At the time of this writing, CodeGuru supports two programming languages: Python and Java. Each language has a different configuration for sending CodeGuru profiling data to a different AWS account.

Let’s consider a use case where we have two applications that we want to configure to send CodeGuru profiling data to a centralized account. The first application is running Python 3.x and the other application is running Java on JRE 1.8. We need to configure two IAM roles, one in the application account and one in the central account.

We complete the following steps:

- Configure the IAM roles and policies.

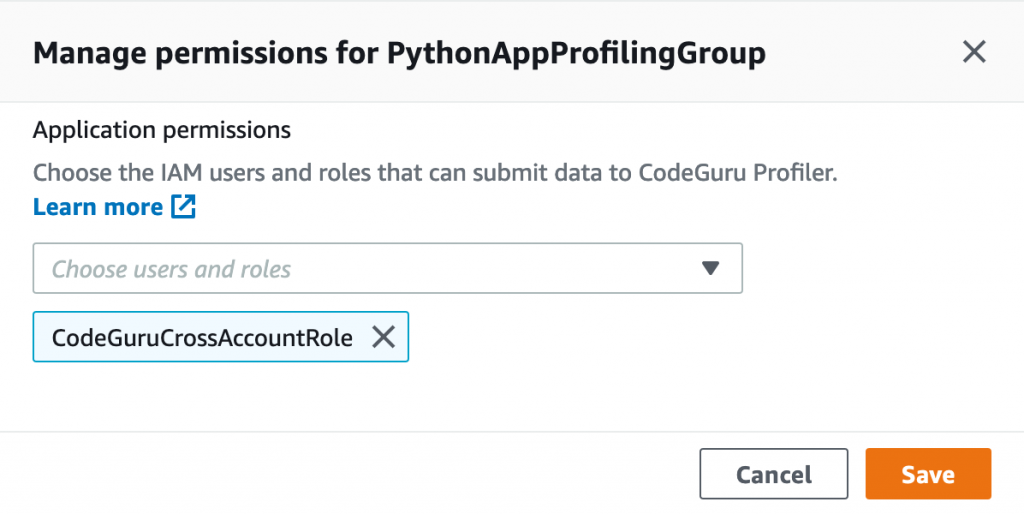

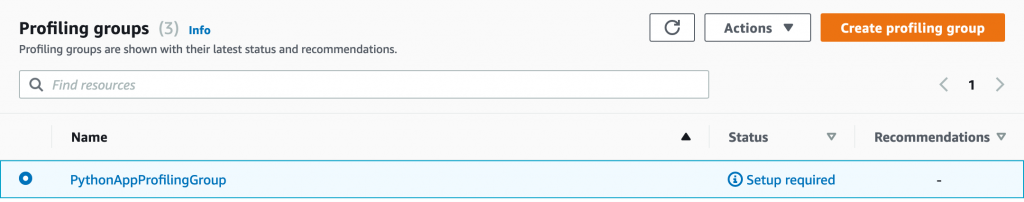

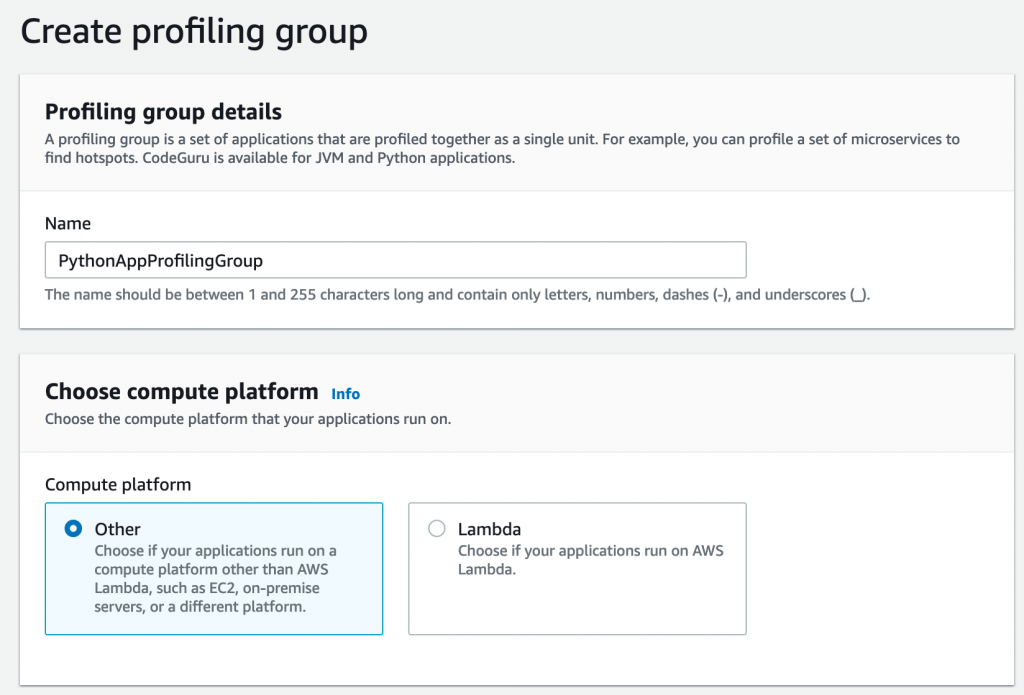

- Configure the CodeGuru profiling groups.

- Configure your Java application to send profiling data to the central account.

- Configure your Python application to send profiling data to the central account.

- Configure IAM with Amazon EKS.

- Review findings and recommendations on the CodeGuru Profiler dashboard.

Configuring IAM roles and policies

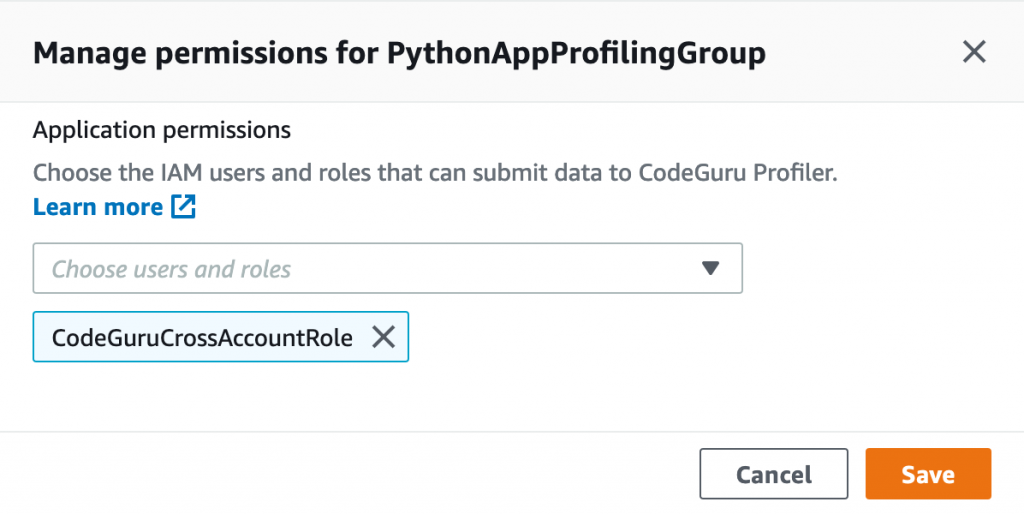

To allow a CodeGuru Profiler agent on the application account to send profiling information to CodeGuru Profiler on the central account, we need to create a cross-account IAM role in the central account that the CodeGuru Profiler agent can assume. We also need to attach a policy with the necessary privileges to the cross-account role, and configure a role in the application account to allow assuming the cross-account role in the central account.
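The three policy documents this paragraph implies can be sketched in Python as plain dictionaries. This is an illustrative sketch rather than the exact policies from the walkthrough: the function names and account IDs are hypothetical, the role names come from the architecture described earlier, and the `"*"` resource is a placeholder that you would normally narrow to specific profiling groups.

```python
import json


def cross_account_trust_policy(app_account_id: str,
                               agent_role: str = "CodeGuruProfilerAgentRole") -> dict:
    """Trust policy for the central-account role: who may assume it."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"AWS": f"arn:aws:iam::{app_account_id}:role/{agent_role}"},
            "Action": "sts:AssumeRole",
        }],
    }


def profiler_agent_policy() -> dict:
    """Permissions for the central-account role: what the agent may do."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Action": [
                "codeguru-profiler:ConfigureAgent",
                "codeguru-profiler:PostAgentProfile",
            ],
            "Resource": "*",  # placeholder; restrict to your profiling groups
        }],
    }


def app_assume_role_policy(central_account_id: str,
                           cross_role: str = "CodeGuruCrossAccountRole") -> dict:
    """Policy for the application-account role: permission to assume the central role."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Action": "sts:AssumeRole",
            "Resource": f"arn:aws:iam::{central_account_id}:role/{cross_role}",
        }],
    }


if __name__ == "__main__":
    # Hypothetical application account ID, used only for illustration.
    print(json.dumps(cross_account_trust_policy("444455556666"), indent=2))
```

Both halves are required: the trust policy in the central account names the caller, and the policy in the application account lets that caller request the role.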