The contents of this page are copied directly from AWS blog sites to make them Kindle friendly. Some styles and sections from these pages have been removed so that the page renders properly in the 'Article Mode' of the Kindle e-Reader browser. All the contents of this page are the property of AWS.

Top Announcements of AWS re:Invent 2021

=======================

Welcome to AWS re:Invent! Below are some of the most noteworthy launches from our biggest event of the year. AWS Chief Evangelist Jeff Barr and our team of AWS developer advocates from around the globe share their insights and offer helpful tips for getting started with some of their favorite new AWS releases.

With so much amazing building going on at AWS, we simply can’t cover it all here. Don’t miss the Previews section at the end of this post for brief overviews of additional noteworthy launches mentioned in the keynotes, and be sure to check out these additional resources, too, to learn more:

What’s New: All the top re:Invent and 2021 AWS announcements

The Official AWS Podcast: Keynote recaps each day and more

AWS OnAir: Livestreaming from the show floor

(This post was last updated: 12:43 p.m. PST, Dec. 2, 2021.)

Quick category links:

Analytics | Application Integration | Architecture | Artificial Intelligence / Machine Learning | AWS Marketplace | Compute | Database | Developer Tools | Internet of Things (IoT) | Management Tools | Messaging | Migration & Transfer Services | Networking & Content Delivery | Robotics | Security | Storage | Quantum Technologies

Analytics

Introducing Amazon Redshift Serverless – Run Analytics At Any Scale Without Having to Manage Data Warehouse Infrastructure

New capability makes it super easy to run analytics in the cloud with high performance at any scale. Just load your data and start querying with no need to set up and manage clusters.

Amazon Kinesis Data Streams On-Demand – Stream Data at Scale Without Managing Capacity

This new capacity mode eliminates the need to provision and manage capacity for streaming data. Kinesis Data Streams On-Demand automatically scales capacity in response to varying data traffic.

AWS Lake Formation – General Availability of Cell-Level Security and Governed Tables with Automatic Compaction

AWS Lake Formation makes it easy to set up a secure data lake in days instead of weeks or months; newly released features further simplify loading data, optimizing storage, and managing access to a data lake.

Announcing AWS Data Exchange for APIs: Find, Subscribe to, and Use Third-party APIs with Consistent Authentication

New capability simplifies the lives of developers and IT administrators who have to integrate and secure the access to multiple third-party APIs.

Application Integration

New – Use Amazon S3 Event Notifications with Amazon EventBridge

Today we are making it even easier for you to use EventBridge to build applications that react quickly and efficiently to changes in your S3 objects. This is a new, “directly wired” model that is faster, more reliable, and more developer-friendly than ever.

Architecture

New – Sustainability Pillar for AWS Well-Architected Framework

The Sustainability Pillar contains questions aimed at evaluating the design, architecture, and implementation of your workloads to reduce their energy consumption and improve their efficiency.

Announcing AWS Well-Architected Custom Lenses: Extend the Well-Architected Framework with Your Internal Best Practices

Custom Lenses provide a consolidated view and a consistent way to measure and improve your workloads on AWS without relying on external spreadsheets or third-party systems.

Artificial Intelligence / Machine Learning

New AWS Scholarship Program Helps Underrepresented and Underserved Students Prep for Careers in AI and ML

The AWS AI & ML Scholarship program is launching as part of the all-new AWS DeepRacer Student service and Student League.

Now in Preview – Amazon SageMaker Studio Lab, a Free Service to Learn and Experiment with ML

We’re launching a free service that enables anyone to learn and experiment with ML without needing an AWS account, credit card, or cloud configuration knowledge.

Announcing Amazon SageMaker Inference Recommender

This brand-new Amazon SageMaker Studio capability automates load testing and optimizes model performance across machine learning (ML) instances.

New – Introducing SageMaker Training Compiler

New capability automatically compiles your Python training code and generates GPU kernels specifically for your model. The result? The training code will use less memory and compute, and train faster.

New – Create and Manage EMR Clusters and Spark Jobs with Amazon SageMaker Studio

With the ability to connect to and manage EMR clusters from within SageMaker Studio, data scientists no longer have to leave their familiar environment to create, configure and provision the EMR clusters where they run their workloads.

Announcing Amazon SageMaker Ground Truth Plus

Ground Truth Plus is a turn-key service that uses an expert workforce to deliver high-quality training datasets fast, and reduces costs by up to 40 percent.

New – Amazon DevOps Guru for RDS to Detect, Diagnose, and Resolve Amazon Aurora-Related Issues using ML

Now developers will have enough information to determine the exact cause for a database performance issue in Amazon Aurora, saving many hours of work trying to uncover and remediate the problems.

Announcing Amazon SageMaker Canvas – a Visual, No Code Machine Learning Capability for Business Analysts

Now business analysts can build machine learning models and generate accurate business predictions without writing code or requiring ML expertise.

Amazon CodeGuru Reviewer Introduces Secrets Detector to Identify Hardcoded Secrets and Secure Them with AWS Secrets Manager

The new automated tool helps developers detect secrets in source code or configuration files, such as passwords, API keys, SSH keys, and access tokens.

AWS Marketplace

New – AWS Marketplace for Containers Anywhere Lets You Deploy Your Kubernetes Cluster in Any Environment

New set of capabilities allows customers to find, subscribe to, and deploy third-party Kubernetes applications from AWS Marketplace on any Kubernetes cluster in any environment. This makes the AWS Marketplace more useful for customers who run containerized workloads.

Compute

Use New Amazon EC2 M1 Mac Instances to Build and Test Apps for iPhone, iPad, Mac, Apple Watch, and Apple TV

The availability (in preview) of EC2 M1 Mac instances lets you access machines built around the Apple-designed M1 System on Chip. If you are a Mac developer and re-architecting your apps to natively support Macs with Apple silicon, you may now build and test your apps and take advantage of all the benefits of AWS.

New Storage-Optimized Amazon EC2 Instances (Im4gn and Is4gen) Powered by AWS Graviton2 Processors

Introducing the two newest families of storage-optimized instances, Im4gn and Is4gen, powered by Graviton2 processors. Both instances offer up to 30 TB of NVMe storage using AWS Nitro SSD devices that are custom-built by AWS.

New – AWS Outposts Servers in Two Form Factors

We are launching three AWS Outposts servers, all powered by AWS Nitro System and with your choice of x86 or Arm/Graviton2 processors.

Join the Preview – Amazon EC2 C7g Instances Powered by New AWS Graviton3 Processors

These instances are going to be a great match for your compute-intensive workloads: HPC, batch processing, electronic design automation (EDA), media encoding, scientific modeling, ad serving, distributed analytics, and CPU-based machine learning inferencing.

New – Amazon EC2 M6a Instances Powered By 3rd Gen AMD EPYC Processors

Amazon EC2 M6a instances feature the 3rd Gen AMD EPYC processors, running at frequencies up to 3.6 GHz to offer up to 35 percent better price performance versus the previous generation M5a instances.

Announcing Pull Through Cache Repositories for Amazon Elastic Container Registry

Pull through cache repositories offer developers the improved performance, security, and availability of Amazon Elastic Container Registry for container images that they source from public registries.

New – Amazon EC2 G5g Instances Powered by AWS Graviton2 Processors and NVIDIA T4G Tensor Core GPUs

The general availability of Amazon EC2 G5g instances extends Graviton2 price-performance benefits to GPU-based workloads including graphics applications and machine learning inference.

Introducing Karpenter – An Open-Source High-Performance Kubernetes Cluster Autoscaler

New open-source project helps improve your application availability and cluster efficiency by rapidly launching right-sized compute resources in response to changing application loads.

Database

New DynamoDB Table Class – Save Up To 60% in Your DynamoDB Costs

DynamoDB Standard-IA table class is designed for customers who want a cost-optimized solution for storing infrequently accessed data in DynamoDB without changing any application code.

New – Amazon RDS Custom for SQL Server Is Generally Available

This launch supports applications that have dependencies on specific configurations and third-party applications that require customizations in corporate, e-commerce, and content management systems, such as Microsoft SharePoint.

Developer Tools

AWS re:Post – A Reimagined Q&A Experience for the AWS Community

AWS re:Post is an AWS-managed Q&A service offering crowd-sourced, expert-reviewed answers to your technical questions about AWS that replaces the original AWS Forums.

Announcing General Availability of Construct Hub and AWS Cloud Development Kit Version 2

The AWS CDK is an open-source framework that simplifies working with cloud resources using familiar programming languages: C#, TypeScript, Java, Python, and Go (in developer preview).

Internet of Things (IoT)

New – FreeRTOS Extended Maintenance Plan for Up to 10 Years

FreeRTOS Extended Maintenance Plan allows embedded developers to receive critical bug fixes and security patches on their chosen FreeRTOS LTS version for up to 10 years beyond the expiration of the initial LTS period.

New – Securely Manage Your AWS IoT Greengrass Edge Devices Using AWS Systems Manager

Until today, IT administrators have had to build or integrate custom tools to make sure edge devices can be managed alongside EC2 and on-prem instances, through a consistent set of policies. Today, we have integrated AWS IoT Greengrass and AWS Systems Manager to simplify this process.

Management Tools

New for AWS Control Tower – Region Deny and Guardrails to Help You Meet Data Residency Requirements

Preventive and detective controls will prevent provisioning resources in unwanted AWS Regions by restricting access to AWS APIs through service control policies (SCPs) built and managed by AWS Control Tower.

New – AWS Control Tower Account Factory for Terraform

AFT is a new Terraform module maintained by the AWS Control Tower team that allows you to provision and customize AWS accounts through Terraform using a deployment pipeline.

New for AWS Compute Optimizer – Resource Efficiency Metrics to Estimate Savings Opportunities and Performance Risks

By applying the knowledge drawn from Amazon’s experience running diverse workloads in the cloud, AWS Compute Optimizer identifies workload patterns and recommends optimal AWS resources. Now, it also delivers resource efficiency metrics alongside its recommendations to help you assess how efficiently you are using AWS resources.

New for AWS Compute Optimizer – Enhanced Infrastructure Metrics to Extend the Look-Back Period to Three Months

AWS Compute Optimizer also now supports recommendation preferences where you can opt in or out of features that enhance resource-specific recommendations.

New – Real-User Monitoring for Amazon CloudWatch

Amazon CloudWatch helps you to build web applications that are highly scalable and highly available. The big challenge we are addressing today is monitoring those applications with the goal of understanding performance and providing an optimal experience for your end users.

New – Amazon CloudWatch Evidently – Experiments and Feature Management

This new Amazon CloudWatch capability makes it easy for developers to introduce experiments and feature management in their application code. CloudWatch Evidently may be used for two similar but distinct use-cases: implementing dark launches, also known as feature flags, and A/B testing.

Messaging

Machine Learning-Powered Amazon Connect, Now With Call Summarization

Amazon Connect adds a new capability that helps you improve customer experience and agent and supervisor productivity by automatically summarizing the important aspects of each customer call.

New – Enhanced Dead-letter Queue Management Experience for Amazon SQS Standard Queues

New functionality helps you focus on the important phase of your error handling workflow, which consists of identifying and resolving processing errors.

Migration & Transfer Services

Preview – AWS Migration Hub Refactor Spaces Helps to Incrementally Refactor Your Applications

A new capability of AWS Migration Hub lets you refactor existing applications into distributed applications, typically based on microservices.

Networking & Content Delivery

New – Site-to-Site Connectivity with AWS Direct Connect SiteLink

You no longer need to connect through the closest AWS Region and manage and configure an AWS Transit Gateway for site-to-site network connectivity.

Network Address Management and Auditing at Scale with Amazon VPC IP Address Manager

New feature provides an automated IP management workflow making it easier to organize, assign, monitor, and audit IP addresses in at-scale networks.

New – Amazon VPC Network Access Analyzer

In contrast to manual checking of network configurations, which is error-prone and hard to scale, this tool lets you analyze your AWS networks of any size and complexity.

Robotics

Preview – AWS IoT RoboRunner for Building Robot Fleet Management Applications

AWS IoT RoboRunner is a new robotics service that makes it easier for enterprises to build and deploy applications that help fleets of robots work seamlessly together.

Security

AWS Shield Advanced Update – Automatic Application Layer DDoS Mitigation

New feature automatically creates, tests, and deploys AWS WAF rules to mitigate layer 7 DDoS events on behalf of customers.

Amazon CodeGuru Reviewer Introduces Secrets Detector to Identify Hardcoded Secrets and Secure Them with AWS Secrets Manager

The new Amazon CodeGuru Reviewer Secrets Detector is an automated tool that helps developers detect secrets in source code or configuration files, such as passwords, API keys, SSH keys, and access tokens.

Improved, Automated Vulnerability Management for Cloud Workloads with a New Amazon Inspector

Amazon Inspector is a service used by organizations of all sizes to automate security assessment and management at scale. This new launch addresses the need for frictionless deployment at scale, support for an expanded set of resource types needing assessment, and the critical need to detect and remediate at speed.

Storage

Enhanced Amazon S3 Integration for Amazon FSx for Lustre

New capabilities feature a full bi-directional synchronization of your file systems with Amazon S3 and the ability to synchronize your file systems with multiple S3 buckets or prefixes.

New – Offline Tape Migration Using AWS Snowball Edge

Now you can get rid of your large and expensive storage facility, send your tape robots out to pasture, and eliminate all of the time and effort involved in moving archived data to new formats and mediums every few years.

Preview – AWS Backup Adds Support for Amazon S3

New capability lets you centrally manage your application backups, easily restore your data, and improve backup compliance.

Amazon S3 Glacier is the Best Place to Archive Your Data – Introducing the S3 Glacier Instant Retrieval Storage Class

This new archive storage class delivers the lowest cost storage for long-lived data that is rarely accessed and requires millisecond retrieval.

New – Simplify Access Management for Data Stored in Amazon S3

A new Amazon S3 Object Ownership setting lets you disable access control lists and the Amazon S3 console policy editor now reports security warnings, errors, and suggestions powered by IAM Access Analyzer.

New for AWS Backup – Support for VMware and VMware Cloud on AWS

This new capability lets you centralize and automate data protection of virtual machines running on VMware environments.

New – Amazon FSx for OpenZFS

This new addition to the FSx family lets you use a popular file system without having to deal with hardware provisioning, software configuration, patching, backups, etc., all without having to develop the specialized expertise to set up and administer OpenZFS.

AWS Nitro SSD – High Performance Storage for your I/O-Intensive Applications

The second generation of AWS Nitro SSDs was designed to avoid latency spikes and deliver great I/O performance on real-world workloads.

New – Recycle Bin for EBS Snapshots

It’s easy to create EBS Snapshots, and just as easy to delete them – sometimes too easy. In order to give you more control, we are launching a Recycle Bin that lets you set up rules to retain deleted snapshots so that you can recover them after an accidental deletion.

New – Amazon EBS Snapshots Archive

EBS is an easy-to-use high-performance block storage service for your Amazon EC2 instances. An EBS volume mounted to your EC2 instances lets you boot an operating system and store data for your most performance-demanding workloads.

Quantum Technologies

Introducing Amazon Braket Hybrid Jobs – Set Up, Monitor, and Efficiently Run Hybrid Quantum-Classical Workloads

With the new Amazon Braket Hybrid Jobs you can avoid extensive infrastructure and software management and confidently execute your algorithms quickly and predictably, with on-demand priority access to QPUs.

What’s New: re:Invent Previews

Here’s a sneak peek at a few more of the noteworthy launches that have been announced at re:Invent. Be sure to keep an eye on the AWS News Blog for updates in the future.

Introducing AWS Cloud WAN (Preview)

Introducing AWS Amplify Studio (Preview)

Introducing Amazon Lex Automated Chatbot Designer (Preview)

Introducing Amazon SageMaker Serverless Inference (Preview)

Introducing AWS DMS Fleet Advisor for automated discovery and analysis of database and analytics workloads (Preview)

Announcing AWS IoT TwinMaker, a Service That Makes it Easier to Build Digital Twins (Preview)

Announcing AWS IoT FleetWise, a New Service for Transferring Vehicle Data to the Cloud More Efficiently (Preview)

Introducing Amazon EMR Serverless (Preview)

Introducing Amazon MSK Serverless (Preview)

Introducing AWS Mainframe Modernization (Preview)

Announcing Amazon EC2 Trn1 Instances (Preview)

Announcing AWS Private 5G (Preview)

AWS Chatbot now supports management of AWS resources in Slack (Preview)

Introducing AWS Migration Hub Refactor Spaces (Preview)

Introducing Amazon CloudWatch Metrics Insights (Preview)

Now Open – AWS Asia Pacific (Jakarta) Region

=======================

The AWS Region in Jakarta, Indonesia, is now open and you can start using it today. The official name is Asia Pacific (Jakarta) and the API name is ap-southeast-3. The AWS Asia Pacific (Jakarta) Region is the tenth active AWS Region in Asia Pacific and mainland China, along with Beijing, Hong Kong, Mumbai, Ningxia, Osaka, Seoul, Singapore, Sydney, and Tokyo. With this launch, AWS now spans 84 Availability Zones within 26 geographic regions around the world. We have also announced plans for 24 more Availability Zones and eight more AWS Regions in Australia, Canada, India, Israel, New Zealand, Spain, Switzerland, and the United Arab Emirates.

Instances and Services

Applications running in this 3-AZ region can use C5, C5d, I3, I3en, M5, M5d, R5, R5d, and T3 instances, and can use a long list of AWS services including Amazon API Gateway, Application Auto Scaling, AWS Certificate Manager (ACM), AWS CloudFormation, Amazon CloudFront, AWS CloudTrail, Amazon CloudWatch, CloudWatch Events, Amazon CloudWatch Logs, AWS CodeDeploy, AWS Config, AWS Database Migration Service, AWS Direct Connect, Amazon DynamoDB, EC2 Auto Scaling, Amazon Elastic Block Store (EBS), Amazon Elastic Compute Cloud (Amazon EC2), Amazon Elastic Container Registry, Amazon Elastic Container Service (Amazon ECS), Elastic Load Balancing (Classic, Network, and Application), Amazon EMR, Amazon ElastiCache, Amazon OpenSearch Service, Amazon Glacier, AWS Identity and Access Management (IAM), Amazon Kinesis Data Streams, AWS Key Management Service (KMS), AWS Lambda, AWS Marketplace, AWS Organizations, AWS Personal Health Dashboard, Amazon Redshift, Amazon Relational Database Service (RDS), Amazon Aurora, Amazon Route 53 (including Private DNS for VPCs), Amazon Simple Notification Service (SNS), Amazon Simple Queue Service (SQS), Amazon Simple Storage Service (Amazon S3), Amazon Simple Workflow Service (SWF), AWS Step Functions, AWS Support API, AWS Systems Manager, AWS Trusted Advisor, Amazon Virtual Private Cloud (VPC), and VM Import/Export.

Using the Asia Pacific (Jakarta) Region

As is the case with all of the newer AWS Regions, you need to explicitly enable this one in order to be able to create and manage resources within it. To learn how to do this, read Using the Asia Pacific (Hong Kong) Region in my post, Now Open – AWS Asia Pacific (Hong Kong) Region.
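As a rough illustration of the opt-in requirement, the sketch below lists which Regions in an account still need to be enabled. It assumes a response shaped like the one returned by the EC2 DescribeRegions API when called with AllRegions=True, where each entry carries an OptInStatus field. The helper names (`regions_needing_opt_in`, `fetch_all_regions`) and the sample data are hypothetical and written by hand for this example; they are not output from a real account.

```python
def regions_needing_opt_in(describe_regions_response):
    """Return the names of Regions that exist but are not enabled for the account."""
    return sorted(
        region["RegionName"]
        for region in describe_regions_response["Regions"]
        if region.get("OptInStatus") == "not-opted-in"
    )


def fetch_all_regions():
    """Call EC2 DescribeRegions, including Regions that are not yet enabled.

    Needs boto3 and AWS credentials, so the import is done lazily and this
    function is not called in the sample run below.
    """
    import boto3

    ec2 = boto3.client("ec2", region_name="us-east-1")
    return ec2.describe_regions(AllRegions=True)


# Hand-written sample in the shape of a DescribeRegions response (illustrative only).
sample_response = {
    "Regions": [
        {"RegionName": "ap-southeast-1", "OptInStatus": "opt-in-not-required"},
        {"RegionName": "ap-southeast-3", "OptInStatus": "not-opted-in"},
        {"RegionName": "ap-east-1", "OptInStatus": "opted-in"},
    ]
}

print(regions_needing_opt_in(sample_response))  # ['ap-southeast-3']
```

With credentials configured, `regions_needing_opt_in(fetch_all_regions())` would run the same check against a real account. Enabling the Region itself is still done as described in the Hong Kong post.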

Connectivity, Edge Locations, and Latency

Jakarta is already home to an Amazon CloudFront edge location that was opened earlier this year, along with two brand-new AWS Direct Connect locations. In addition to this in-country infrastructure, there are more than sixty other edge locations and multiple regional edge caches in Asia, as detailed on the AWS Global Infrastructure page.

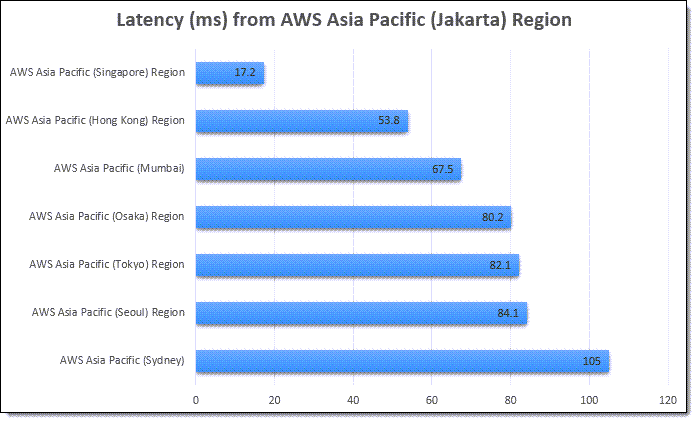

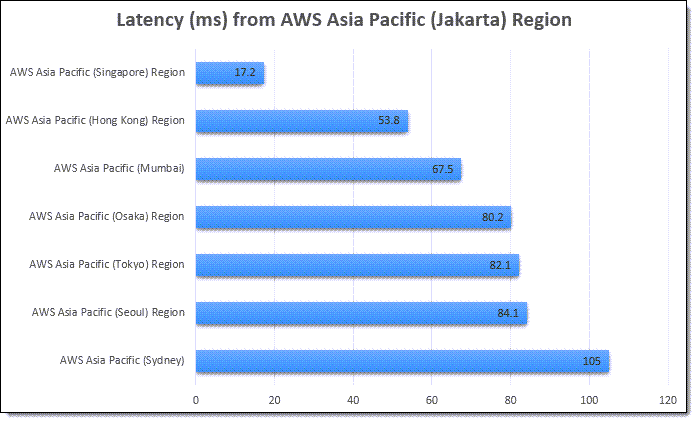

The region offers low-latency connections to other AWS regions in the area. Here are the latest numbers:

Many AWS services give you options to replicate your data across multiple AWS regions. You can replicate S3 buckets to multiple destinations (and use Multi-Region Access Points so your users access the closest one), copy EC2 AMIs between regions, set up cross-region Amazon Aurora Read Replicas, replicate container images, and more. You can set up Amazon DynamoDB Global Tables that span any desired regions, and you can set up inter-region VPC peering. To learn more about how to build applications that span regions, be sure to check out our Multi-Region Application Architecture solution.

AWS in Indonesia

With this launch we are making a long-term commitment to growing our business in Indonesia, and expect to create an average of 24,700 jobs annually over the next 15 years. This includes the direct AWS supply chain (construction, facility maintenance, electricity, and telecommunications) along with the growth that this drives in the broader Indonesian economy.

We have been investing in Southeast Asia and Indonesia for many years. The first AWS office in Jakarta opened in 2018 to help support our customers, and now employs developer advocates, solutions architects, account managers, and partner managers, with hiring for other roles now underway.

Back in 2019 we announced a goal to train and empower hundreds of thousands of Indonesians with proficiency in cloud services by 2025. In collaboration with the Indonesian government and with the help of both AWS partners and educational institutions, we have already trained over 200,000 people. We are doing this through multiple routes and programs including:

Laptops for Builders – This is a free program that teaches high school and vocational students, in Bahasa Indonesia, about cloud fundamentals.

Scholarship Programs – Working closely with tech-education startup Dicoding, we are offering a free scholarship program for up to 100,000 cloud and back-end developers.

AWS Training & Certification – Attendees are gaining new skills and certifications in areas such as AWS Cloud fundamentals, big data, security, and machine learning, with several training options available.

AWS Customers in Indonesia

We have many amazing customers in Indonesia! Here are a few success stories:

Traveloka is a lifestyle superapp with a focus on Indonesia, Thailand, Vietnam, Singapore, Malaysia, the Philippines, and Australia. They offer customers in those countries an end-to-end solution that spans travel, local services, and financial services, all powered by AWS. The company was born in the cloud, and counts on AWS to let them build apps quickly and with high scalability. The Traveloka app has been downloaded over 60 million times, making it the most popular travel and lifestyle booking app in Southeast Asia.

Halodoc is an Indonesian digital health startup. They are currently running a digital reservation program to help Indonesian citizens to book and receive their COVID-19 vaccinations, while also providing the government with easier monitoring and evaluation of the vaccine rollout. During the pandemic, they have also helped to provide testing and telemedicine services, all powered by a digital platform that runs on AWS and that allows them to scale in real-time according to market demand.

Under the national movement of Learning Freedom (“Merdeka Belajar”), the Indonesian government is working to allow students to access educational resources from anywhere and at any time. Simak Online allows 300,000 students from 430 schools across Jakarta to access their learning materials and assignments, complete homework, take exams, and participate in online forum discussions. Previously hosted on-premises, Simak Online moved to AWS shortly before COVID-19 broke out in Indonesia. Before the move, they could support exams at just 50 schools simultaneously. Thanks to AWS, they can now scale up and down as needed and can support the national movement and allow students to learn online and on-demand.

A translated version of this post is available on the AWS Indonesia Blog.

— Jeff;

New – FreeRTOS Extended Maintenance Plan for Up to 10 Years

=======================

At AWS re:Invent 2020, we announced FreeRTOS Long Term Support (LTS), which offers a more stable foundation than standard releases as manufacturers deploy and later update devices in the field. FreeRTOS is an open source, real-time operating system for microcontrollers that makes small, low-power edge devices easy to program, deploy, secure, connect, and manage.

In 2021, FreeRTOS LTS released 202012.01 to include AWS IoT Over-the-Air (OTA) update, AWS IoT Device Defender, and AWS IoT Jobs libraries that provide feature stability, security patches, and critical bug fixes for the next two years.

Today, I am happy to announce FreeRTOS Extended Maintenance Plan (EMP), which allows embedded developers to receive critical bug fixes and security patches on their chosen FreeRTOS LTS version for up to 10 years beyond the expiry of the initial LTS period. FreeRTOS EMP lets developers improve device security (or helps keep devices secure) for years, save on operating system upgrade costs, and reduce the risks associated with patching their devices.

FreeRTOS EMP applies to libraries covered by FreeRTOS LTS. Therefore, developers whose device lifecycles are longer than the LTS period of 2 years can continue using a version that provides feature stability, security patches, and critical bug fixes, all without having to plan a costly version upgrade.

Here are the main features of FreeRTOS EMP:

| Features | Description | Why is it important? |
| --- | --- | --- |
| Feature stability | Get FreeRTOS libraries that maintain the same set of features for years | Save upgrade costs by using a stable FreeRTOS codebase for their product lifecycle |
| API stability | Get FreeRTOS libraries that have stable APIs for years | Save upgrade costs by using a stable FreeRTOS codebase for their product lifecycle |
| Critical fixes | Receive security patches and critical bug* fixes on your chosen FreeRTOS libraries | Security patches help keep their IoT devices secure for the product lifecycle |
| Notification of patches | Receive timely notification of upcoming patches | Timely awareness of security patches helps proactively plan the deployment of patches |
| Flexible subscription plan | Extend maintenance by a year or longer | Continue to renew their annual subscription for a longer period to keep the same version for the entire device lifecycle, or for a shorter period to buy time before upgrading to the latest FreeRTOS version. |

* A critical bug is a defect determined by AWS to impact the functionality of the affected library and has no reasonable workaround.

Getting Started with FreeRTOS EMP

To get started, subscribe to the plan using your AWS account, and renew the subscription annually or for a longer period, either to cover your product lifecycle or until you are ready to transition to a new FreeRTOS LTS release.

Before the end of the current LTS period, you will be able to use your AWS account to complete the FreeRTOS EMP registration on the FreeRTOS console, review and agree to the associated terms and conditions, select the LTS version, and buy an annual subscription. You will then gain access to the private repository where you’ll receive .zip files containing a git repo with chosen libraries, patches, and related notifications.

Under NDA, AWS will notify you via official AWS Security channels of an upcoming patch and its timelines (if AWS is reasonably able to do so and deems it appropriate). Patches will be sent to your private repository within three business days of successfully implementing and getting AWS Security approval for our mitigation.

AWS will provide technical support for FreeRTOS EMP customers via separate subscriptions to AWS Support. AWS Support is not included in FreeRTOS EMP subscriptions. Depending on your AWS Support plan, you can track issues related to AWS accounts, billing, and bugs, or get access to technical experts for issues such as patch integration.

Available Now

FreeRTOS EMP will be available for the current and all previous FreeRTOS LTS releases. Subscriptions can be renewed annually for up to 10 years from the end of the chosen LTS version’s support period. For example, a subscription for FreeRTOS 202012.01 LTS, whose LTS period ends March 2023, may be renewed annually for up to 10 years (i.e., until March 2033).
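The renewal arithmetic in the example above can be sketched in a few lines. This is only an illustration of the date calculation, assuming one year of coverage per annual renewal and the 10-year cap stated in this post; the function name `emp_coverage_end` and the year/month representation are hypothetical and not part of any FreeRTOS or AWS tooling.

```python
MAX_EMP_RENEWALS = 10  # from the post: renewable annually for up to 10 years


def emp_coverage_end(lts_end_year, lts_end_month, renewals):
    """Return (year, month) at which EMP coverage ends after `renewals` annual renewals.

    Coverage starts at the end of the LTS period, and each renewal is assumed
    to add exactly one year.
    """
    if not 0 <= renewals <= MAX_EMP_RENEWALS:
        raise ValueError(f"renewals must be between 0 and {MAX_EMP_RENEWALS}")
    return (lts_end_year + renewals, lts_end_month)


# FreeRTOS 202012.01 LTS: the LTS period ends March 2023.
print(emp_coverage_end(2023, 3, 1))   # (2024, 3)
print(emp_coverage_end(2023, 3, 10))  # (2033, 3)
```

A device maker planning a seven-year product lifecycle on this LTS release would, under the same assumptions, call `emp_coverage_end(2023, 3, 7)` and get March 2030.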

You can find more information on the FreeRTOS feature page. Please send us feedback on the FreeRTOS forum or through AWS Support.

Sign up to get periodic updates on when and how you can subscribe to FreeRTOS EMP.

— Channy

AWS re:Post – A Reimagined Q&A Experience for the AWS Community

=======================

The internet is an excellent resource for well-intentioned guidance and answers. However, it can sometimes be hard to tell if what you’re reading is, in fact, advice you should follow. Also, some users have a preference toward using a single, trusted online community rather than the open internet to provide them with reliable, vetted, and up-to-date answers to their questions.

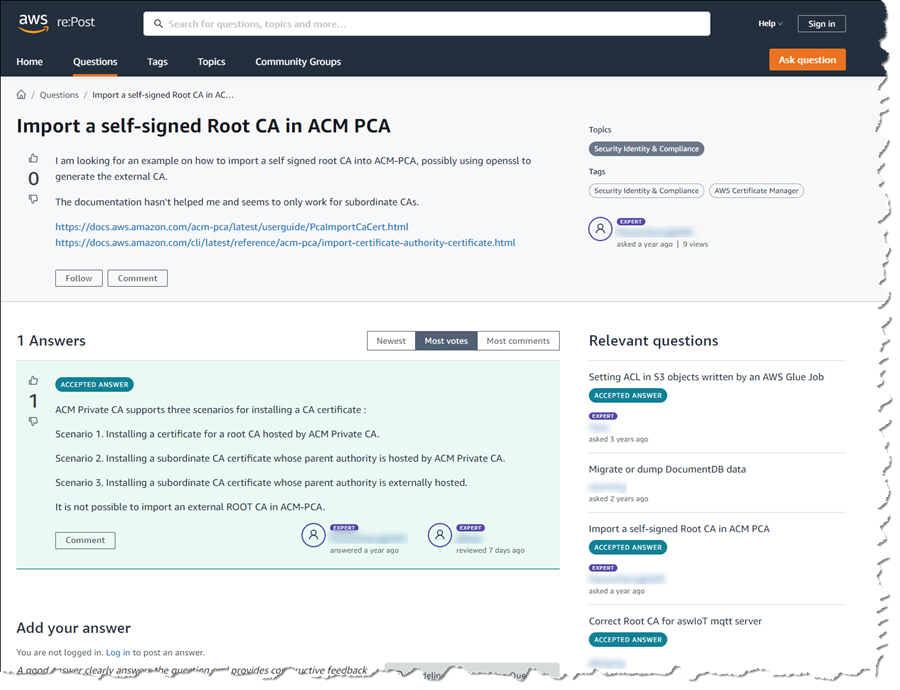

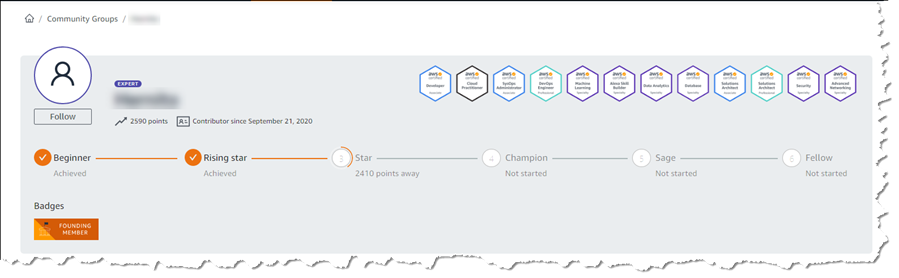

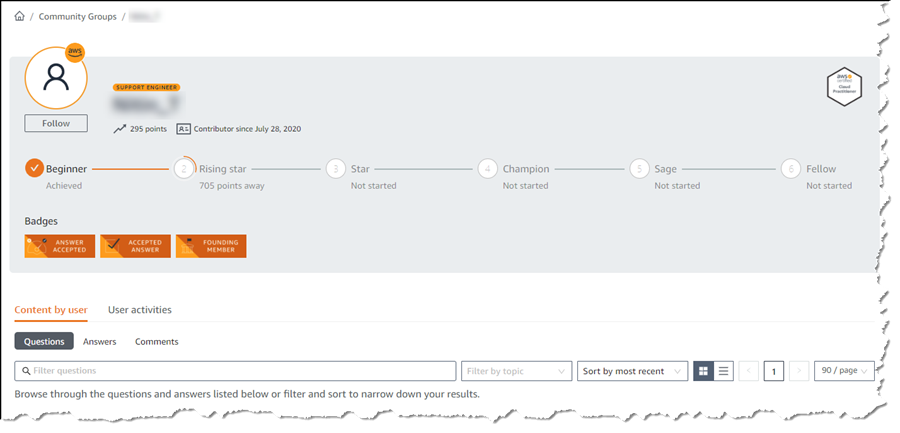

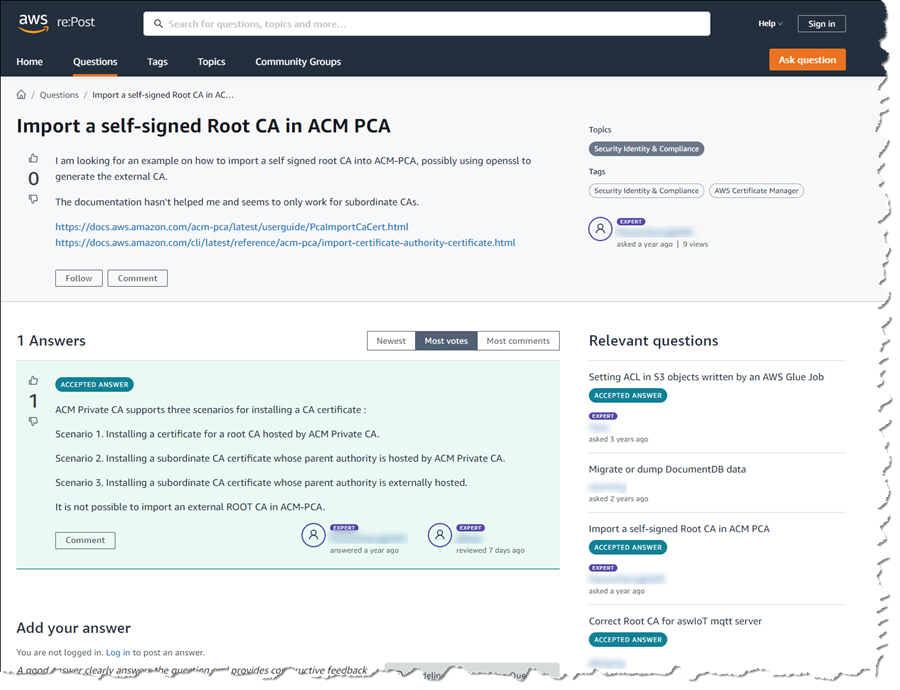

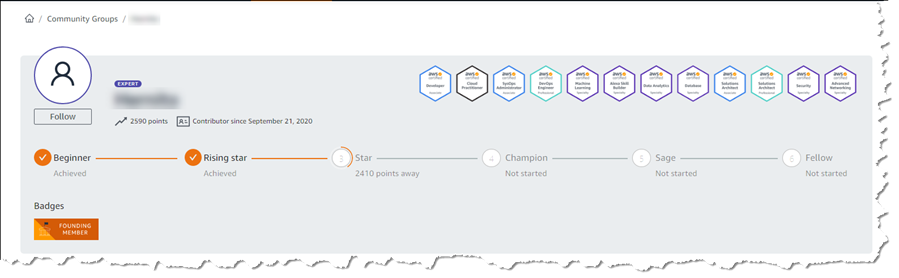

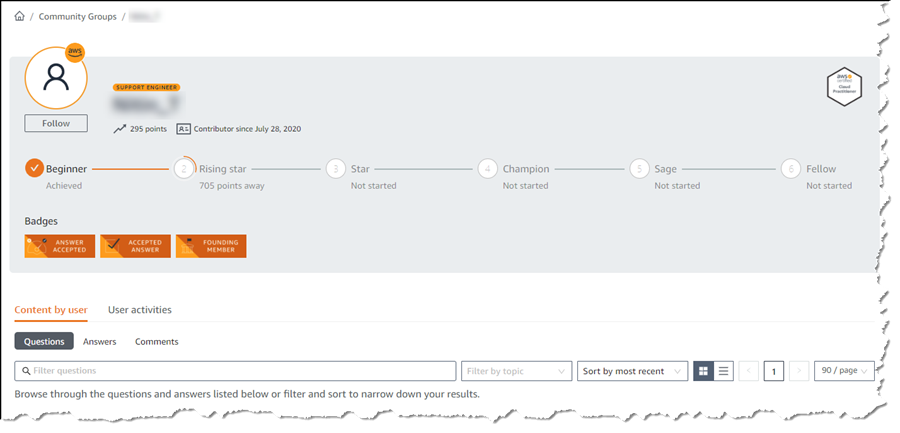

Today, I’m happy to announce AWS re:Post, a new question and answer (Q&A) service, part of the AWS Free Tier, that is driven by the community of AWS customers, partners, and employees. AWS re:Post is an AWS-managed Q&A service that offers crowd-sourced, expert-reviewed answers to your technical questions about AWS, and it replaces the original AWS Forums. Community members can earn reputation points to build up their community expert status by providing accepted answers and reviewing answers from other users, helping to continually expand the availability of public knowledge across all AWS services.

You’ll find AWS re:Post to be an ideal resource when:

You are building an application using AWS, and you have a technical question about an AWS service or best practices.

You are learning about AWS or preparing for an AWS certification, and you have a question on an AWS service.

Your team is debating issues related to design, development, deployment, or operations on AWS.

You’d like to share your AWS expertise with the community and build a reputation as a community expert.

There is no requirement to sign in to AWS re:Post to browse the content. Users who choose to sign in with their AWS account can create a profile, post questions and answers, and interact with the community. Profiles enable users to link their AWS certifications through Credly and to indicate interests in specific AWS technology domains, services, and experts. AWS re:Post automatically shares new questions with these community experts based on their areas of expertise, improving the accuracy of responses as well as encouraging responses for unanswered questions. Opt-in email notifications are also available to help users stay informed.

Over the last four years, AWS re:Post has been used internally by AWS employees helping customers with their cloud journeys. Today, that same trusted technical guidance becomes available to the entire AWS community. Additionally, all active users from the previous AWS Forums have been migrated onto AWS re:Post, as well as the most-viewed content.

Questions from AWS Premium Support customers that do not receive a response from the community are passed on to AWS Support engineers. If the question is related to a customer-specific workload, AWS support will open a support case to take the conversation into a private setting. Note, however, that AWS re:Post is not intended to be used for questions that are time-sensitive or involve any proprietary information, such as customer account details, personally identifiable information, or AWS account resource data.

Have Questions? Need Answers? Try AWS re:Post Today

If you have a technical question about an AWS service or product or are eager to get started on your journey to becoming a recognized community expert, I invite you to get started with AWS re:Post today!

New – Sustainability Pillar for AWS Well-Architected Framework

=======================

The AWS Well-Architected Framework has been helping AWS customers improve their cloud architectures since 2015. The framework consists of design principles, questions, and best practices across multiple pillars: Operational Excellence, Security, Reliability, Performance Efficiency, and Cost Optimization.

Today we are introducing a new Sustainability Pillar to help organizations learn, measure, and improve their workloads using environmental best practices for cloud computing.

Similar to the other pillars, the Sustainability Pillar contains questions aimed at evaluating the design, architecture, and implementation of your workloads to reduce their energy consumption and improve their efficiency. The pillar is designed as a tool to track your progress toward policies and best practices that support a more sustainable future, not just a simple checklist.

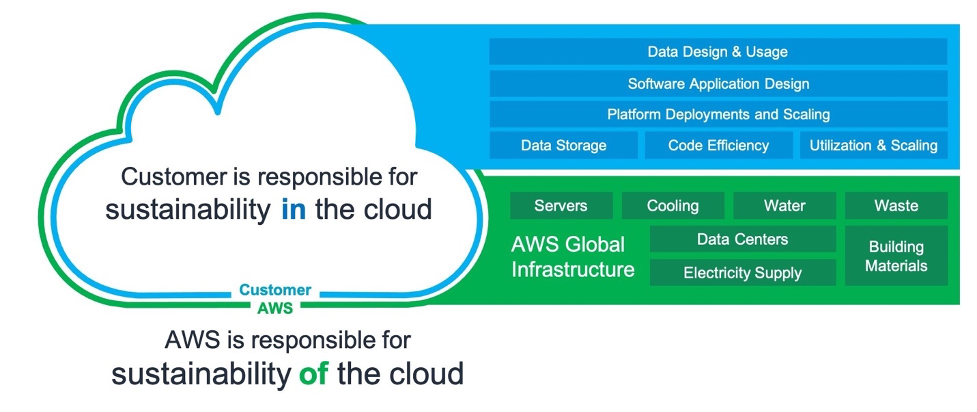

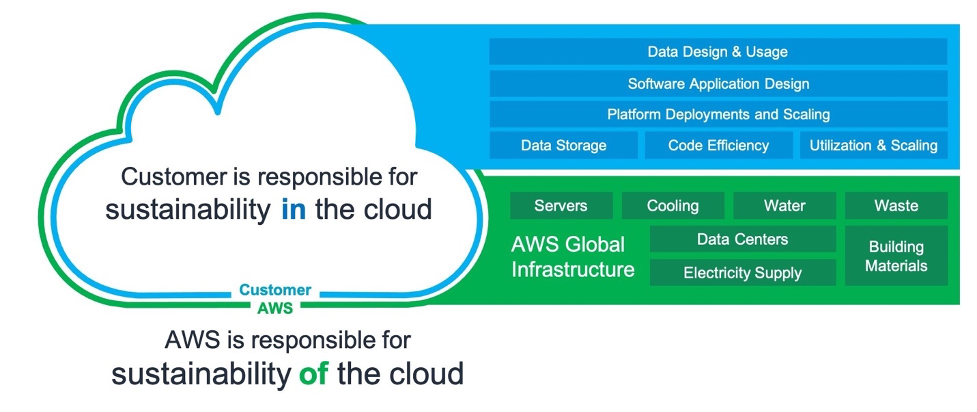

The Shared Responsibility Model of Cloud Sustainability

The shared responsibility model also applies to sustainability. AWS is responsible for the sustainability of the cloud, while AWS customers are responsible for sustainability in the cloud.

The sustainability of the cloud allows AWS customers to reduce associated energy usage by nearly 80% with respect to a typical on-premises deployment. This is possible because of the much higher server utilization, power and cooling efficiency, custom data center design, and continued progress on the path to powering AWS operations with 100% renewable energy by 2025. But we can achieve much more by collectively designing sustainable architectures.

We are introducing the new Sustainability Pillar to help organizations improve their sustainability in the cloud. This is a continuous effort focused on energy reduction and efficiency of all types of workloads. In practice, the pillar helps developers and cloud architects surface the trade-offs, highlight patterns and best practices, and avoid anti-patterns. Examples include selecting an efficient programming language, adopting modern algorithms, using efficient data storage techniques, and deploying correctly sized and efficient infrastructure.

Specifically, the pillar is designed to support organizations in developing a better understanding of the state of their workloads, as well as the impact related to defined sustainability targets, how to measure against these targets, and how to model where they cannot directly measure.

In addition to building sustainable workloads in the cloud, you can use AWS technology to solve broader sustainability challenges. For example, you can reduce the environmental incidents caused by industrial equipment failure by using Amazon Monitron to detect abnormal behavior and conduct preventative maintenance. We call this sustainability through the cloud.

Well-Architected Design Principles for Sustainability in the Cloud

The Sustainability Pillar includes design principles and operational guidance, as well as architectural and software patterns.

The design principles will facilitate good design for sustainability:

Understand your impact – Measure business outcomes and the related sustainability impact to establish performance indicators, evaluate improvements, and estimate the impact of proposed changes over time.

Establish sustainability goals – Set long-term goals for each workload, model return on investment (ROI) and give owners the resources to invest in sustainability goals. Plan for growth and design your architecture to reduce the impact per unit of work such as per user or per operation.

Maximize utilization – Right size each workload to maximize the energy efficiency of the underlying hardware, and minimize idle resources.

Anticipate and adopt new, more efficient hardware and software offerings – Support upstream improvements by your partners, continually evaluate hardware and software choices for efficiencies, and design for flexibility to adopt new technologies over time.

Use managed services – Shared services reduce the amount of infrastructure needed to support a broad range of workloads. Leverage managed services to help minimize your impact and automate sustainability best practices such as moving infrequently accessed data to cold storage and adjusting compute capacity.

Reduce the downstream impact of your cloud workloads – Reduce the amount of energy or resources required to use your services and reduce the need for your customers to upgrade their devices; test using device farms to measure impact and test directly with customers to understand the actual impact on them.
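
The "impact per unit of work" idea from the principles above can be sketched as a simple proxy metric. This is only an illustration and is not part of the Sustainability Pillar itself: the function name `impact_per_unit`, the choice of vCPU-hours as the resource proxy, and the sample figures are all assumptions made for the example.

```python
def impact_per_unit(resource_hours: float, units_of_work: int) -> float:
    """Return a proxy for impact: resources consumed per unit of work.

    resource_hours: provisioned resources over a period (hypothetical proxy,
                    for example vCPU-hours).
    units_of_work:  business output over the same period (for example
                    requests served or active users).
    """
    if units_of_work <= 0:
        raise ValueError("units_of_work must be positive")
    return resource_hours / units_of_work


# Hypothetical figures: traffic grows, and right-sizing keeps the
# footprint from growing at the same rate.
before = impact_per_unit(resource_hours=1_200.0, units_of_work=3_000_000)
after = impact_per_unit(resource_hours=1_500.0, units_of_work=5_000_000)

improvement = 1 - after / before
print(f"vCPU-hours per request before: {before:.6f}")
print(f"vCPU-hours per request after:  {after:.6f}")
print(f"Reduction per unit of work:    {improvement:.0%}")
```

Tracking a ratio like this, rather than total consumption, lets a growing workload still show whether its architecture is becoming more efficient.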

Well-Architected Best Practices for Sustainability

The design principles summarized above correspond to concrete architectural best practices that development teams can apply every day.

Some examples of architectural best practices for sustainability:

Optimize geographic placement of workloads for user locations

Optimize areas of code that consume the most time or resources

Optimize impact on customer devices and equipment

Implement a data classification policy

Use lifecycle policies to delete unnecessary data

Minimize data movement across networks

Optimize your use of GPUs

Adopt development and testing methods that allow rapid introduction of potential sustainability improvements

Increase the utilization of your build environments

Many of these best practices are generic and apply to all workloads, while others are specific to some use cases, verticals, and compute platforms. I’d highly encourage you to dive into these practices and identify the areas where you can achieve the most impact immediately.
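
As one possible illustration of the "use lifecycle policies to delete unnecessary data" practice, here is a minimal sketch that builds an Amazon S3 lifecycle configuration. The helper names, the `logs/` prefix, the bucket name, and the 90-day and 365-day thresholds are assumptions for the example, and the client is passed in so the sketch does not assume how you create it.

```python
def build_lifecycle_configuration(prefix: str,
                                  archive_after_days: int,
                                  delete_after_days: int) -> dict:
    """Build an S3 lifecycle configuration that archives, then expires, objects."""
    if not 0 < archive_after_days < delete_after_days:
        raise ValueError("archive_after_days must be positive and less than delete_after_days")
    return {
        "Rules": [
            {
                "ID": f"archive-then-expire-{prefix.strip('/') or 'all'}",
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                # Move objects to colder storage once they are rarely read.
                "Transitions": [
                    {"Days": archive_after_days, "StorageClass": "GLACIER"}
                ],
                # Delete objects that are no longer needed.
                "Expiration": {"Days": delete_after_days},
            }
        ]
    }


def apply_lifecycle(s3_client, bucket: str, configuration: dict) -> None:
    """Apply the configuration using an S3 client (for example boto3.client("s3"))."""
    s3_client.put_bucket_lifecycle_configuration(
        Bucket=bucket,
        LifecycleConfiguration=configuration,
    )


config = build_lifecycle_configuration("logs/", archive_after_days=90, delete_after_days=365)
print(config["Rules"][0]["ID"])
```

With boto3 installed and credentials configured, `apply_lifecycle(boto3.client("s3"), "my-example-bucket", config)` would apply the rule. The bucket name here is hypothetical.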

Transforming sustainability into a non-functional requirement can result in cost-effective solutions and directly translate to cost savings on AWS, as you only pay for what you use. In some cases, meeting these non-functional targets might involve tradeoffs in terms of uptime, availability, or response time. Where minor tradeoffs are required, the sustainability improvements are likely to outweigh the change in quality of service. It’s important to encourage teams to continuously experiment with sustainability improvements and embed proxy metrics in their team goals.

Available Now

The AWS Well-Architected Sustainability Pillar is a new addition to the existing framework. By using the design principles and best practices defined in the Sustainability Pillar Whitepaper, you can make informed decisions balancing security, cost, performance, reliability, and operational excellence with sustainability outcomes for your workloads on AWS.

Learn more about the new Sustainability Pillar.

— Alex

Announcing General Availability of Construct Hub and AWS Cloud Development Kit Version 2

=======================

Today, I’m happy to announce that both the Construct Hub and AWS Cloud Development Kit (AWS CDK) version 2 are now generally available (GA).

The AWS CDK is an open-source framework that simplifies working with cloud resources using familiar programming languages: C#, TypeScript, Java, Python, and Go (in developer preview). Within their applications, developers create and configure cloud resources using reusable types called constructs, which they use just as they would any other types in their chosen language. It’s also possible to write custom constructs, which can then be shared across your teams and organization.

With the new releases generally available today, defining your cloud resources using the CDK is now even more simple and convenient, and the Construct Hub enables sharing of open-source construct libraries within the wider cloud development community.

AWS Cloud Development Kit (AWS CDK) Version 2

Version 2 of the AWS CDK focuses on productivity improvements for developers working with CDK projects. The individual packages (libraries) used in version 1 to distribute and consume the constructs available for each AWS service have been consolidated into a single monolithic package. This simplifies dependency management in your CDK applications and when publishing construct libraries. It also makes working with CDK projects that reference constructs from multiple services more convenient, especially when those services have peer dependencies (for example, an Amazon Simple Storage Service (Amazon S3) bucket that needs to be configured with an AWS Key Management Service (KMS) key).

Version 1 of the CDK contained some APIs that were experimental. Over time, some of these were marked as deprecated in favor of other preferred approaches based on community experience and feedback. The deprecated APIs have been removed in version 2 to aid clarity for developers working with construct properties and methods. Additionally, the CDK team has adopted a new release process for creating and releasing experimental constructs without needing to include them in the monolithic GA package. From version 2 onwards, the monolithic CDK package will contain only stable APIs that customers can always rely on. Experimental APIs will be shipped in separate packages, making it easier for the team and community to revise them and ensure customers don’t incur the accidental breaking changes that caused some issues in version 1.

You can read about all the changes in version 2 of the AWS CDK, and how you can update your CDK applications to use it, in the Developer Guide.

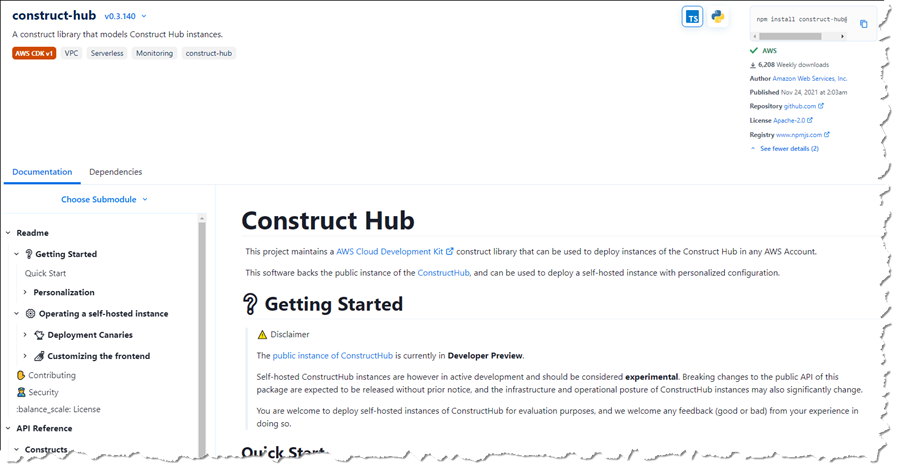

Construct Hub

The Construct Hub is a single home where the open-source community, AWS, and cloud technology providers can discover and share construct libraries for all CDKs. The most popular CDKs today are AWS CDK, which generates AWS CloudFormation templates; cdk8s, which generates Kubernetes manifests; and cdktf, which generates Terraform JSON files. Anyone can create a CDK, and we are open to adding other construct-based tools as they evolve!

As of this post’s publication, the Construct Hub contains over 700 CDK libraries, including core AWS CDK modules, to help customers build their cloud applications using their preferred programming languages, for their preferred use case, and with their preferred provisioning engine (CloudFormation, Terraform, or Kubernetes). For example, there are 99 libraries for working with containers, 210 libraries for serverless development, 53 libraries for websites, 65 libraries for integrations with cloud services providers like Datadog, Logz.io, Cloudflare, Snyk, and more, and dozens of additional libraries which integrate with Slack, Twitter, GitLab, Grafana, Prometheus, WordPress, Next.js, and more. Many of these were created by the open-source community.

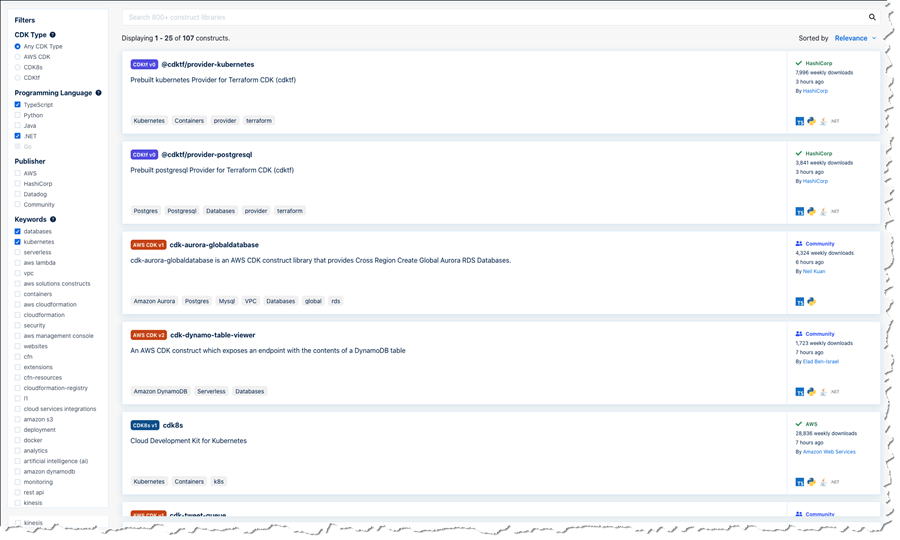

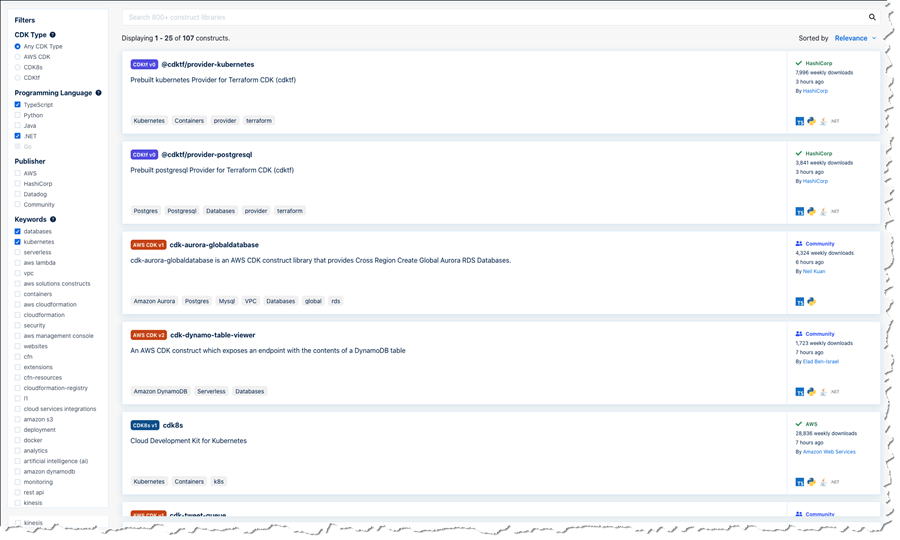

Anyone can contribute construct libraries to the Construct Hub. New libraries that you wish to share need to be published to the npm public registry and tagged. The Construct Hub will automatically detect the published libraries and make them visible and discoverable to consumers on the hub. Consumers can search and filter for construct libraries for familiar technologies, third-party integrations, AWS services, and use cases such as compliance, monitoring, websites, containers, serverless, and more. Filters are available for publisher, language, CDK type, and keywords. In the screenshot below, I’m searching the hub for .NET and TypeScript libraries related to databases and Kubernetes across all CDKs. I could also filter to a specific CDK or a CDK version.

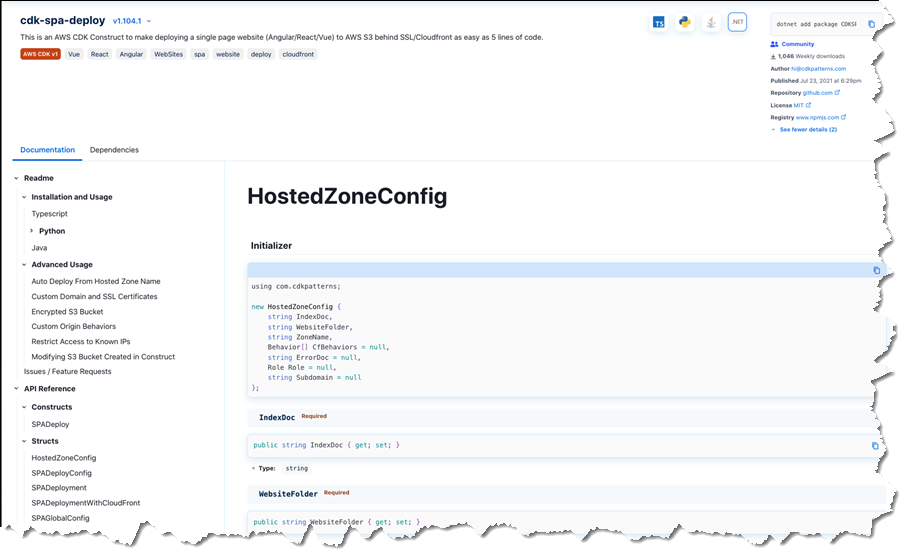

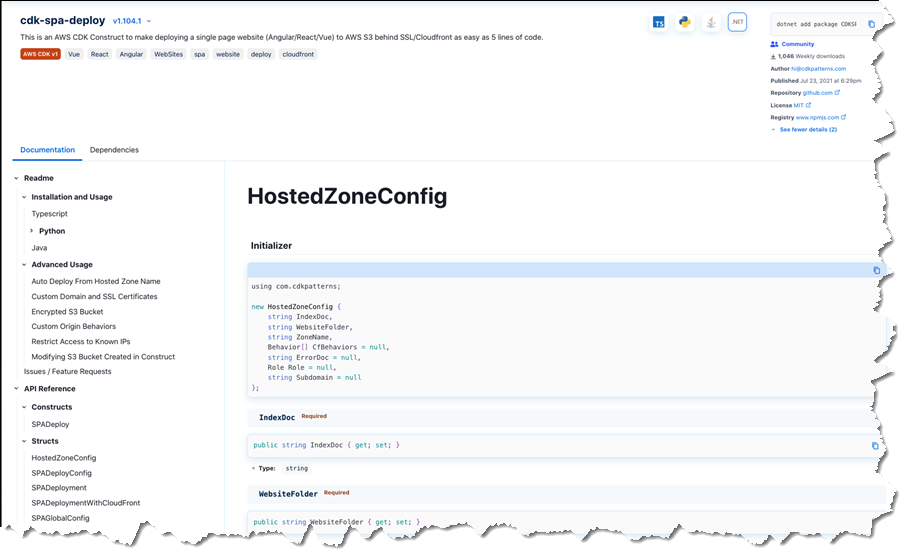

Publishers determine which programming languages should be supported by their packages. Construct Hub then automatically generates API references for all the supported languages and transliterates all code samples the authors provide to those supported languages. The screenshots below show an example of language-specific API documentation for the cdk-spa-deploy construct library, which you can use to deploy a single-page web application (SPA). First, the documentation for .NET developers working with the library:

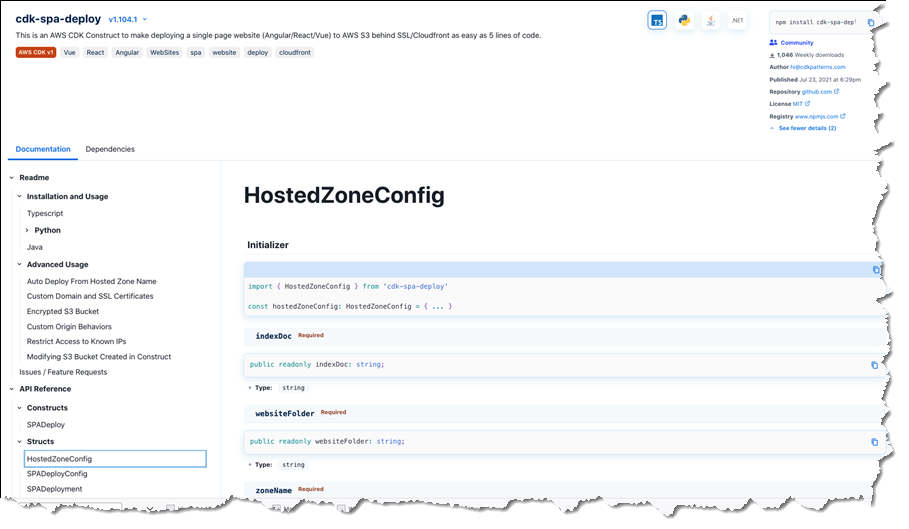

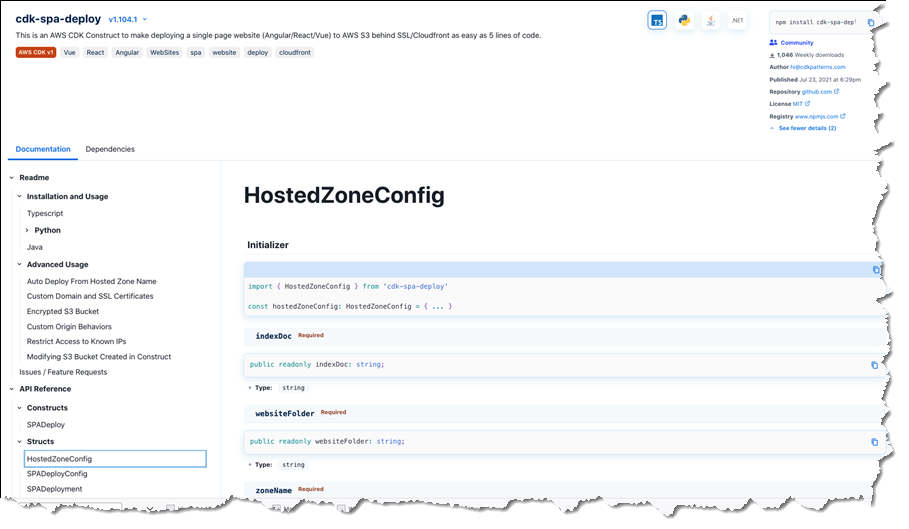

The second image below shows the generated documentation for the same construct library, but this time for TypeScript developers:

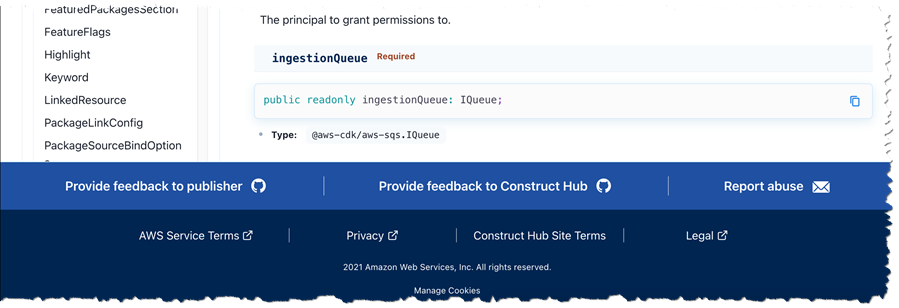

All construct libraries published to the Construct Hub must be open-source. This enables users to exercise their good judgment and perform due diligence to verify that the libraries meet their security and compliance needs, just as they would with any other third-party package source consumed in their applications. Issues with a published construct library can be raised on the library’s GitHub repository using convenient links accessible from the hub entry for the library.

The Construct Hub employs a trust-through-transparency model. Users can report libraries for abuse by clicking the ‘Report abuse’ link in the hub, which will engage AWS Support teams to investigate the issue and remove the offending packages from Construct Hub listings if problems are found. Users can also send us feedback by clicking a ‘Provide feedback to Construct Hub’ link, which allows them to open an issue on our GitHub repository. And last but not least, they can click ‘Provide feedback to publisher’, which redirects to the repository the publisher provided with the package.

Just like the AWS CDK, the Construct Hub is open-source, built as a construct, and is, in fact, itself available on the Construct Hub! If you’re interested, you can see how the CDK team uses the CDK to develop the hub in their GitHub repository.

Get Started with the AWS CDK Version 2 and the Construct Hub, Today

If you’ve built CDK applications to define your cloud infrastructure using version 1 of the AWS Cloud Development Kit (AWS CDK), then I encourage you to take a look at the documented changes for version 2 and see how the new version can help simplify your project setup going forward. And, if you’re interested in sharing new constructs with the wider community, please get involved with the Construct Hub. You can find more details on how to build and share reusable construct libraries on the Construct Hub in the CDK team’s blog post on best practices.

— Steve

Use New Amazon EC2 M1 Mac Instances to Build & Test Apps for iPhone, iPad, Mac, Apple Watch, and Apple TV

=======================

Last year at AWS re:Invent, Jeff Barr wrote about the exciting availability of Amazon Elastic Compute Cloud (Amazon EC2) Mac instances. Today, we’re announcing the preview of a new EC2 M1 Mac instance.

The introduction of EC2 Mac instances brought the flexibility, scalability, and cost benefits of AWS to all Apple developers. EC2 Mac instances are dedicated Mac mini computers attached through Thunderbolt to the AWS Nitro System, which lets the Mac mini appear and behave like another EC2 instance. It connects to your Amazon Virtual Private Cloud (VPC), boots from Amazon Elastic Block Store (EBS) volumes, and leverages EBS snapshots, security groups, and other AWS services. EC2 Mac instances let you scale your build and test fleets of Macs, paying as you go. There is no hypervisor involved, and you get full bare metal performance of the underlying Mac mini. An EC2 dedicated host reserves a Mac mini for your usage.

The availability (in preview) of EC2 M1 Mac instances lets you access machines built around the Apple-designed M1 System on Chip (SoC). If you are a Mac developer and are re-architecting your apps to natively support Macs with Apple silicon, you may now build and test your apps and take advantage of all the benefits of AWS. Developers building for iPhone, iPad, Apple Watch, and Apple TV will also benefit from faster builds. EC2 M1 Mac instances deliver up to 60% better price performance over the x86-based EC2 Mac instances for iPhone and Mac app build workloads.

EC2 M1 Mac instances are powered by a combination of two hardware components:

The Mac mini, featuring M1 SoC with 8 CPU cores, 8 GPU cores, 16 GiB of memory, and a 16 core Apple Neural Engine.

The AWS Nitro System, providing up to 10 Gbps of VPC network bandwidth and 8 Gbps of EBS storage bandwidth through a high-speed Thunderbolt connection.

How to Get Started

As I explained previously, when using EC2 Mac instances, there is no virtual machine involved. These are running on bare metal servers, each hosting a Mac mini. The first step, therefore, involves grabbing a dedicated server. I open the AWS Management Console, navigate to the Amazon EC2 section, then I select Dedicated Hosts. I select Allocate Dedicated Host to allocate a server to my AWS account.

Alternatively, I may use the AWS Command Line Interface (CLI).

➜ ~ aws ec2 allocate-hosts \
    --instance-type mac2.metal \
    --availability-zone us-east-2b \
    --quantity 1
{
    "HostIds": [
        "h-0fxxxxxxx90"
    ]
}

Once the host is allocated, I start an EC2 instance on it. The procedure is no different from starting any EC2 instance type. I just have to ensure I select a macOS AMI version that suits my requirements. I select the mac2.metal instance type and select host Tenancy and the dedicated Host I just created.

Alternatively, I may use the CLI.

➜ ~ aws ec2 run-instances \
    --instance-type mac2.metal \
    --key-name my_key \
    --placement HostId=h-0fxxxxxxx90 \
    --security-group-ids sg-01000000000000032 \
    --image-id AWS_OR_YOUR_AMI_ID
{
    "Groups": [],
    "Instances": [
        {
            "AmiLaunchIndex": 0,
            "ImageId": "ami-01xxxxbd",
            "InstanceId": "i-08xxxxx5c",
            "InstanceType": "mac2.metal",
            "KeyName": "my_key",
            "LaunchTime": "2021-11-08T16:47:39+00:00",
            "Monitoring": {
                "State": "disabled"
            },
            ... redacted for brevity ....
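
If you prefer to script these two steps, the same flow can be sketched in Python. This is a minimal sketch rather than an official sample: the function name `allocate_and_launch_mac` is hypothetical, and it expects an EC2 client (for example `boto3.client("ec2")`) to be passed in. The `allocate_hosts` and `run_instances` calls mirror the two CLI commands above.

```python
def allocate_and_launch_mac(ec2, availability_zone, image_id, key_name,
                            security_group_ids, instance_type="mac2.metal"):
    """Allocate one Dedicated Host, then launch one instance on it.

    ec2 is an EC2 client object; nothing here creates it for you.
    Returns a (host_id, instance_id) tuple.
    """
    # Step 1: reserve the dedicated host (same as `aws ec2 allocate-hosts`).
    hosts = ec2.allocate_hosts(
        InstanceType=instance_type,
        AvailabilityZone=availability_zone,
        Quantity=1,
    )
    host_id = hosts["HostIds"][0]

    # Step 2: start an instance on that host (same as `aws ec2 run-instances`).
    reservation = ec2.run_instances(
        InstanceType=instance_type,
        KeyName=key_name,
        ImageId=image_id,
        SecurityGroupIds=security_group_ids,
        Placement={"HostId": host_id, "Tenancy": "host"},
        MinCount=1,
        MaxCount=1,
    )
    return host_id, reservation["Instances"][0]["InstanceId"]
```

Remember that the host keeps accruing charges after the instance stops, so a real script would also release the host when the work is done.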

When you use EC2 Mac instances for the first time, you’re likely to ask questions such as, “How do I connect through Apple Remote Desktop?” or “How do I increase the size of the APFS file system on the EBS volume?” The EC2 Mac documentation covers the answers for you and provides examples of commands to run on macOS to perform these common tasks.

I use SSH to connect to the newly launched instance as usual.

I may enable Apple Remote Desktop and start a VNC session to the EC2 instance. The EC2 Mac instance documentation page has the details.

Availability and Pricing

EC2 M1 Mac instances are now available in preview in US East (N. Virginia) and US West (Oregon), with other AWS Regions coming at launch.

Pricing metrics are similar to the previous generation of EC2 Mac instances. You are charged per hour of reservation of the dedicated host, not for the time the instance is running, and there is a minimum charge of 24 hours for reserving a dedicated host.

In the two preview Regions, the on-demand price is $0.6498 per hour. You can save up to 42 percent over the on-demand price with Savings Plans. Check our Dedicated Host on-demand pricing page, as well as the Savings Plans page to learn the details.
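
To make the 24-hour minimum concrete, here is a small worked calculation using the on-demand price quoted above. It is a simplified sketch: the function name is hypothetical, it assumes whole hours, and it ignores Savings Plans and any other charges such as EBS storage.

```python
from decimal import Decimal

ON_DEMAND_HOURLY = Decimal("0.6498")  # on-demand price quoted for the preview Regions
MINIMUM_HOURS = 24                    # minimum charge for reserving a dedicated host


def dedicated_host_cost(hours_allocated: int) -> Decimal:
    """Estimate the on-demand cost in USD for a host held for whole hours."""
    billable_hours = max(hours_allocated, MINIMUM_HOURS)
    return ON_DEMAND_HOURLY * billable_hours


print(dedicated_host_cost(2))   # 15.5952 (the 24-hour minimum applies)
print(dedicated_host_cost(24))  # 15.5952
print(dedicated_host_cost(30))  # 19.4940
```

In other words, a two-hour build on a freshly allocated host costs the same as keeping that host for a full day, so it pays to batch work onto hosts you have already reserved.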

You can sign up for the preview of EC2 M1 Mac instances today!

-- seb

New – Site-to-Site Connectivity with AWS Direct Connect SiteLink

=======================

We are launching AWS Direct Connect SiteLink, a new capability of AWS Direct Connect that lets you create connections between your on-premises networks through the AWS global network backbone.

Until today, when you needed direct connectivity between your data centers or branch offices, you had to rely on public internet or expensive and hard-to-deploy fixed networks. These are geographically constrained and can be tied to long-term contracts. This rigidity becomes a pain point as you expand your businesses globally. In turn, you’re required to create custom workarounds to interconnect networks from different providers, which increases your operating costs.

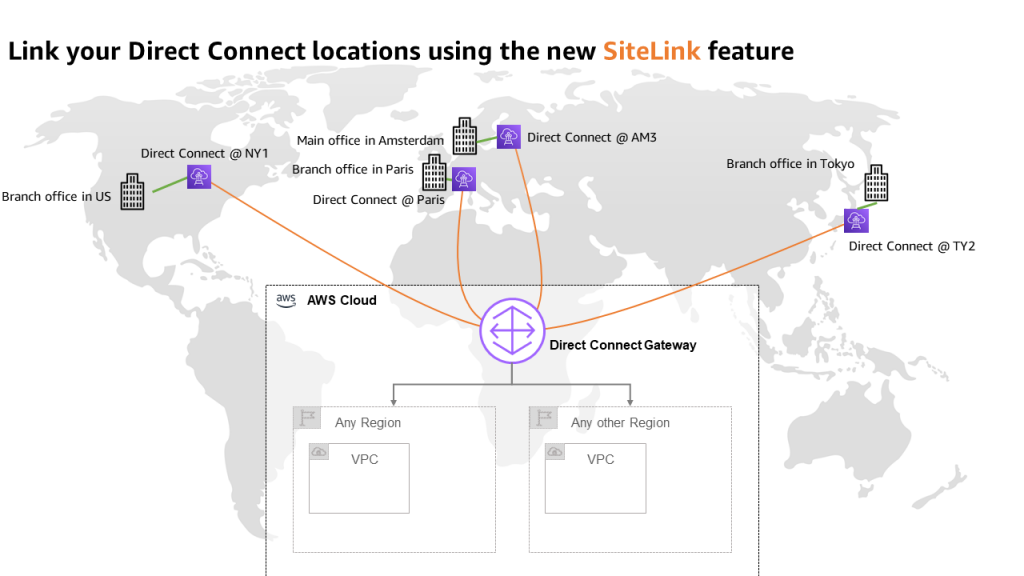

Starting today, you may connect your sites through Direct Connect locations, without sending your traffic through an AWS Region. We have 108 Direct Connect locations available in 32 countries as I am writing this post, located across Africa, Americas, Asia-Pacific, Europe, and the Middle East. Traffic flows from one Direct Connect location to another following the shortest possible path. You no longer need to connect through the closest AWS Region and manage and configure an AWS Transit Gateway for site-to-site network connectivity.

You can take advantage of Direct Connect’s reliability and global footprint to build a network that grows with your business, with no long-term contracts, flexible pay-as-you-go pricing, and a wide range of port-speeds, from 50 Mbps to 100 Gbps. SiteLink also integrates with other AWS services, letting you reach your VPCs, other AWS services, and your on-premises networks from your Direct Connect connections.

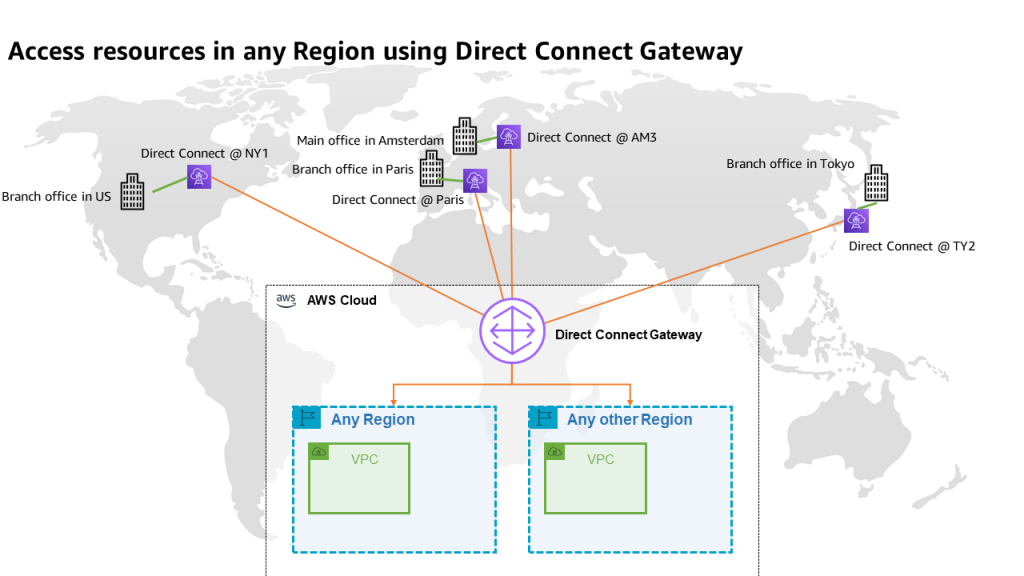

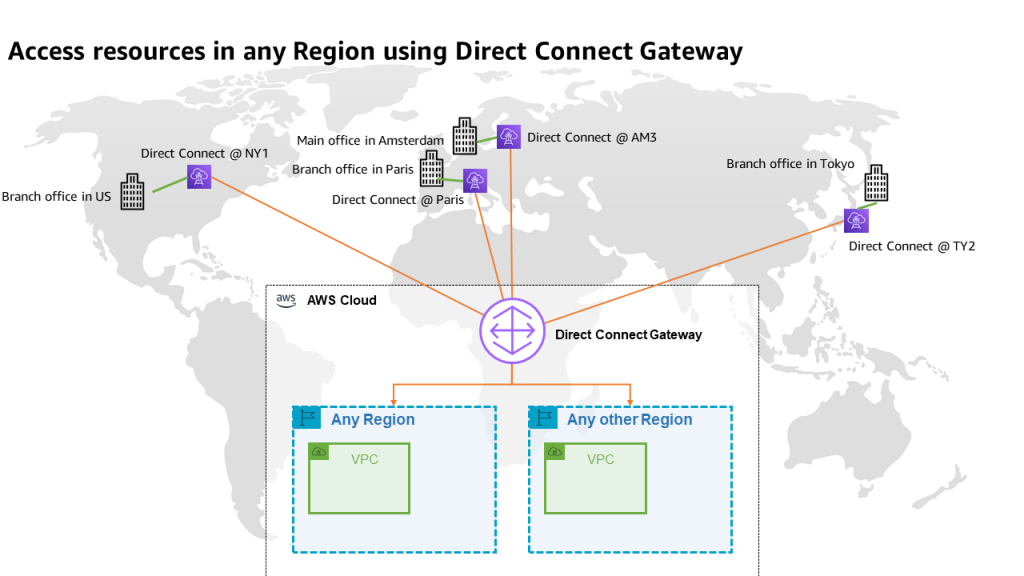

When talking about network topology, a small diagram is always more descriptive than long phrases.

The following diagram shows the way that you use Direct Connect today. Direct Connect is currently optimized to let you reach your AWS resources running in any Region as quickly as possible. Sending data from one Direct Connect location to another is not possible.

Once you connect your locations (NY1, AM3, Paris, and TY2 in the diagram) to a Direct Connect gateway, those connections can reach any AWS Region (except the two AWS China Regions). No peering between Regions is necessary, because Direct Connect gateways are global resources.

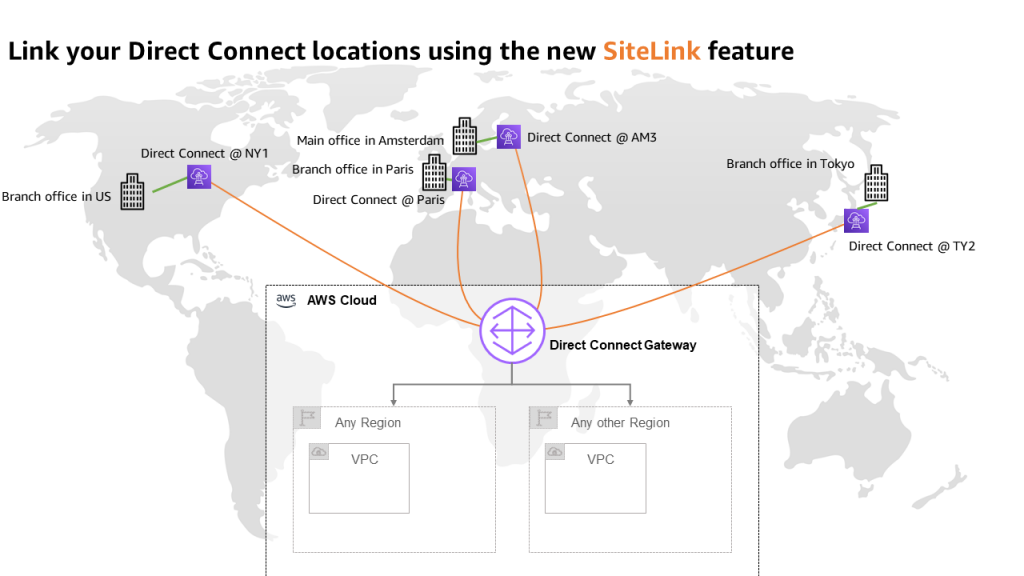

The following diagram shows how you connect multiple sites using SiteLink. The data flows between Direct Connect locations without going through an AWS Region.

How to Get Started?

Configuring these connections is very similar to what you do today. The first step is to connect my network to Direct Connect locations. After that, SiteLink can be enabled or disabled in minutes.

Using the AWS Management Console, I navigate to the Direct Connect section, and I select Create virtual interface to create a virtual interface. Under the Additional Settings section, I make sure the SiteLink switch is turned on. I repeat this for each additional virtual interface, one per site that I want to connect.
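
The console steps above can also be sketched programmatically. The following is a hedged example rather than an official sample: the function name `create_sitelink_vif` is hypothetical, it expects a Direct Connect client (for example `boto3.client("directconnect")`) to be passed in, and it assumes a private virtual interface attached to an existing Direct Connect gateway, with `enableSiteLink` as the API counterpart of the console switch.

```python
def create_sitelink_vif(dx, connection_id, name, vlan, asn, gateway_id):
    """Create a private virtual interface with SiteLink turned on.

    dx is a Direct Connect client object. Returns the new virtual interface ID.
    """
    response = dx.create_private_virtual_interface(
        connectionId=connection_id,
        newPrivateVirtualInterface={
            "virtualInterfaceName": name,
            "vlan": vlan,
            "asn": asn,
            "directConnectGatewayId": gateway_id,
            # Counterpart of the SiteLink switch under Additional Settings.
            "enableSiteLink": True,
        },
    )
    return response["virtualInterfaceId"]
```

Calling this once per site, each time with that site's connection ID, corresponds to repeating the console procedure for every location you want to connect.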

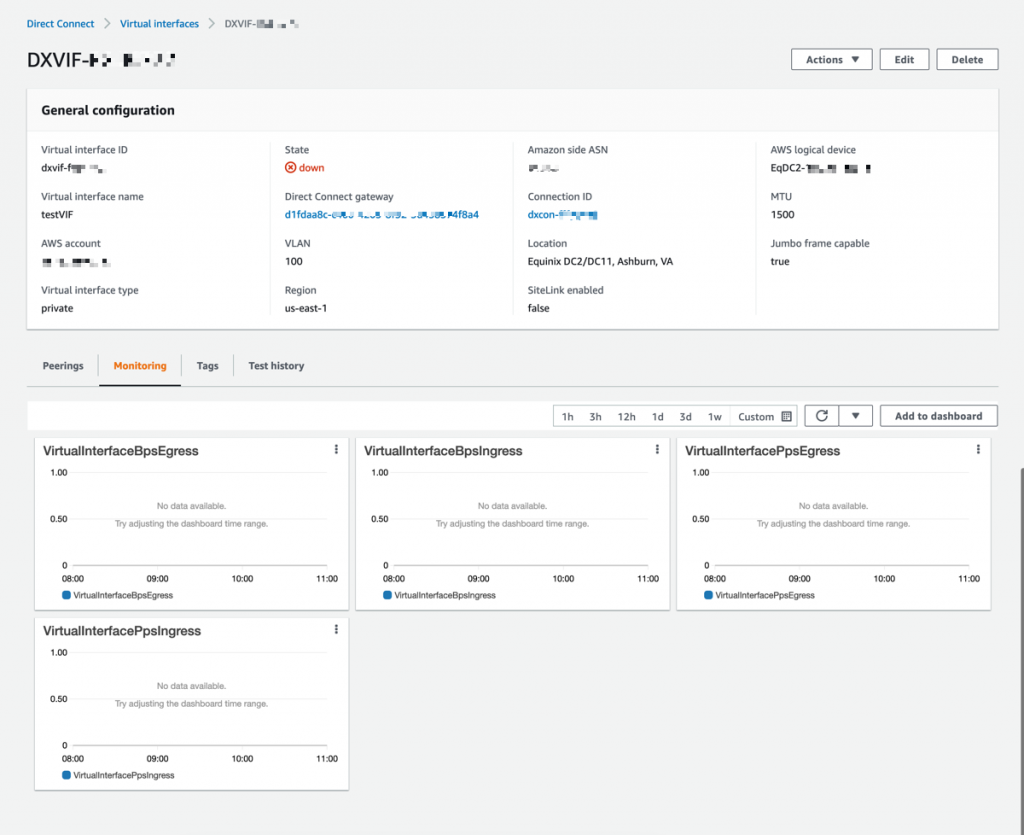

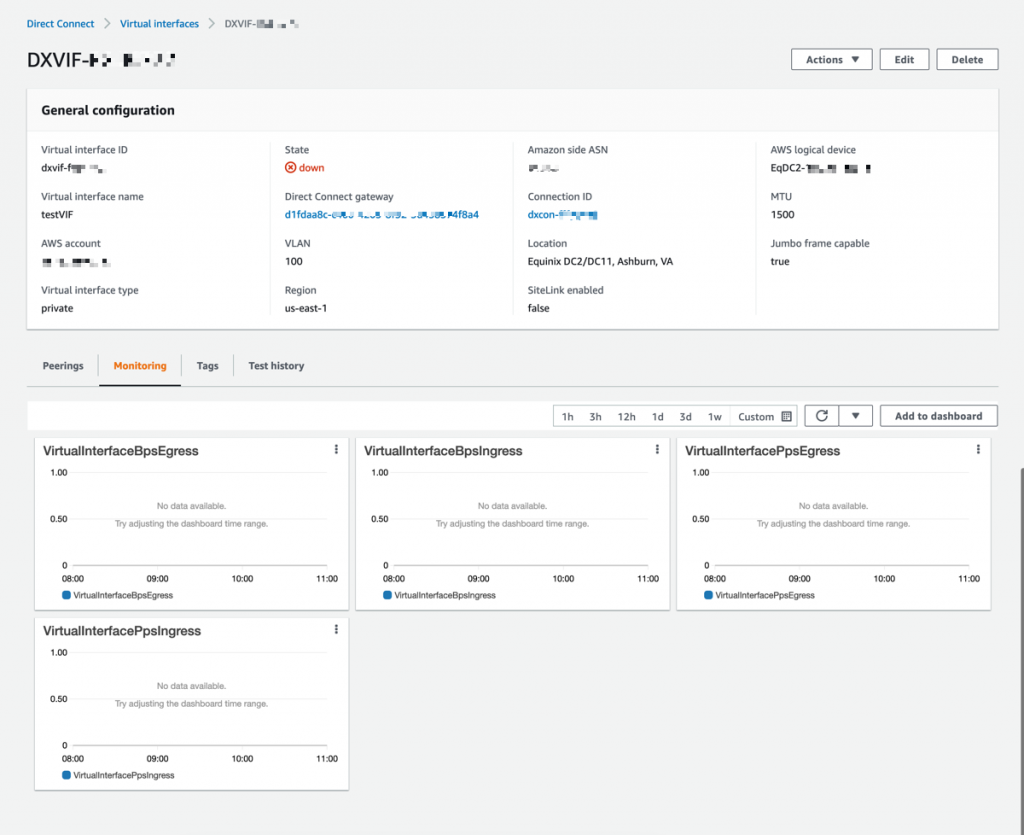

I have access to similar monitoring dashboards and metrics published to CloudWatch. I select my virtual interface, and then navigate to the Monitoring tab (hopefully your ViF will have more data available than mine that was created just for this post).

Availability and Pricing

You can connect your on-premises networks or branch offices to any of our Direct Connect locations available today, except in China.

Pricing is pay-as-you-go, with no commitment or recurring fees. In addition to existing Direct Connect charges, your monthly bill will include a price-per-hour for SiteLink virtual interfaces, as well as the cost of SiteLink data transfer. Check the pricing page to get the details.

Go ahead and start connecting your on-premises locations together with Direct Connect SiteLink!

-- seb

New – Enhanced Dead-letter Queue Management Experience for Amazon SQS Standard Queues

=======================

Hundreds of thousands of customers use Amazon Simple Queue Service (SQS) to build message-based applications to decouple and scale microservices, distributed systems, and serverless apps. When a message cannot be successfully processed by the queue consumer, you can configure SQS to store it in a dead-letter queue (DLQ).

As a software developer or architect, you’d like to examine and review unconsumed messages in your DLQs to figure out why they couldn’t be processed, identify patterns, resolve code errors, and ultimately reprocess these messages in the original queue. The life cycle of these unconsumed messages is part of your error-handling workflow, which is often manual and time consuming.

Today, I’m happy to announce the general availability of a new enhanced DLQ management experience for SQS standard queues that lets you easily redrive unconsumed messages from your DLQ to the source queue.

This new functionality is available in the SQS console and helps you focus on the important phase of your error handling workflow, which consists of identifying and resolving processing errors. With this new development experience, you can easily inspect a sample of the unconsumed messages and move them back to the original queue with a click, and without writing, maintaining, and securing any custom code. This new experience also takes care of redriving messages in batches, reducing overall costs.

DLQ and Lambda Processor Setup

If you’re already comfortable with the DLQ setup, then skip the setup and jump into the new DLQ redrive experience.

First, I create two queues: the source queue and the dead-letter queue.

I edit the source queue and configure the Dead-letter queue section. Here, I pick the DLQ and configure the Maximum receives, which is the maximum number of times a message can be received by consumers before it is sent to the DLQ. For this demonstration, I’ve set it to one. This means that every failed message goes to the DLQ immediately. In a real-world environment, you might want to set a higher number depending on your requirements and based on what a failure means with respect to your application.

I also edit the DLQ to make sure that only my source queue is allowed to use this DLQ. This configuration is optional: when this Redrive allow policy is disabled, any SQS queue can use this DLQ. There are cases where you want to reuse a single DLQ for multiple queues. But it’s usually considered a best practice to set up an independent DLQ per source queue, which simplifies the redrive phase without affecting cost. Keep in mind that you’re charged based on the number of API calls, not the number of queues.
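
The two console settings described above correspond to the `RedrivePolicy` and `RedriveAllowPolicy` queue attributes. Here is a minimal sketch of how they could be set from code. The helper names are hypothetical, the queue URLs and ARNs are placeholders you would supply, and the SQS client (for example `boto3.client("sqs")`) is passed in.

```python
import json


def redrive_policy(dlq_arn: str, max_receive_count: int = 1) -> dict:
    """Attribute for the source queue: which DLQ to use and after how many receives."""
    return {
        "RedrivePolicy": json.dumps({
            "deadLetterTargetArn": dlq_arn,
            "maxReceiveCount": str(max_receive_count),
        })
    }


def redrive_allow_policy(source_queue_arns: list) -> dict:
    """Attribute for the DLQ: only the listed source queues may use it."""
    return {
        "RedriveAllowPolicy": json.dumps({
            "redrivePermission": "byQueue",
            "sourceQueueArns": source_queue_arns,
        })
    }


def configure_dlq(sqs, source_queue_url, source_queue_arn, dlq_url, dlq_arn,
                  max_receive_count=1):
    """Wire a source queue to its DLQ and restrict the DLQ to that source queue."""
    sqs.set_queue_attributes(
        QueueUrl=source_queue_url,
        Attributes=redrive_policy(dlq_arn, max_receive_count),
    )
    sqs.set_queue_attributes(
        QueueUrl=dlq_url,
        Attributes=redrive_allow_policy([source_queue_arn]),
    )
```

The default of one receive matches this demonstration. As noted above, a production queue would usually allow a few retries before giving up on a message.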

Once the DLQ is correctly set up, I need a processor. Let’s implement a simple message consumer using AWS Lambda.

The Lambda function written in Python will iterate over the batch of incoming messages, fetch two values from the message body, and print the sum of these two values.

import json

def lambda_handler(event, context):
    for record in event['Records']:
        payload = json.loads(record['body'])
        value1 = payload['value1']
        value2 = payload['value2']
        value_sum = value1 + value2
        print("the sum is %s" % value_sum)

    return "OK"

The code above assumes that each message’s body contains two integer values that can be summed, without dealing with any validation or error handling. As you can imagine, this will lead to trouble later on.

Before processing any messages, you must grant this Lambda function enough permissions to read messages from SQS and configure its trigger. For the IAM permissions, I use the managed policy named AWSLambdaSQSQueueExecutionRole, which grants permissions to invoke sqs:ReceiveMessage, sqs:DeleteMessage, and sqs:GetQueueAttributes.

I use the Lambda console to set up the SQS trigger. I could achieve the same from the SQS console too.

Now I’m ready to process new messages using Send and receive messages for my source queue in the SQS console. I write {"value1": 10, "value2": 5} in the message body, and select Send message.

When I look at the CloudWatch logs of my Lambda function, I see a successful invocation.

START RequestId: 637888a3-c98b-5c20-8113-d2a74fd9edd1 Version: $LATEST

the sum is 15

END RequestId: 637888a3-c98b-5c20-8113-d2a74fd9edd1

REPORT RequestId: 637888a3-c98b-5c20-8113-d2a74fd9edd1 Duration: 1.31 ms Billed Duration: 2 ms Memory Size: 128 MB Max Memory Used: 39 MB Init Duration: 116.90 ms

Troubleshooting powered by DLQ Redrive

Now what if a different producer starts publishing messages with the wrong format? For example, {"value1": "10", "value2": 5}. The first number is a string and this is quite likely to become a problem in my processor.

In fact, this is what I find in the CloudWatch logs:

START RequestId: 542ac2ca-1db3-5575-a1fb-98ce9b30f4b3 Version: $LATEST

[ERROR] TypeError: can only concatenate str (not "int") to str

Traceback (most recent call last):

File "/var/task/lambda_function.py", line 8, in lambda_handler

value_sum = value1 + value2

END RequestId: 542ac2ca-1db3-5575-a1fb-98ce9b30f4b3

REPORT RequestId: 542ac2ca-1db3-5575-a1fb-98ce9b30f4b3 Duration: 1.69 ms Billed Duration: 2 ms Memory Size: 128 MB Max Memory Used: 39 MB

To figure out what’s wrong in the offending message, I use the new SQS redrive functionality, selecting DLQ redrive in my dead-letter queue.

I use Poll for messages and fetch all unconsumed messages from the DLQ.

And then I inspect the unconsumed message by selecting it.

The problem is clear, and I decide to update my processing code to handle this case properly. In an ideal world, this is an upstream issue that should be fixed in the message producer. But let’s assume that I can’t control that system and it’s critically important for the business that I process this new type of message.

Therefore, I update the processing logic as follows:

import json

def lambda_handler(event, context):

    for record in event['Records']:

        payload = json.loads(record['body'])

        value1 = int(payload['value1'])

        value2 = int(payload['value2'])

        value_sum = value1 + value2

        print("the sum is %s" % value_sum)

        # do some more stuff

    return "OK"
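Before redriving, the updated handler can be checked locally against both message formats. The sketch below repeats the handler so that it runs on its own; make_sqs_event is a hypothetical helper that builds only the Records and body fields the handler reads, whereas a real SQS event carries more attributes.

```python
import io
import json
from contextlib import redirect_stdout


def lambda_handler(event, context):
    for record in event['Records']:
        payload = json.loads(record['body'])
        value1 = int(payload['value1'])
        value2 = int(payload['value2'])
        value_sum = value1 + value2
        print("the sum is %s" % value_sum)
    return "OK"


def make_sqs_event(*bodies):
    """Hypothetical helper: wrap payloads in the minimal event shape used above."""
    return {"Records": [{"body": json.dumps(body)} for body in bodies]}


event = make_sqs_event(
    {"value1": 10, "value2": 5},    # original format
    {"value1": "10", "value2": 5},  # format that previously failed
)

# Capture what the handler prints so it can be inspected or asserted on.
captured = io.StringIO()
with redirect_stdout(captured):
    result = lambda_handler(event, None)

print(captured.getvalue(), end="")
```

Note that int() still raises ValueError for a non-numeric string such as "abc", so a message like that would fail again and end up back in the DLQ.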

Now that my code is ready to process the unconsumed message, I start a new redrive task from the DLQ to the source queue.

By default, SQS will redrive unconsumed messages to the source queue. But you could also specify a different destination and provide a custom velocity to set the maximum number of messages per second.
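To build intuition for the velocity setting, here is a toy in-memory model of a redrive. It is not how SQS implements the feature and it calls no AWS API; the redrive function, the queues, and the one-second "tick" are all assumptions made for illustration.

```python
from collections import deque


def redrive(dlq, destination, max_per_second):
    """Move every message from dlq to destination, at most max_per_second per tick.

    Returns the number of simulated one-second ticks the move took.
    """
    if max_per_second < 1:
        raise ValueError("max_per_second must be at least 1")
    ticks = 0
    while dlq:
        # Move one batch, bounded by the configured velocity.
        for _ in range(min(max_per_second, len(dlq))):
            destination.append(dlq.popleft())
        ticks += 1
    return ticks


# Five unconsumed messages waiting in the simulated dead-letter queue.
dlq = deque('{"value1": "%d", "value2": 5}' % n for n in range(5))
source_queue = deque()

ticks = redrive(dlq, source_queue, max_per_second=2)
print(ticks, len(source_queue), len(dlq))
```

A lower velocity spreads the redriven messages over more time, which can protect a consumer that is still recovering.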

I wait for the redrive task to complete by monitoring the redrive status in the console. This new section always shows the status of the most recent redrive task.

The message has been moved back to the source queue and successfully processed by my Lambda function. Everything looks fine in my CloudWatch logs.

START RequestId: 637888a3-c98b-5c20-8113-d2a74fd9edd1 Version: $LATEST

the sum is 15

END RequestId: 637888a3-c98b-5c20-8113-d2a74fd9edd1

REPORT RequestId: 637888a3-c98b-5c20-8113-d2a74fd9edd1 Duration: 1.31 ms Billed Duration: 2 ms Memory Size: 128 MB Max Memory Used: 39 MB Init Duration: 116.90 ms

Available Today at No Additional Cost

Today you can start leveraging the new DLQ redrive experience to simplify your development and troubleshooting workflows, without any additional cost. This new console experience is available in all AWS Regions where SQS is available, and we’re looking forward to hearing your feedback.

Check out the DLQ redrive documentation here.

— Alex

Last AWS re:Invent 2020, we announced

Before the end of the current LTS period, you will be able to use your AWS account to complete the FreeRTOS EMP registration on the FreeRTOS console, review and agree to the associated terms and conditions, select the LTS version, and buy an annual subscription. You will then gain access to the private repository where you’ll receive .zip files containing a git repo with chosen libraries, patches, and related notifications.